Learning

Learn how to generate blog posts, articles, and other written content with AI using the BLOOM language model, a truly open-source alternative to GPT-3. It's also free: with a click of a button in Google Colab you can produce AI-generated blog posts and articles.

This is useful for content marketers who want to use AI to scale content writing.

Colab Code used here - https://github.com/amrrs/ai-content-gen-with-bloom

#gpt3 #aicontent

Self-attention and the transformer architecture have broken many benchmarks and enabled widespread progress in NLP. However, at this point neither researchers nor companies in industry (with a few exceptions) have leveraged them in a time series context. This talk will explore both the barriers and promise of self-attention models and transfer learning in a time series context. The talk will also look into why time series tasks (forecasting/prediction) have not had their BERT/ImageNet moment and what can be done to enable transfer learning on temporal data. The extremely limited COVID-19 time series forecasting dataset will be used as an example of the need to address these limited-data scenarios and enable effective few-shot/transfer learning more generally.

Isaac Godfried is a machine learning engineer at Monster focusing on the data and machine learning platform. Prior to his current position Isaac worked on machine learning problems in both retail and healthcare. He has also participated in many Kaggle competitions. Isaac’s main focus is to remove barriers related to the use of deep learning in industry. Specifically, this involves researching techniques like transfer and meta learning for data-constrained scenarios, designing tools to effectively track and manage experiments, and creating frameworks to deploy models at scale. In his spare time Isaac also conducts research in AI for good causes like medicine and climate.

👩🏼🚀Weights and Biases:

We’re always free for academics and open source projects. Email carey@wandb.com with any questions or feature suggestions.

- Blog: https://www.wandb.com/articles

- Gallery: See what you can create with W&B - https://app.wandb.ai/gallery

- Continue the conversation in our Slack community - http://bit.ly/wandb-forum

In this video, Rasa Developer Advocate Rachael talks about what GPT-3 is, what it can be used to do, and some of its drawbacks and limitations.

- “Rasa Reading Group: GPT3 (Part 1)” https://youtu.be/I8IHla4cXHk

- "Rasa Reading Group: GPT3 (Part 2)" https://www.youtube.com/watch?v=9sL08TCh7T8

- “GPT-3: Careful First Impressions” by Vincent Warmerdam https://blog.rasa.com/gpt-3-ca....reful-first-impressi

If you want to reach out to us, feel free to leave a message at our community forum: https://forum.rasa.com/.

The Memo: https://lifearchitect.ai/memo/

====

AI Report Card: https://lifearchitect.ai/report-card/

GPT-3 paper: https://arxiv.org/abs/2005.14165

Other papers: https://lifearchitect.ai/papers

Mid-2022 report + video: https://lifearchitect.ai/the-sky-is-bigger/

What's in my AI: https://lifearchitect.ai/whats-in-my-ai/

====

Read more: https://lifearchitect.ai/

https://lifearchitect.ai/models/

Dr Alan D. Thompson is a world expert in artificial intelligence (AI), specialising in the augmentation of human intelligence, and advancing the evolution of ‘integrated AI’. Alan’s applied AI research and visualisations are featured across major international media, including citations in the University of Oxford’s debate on AI Ethics in December 2021.

https://lifearchitect.ai/

Music:

Under licence.

Liborio Conti - Looking Forward (The Memo outro)

https://no-copyright-music.com/

ChatGPT is a variant of the GPT (Generative Pre-trained Transformer) language model specifically designed for chatbot applications. It was developed by OpenAI and is trained to generate human-like text responses given a prompt or context. It can be used to build chatbots that can carry on conversations with users in a natural and engaging way.

This video demonstrates a few things that can be done with ChatGPT, explained in Tamil, particularly with respect to coding: fixing bugs, explaining code, generating methods, etc.

ChatGPT link - https://chat.openai.com/chat

GitHub code link - https://github.com/LogicFirstTamil

---------------------------------------- courses and playlists --------------------------------------------------

HTML and CSS: https://www.youtube.com/playli....st?list=PLYM2_EX_xVv

SQL: https://www.youtube.com/playli....st?list=PLYM2_EX_xVv

DS and ALGO in C/CPP: https://www.youtube.com/watch?v=QQBVNwA9rDI&list=PLYM2_EX_xVvVMXkQt4qqosJTplBq5v5oX

DS and ALGO in Java: https://www.youtube.com/watch?v=t2U989oaI1Q&list=PLYM2_EX_xVvX7_AmNY-Deacp3rT3MIXnE

Python Full Course with game: https://www.youtube.com/watch?v=BiDOehqG68g

Java Playlist: https://www.youtube.com/playli....st?list=PLYM2_EX_xVv

Java one video: https://www.youtube.com/watch?v=qOWPCPCDRZs&t=2751s

C Interview program playlist: https://www.youtube.com/playli....st?list=PLYM2_EX_xVv

C programming in one video: https://www.youtube.com/watch?v=JAy56OH58Y4&t=17269s

C programming playlist: https://www.youtube.com/watch?v=xKGg8UfR0mM&list=PLYM2_EX_xVvU7N5Lcp1SjKnGN5e6riUoj

C++ Playlist link: https://www.youtube.com/playli....st?list=PLYM2_EX_xVv

English channel link: https://www.youtube.com/channe....l/UCFhHB5_2UkzB4V5iL

github: https://github.com/krishnaik06..../Huggingfacetransfor

In this tutorial, we will show you how to fine-tune a pretrained model from the Transformers library. In TensorFlow, models can be directly trained using Keras and the fit method. In PyTorch, there is no generic training loop so the 🤗 Transformers library provides an API with the class Trainer to let you fine-tune or train a model from scratch easily.
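To make concrete what a "generic training loop" has to do, here is a minimal sketch in plain Python. It is not the `Trainer` API and it does not use PyTorch or TensorFlow: the `fit` function, the linear model, and the toy data are all assumptions made for illustration. It only shows the steps that such an API wraps: iterate over epochs, shuffle the data, compute a prediction, and update the parameters from the error.

```python
import random

def fit(xs, ys, epochs=300, lr=0.05, seed=0):
    """Hypothetical helper: fit y ~ w * x + b with stochastic gradient descent."""
    rng = random.Random(seed)
    w, b = 0.0, 0.0
    data = list(zip(xs, ys))
    for _ in range(epochs):
        rng.shuffle(data)              # visit examples in a new order each epoch
        for x, y in data:
            err = (w * x + b) - y      # forward pass and error
            w -= lr * err * x          # gradient of 0.5 * err**2 with respect to w
            b -= lr * err              # gradient of 0.5 * err**2 with respect to b
    return w, b

# Toy, noise-free data generated from y = 2x + 1 (made up for this sketch).
xs = [0.0, 1.0, 2.0, 3.0, 4.0]
ys = [2.0 * x + 1.0 for x in xs]
w, b = fit(xs, ys)
print(f"w={w:.3f}, b={b:.3f}")
```

A library-level training API adds batching, evaluation, checkpointing, and device handling on top of this same basic loop.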

---------------------------------------------------------------------------------------------------------------------------------------------------------------

⭐ Kite is a free AI-powered coding assistant that will help you code faster and smarter. The Kite plugin integrates with all the top editors and IDEs to give you smart completions and documentation while you’re typing. I've been using Kite for a few months and I love it! https://www.kite.com/get-kite/?utm_medium=referral&utm_source=youtube&utm_campaign=krishnaik&utm_content=description-only

Subscribe to my vlogging channel

https://www.youtube.com/channe....l/UCjWY5hREA6FFYrthD

Please donate through GPay (UPI ID below) if you want to support the channel:

Gpay: krishnaik06@okicici

Telegram link: https://t.me/joinchat/N77M7xRvYUd403DgfE4TWw

Please join my channel as a member to get additional benefits such as Data Science materials, members-only live streams, and more

https://www.youtube.com/channe....l/UCNU_lfiiWBdtULKOw

Connect with me here:

Twitter: https://twitter.com/Krishnaik06

Facebook: https://www.facebook.com/krishnaik06

instagram: https://www.instagram.com/krishnaik06

GPT-3 is a highly capable deep learning model for NLP. To understand GPT-3 or later versions, we should first understand its fundamentals. This video covers those basics: what came before the Transformer, a summary of the Transformer, and the fundamentals of GPT as seen in GPT-1.

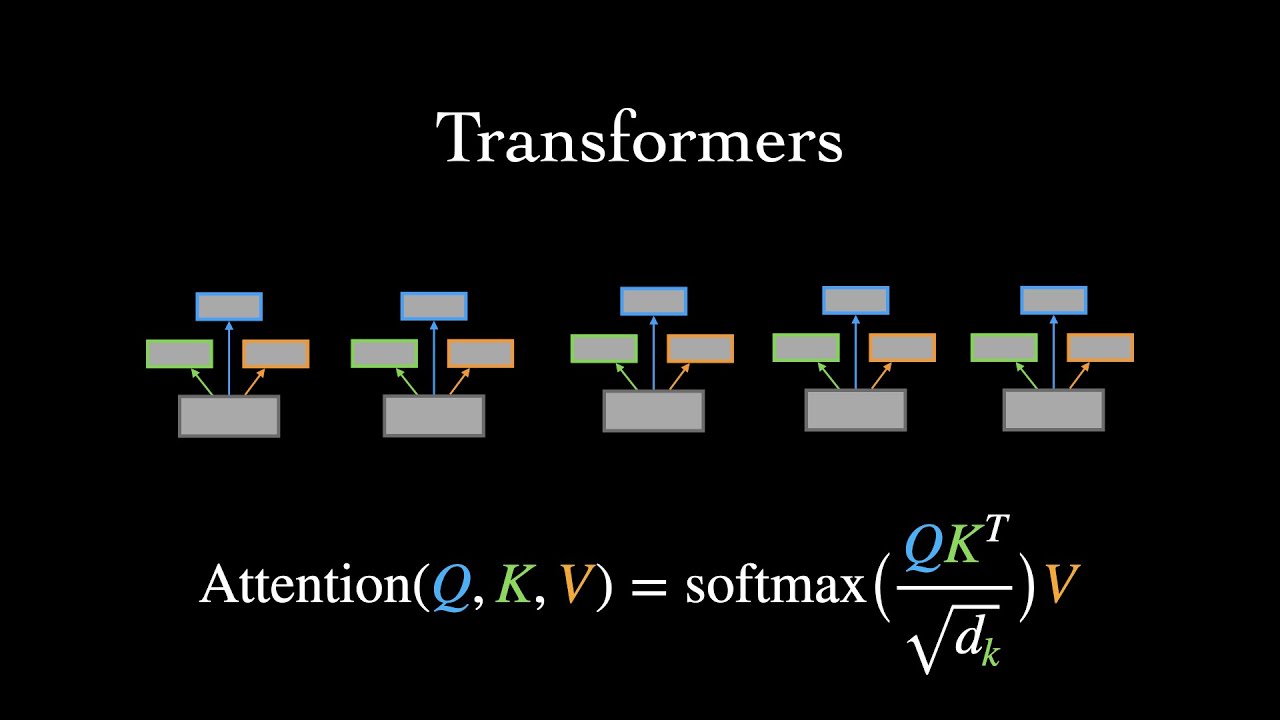

This short tutorial covers the basics of the Transformer, a neural network architecture designed for handling sequential data in machine learning.

Timestamps:

0:00 - Intro

1:18 - Motivation for developing the Transformer

2:44 - Input embeddings (start of encoder walk-through)

3:29 - Attention

6:29 - Multi-head attention

7:55 - Positional encodings

9:59 - Add & norm, feedforward, & stacking encoder layers

11:14 - Masked multi-head attention (start of decoder walk-through)

12:35 - Cross-attention

13:38 - Decoder output & prediction probabilities

14:46 - Complexity analysis

16:00 - Transformers as graph neural networks
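The attention and masked-attention steps listed in the timestamps can be sketched in a few lines of plain Python. This is a minimal single-head illustration based on the scaled dot-product formula from "Attention is All You Need", not code from the video. The function names and the toy input vectors are assumptions, and the learned query/key/value projections are omitted for brevity.

```python
import math

def softmax(scores):
    """Numerically stable softmax over a list of floats."""
    m = max(scores)
    exps = [math.exp(s - m) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]

def attention(queries, keys, values, causal=False):
    """Scaled dot-product attention: softmax(Q K^T / sqrt(d_k)) V.

    With causal=True, position i may only attend to positions j <= i,
    which is the masking used in the decoder's self-attention.
    """
    d_k = len(keys[0])
    outputs = []
    for i, q in enumerate(queries):
        scores = []
        for j, k in enumerate(keys):
            if causal and j > i:
                scores.append(float("-inf"))   # masked: weight becomes 0
            else:
                dot = sum(a * b for a, b in zip(q, k))
                scores.append(dot / math.sqrt(d_k))
        weights = softmax(scores)
        # Each output is a weighted average of the value vectors.
        outputs.append([
            sum(w * v[c] for w, v in zip(weights, values))
            for c in range(len(values[0]))
        ])
    return outputs

# Toy sequence of three 2-dimensional token vectors (made-up values).
x = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]
full = attention(x, x, x)
masked = attention(x, x, x, causal=True)
print(full)
print(masked)
```

Multi-head attention runs several such computations in parallel on different learned projections of the input and concatenates the results.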

Original Transformers paper:

Attention is All You Need - https://arxiv.org/abs/1706.03762

Other papers mentioned:

(GPT-3) Language Models are Few-Shot Learners - https://arxiv.org/abs/2005.14165

(DALL-E) Zero-Shot Text-to-Image Generation - https://arxiv.org/abs/2102.12092

BERT: Pre-training of Deep Bidirectional Transformers for Language Understanding - https://arxiv.org/abs/1810.04805

Switch Transformers: Scaling to Trillion Parameter Models with Simple and Efficient Sparsity - https://arxiv.org/abs/2101.03961

Finetuning Pretrained Transformers into RNNs - https://arxiv.org/abs/2103.13076

Efficient Transformers: A Survey - https://arxiv.org/abs/2009.06732

Attention is Not All You Need: Pure Attention Loses Rank Doubly Exponentially with Depth - https://arxiv.org/abs/2103.03404

Do Transformer Modifications Transfer Across Implementations and Applications? - https://arxiv.org/abs/2102.11972

Gradient Flow in Recurrent Nets: the Difficulty of Learning Long-Term Dependencies - https://ml.jku.at/publications/older/ch7.pdf

Transformers are Graph Neural Networks (blog post) - https://thegradient.pub/transf....ormers-are-graph-neu

Video style inspired by 3Blue1Brown

Music: Trinkets by Vincent Rubinetti

Links:

YouTube: https://www.youtube.com/ariseffai

Twitter: https://twitter.com/ari_seff

Homepage: https://www.ariseff.com

If you'd like to help support the channel (completely optional), you can donate a cup of coffee via the following:

Venmo: https://venmo.com/ariseff

PayPal: https://www.paypal.me/ariseff

14 cool applications recently built on top of OpenAI's GPT-3 (Generative Pre-trained Transformer) API (currently in private beta).

—

► Remember to Like, Comment, and Subscribe!

—

Connect with me:

Twitter - http://www.twitter.com/bakztfuture

Instagram - http://www.instagram.com/bakztfuture

Github - http://www.github.com/bakztfuture

Feel free to send me an email or just say hello:

bakztfuture@gmail.com

AI Papers Reading and Coding - Transformer: Attention Is All You Need

Today we will read and implement the paper Transformer: Attention Is All You Need. This video explains the next part: the Decoder. One well-known application of the Transformer Decoder is the GPT generative model; you can generate an image of an avocado from a sentence. :D

This is the backbone model for current Deep Learning models in natural language processing.

Paper code: https://github.com/bangoc123/transformer

This content is part of the Papers-Videos-Code video series: https://protonx.ai/papers-videos-code/

Want to get your hands on GPT-3 but can't be bothered waiting for access?

Need to kick off some AI powered text gen ASAP?

Want to write a love song using AI?

I got you!

In this video, you'll learn how to leverage GPT Neo, a clone of the GPT-3 architecture with 2.7 BILLION parameters, to generate text and code. You'll learn how to get set up and leverage the model for a whole range of use cases in just 4 lines of code!

In this video you’ll learn how to:

1. Install GPT Neo, a 2.7B-parameter language model

2. Generate Python Code using GPT Neo

3. Generate text using GPT Neo and Hugging Face Transformers

GET THE CODE FROM THE VIDEO: https://github.com/nicknochnack/GPTNeo

Code from the previous tutorial:

https://github.com/nicknochnack/MediaPipeHandPose

Chapters:

0:00 - Start

1:15 - How it Works

2:45 - Install PyTorch and Transformers

5:08 - Setup GPT Neo Pipeline

8:08 - Generate Text from a Prompt

14:46 - Export Text to File

17:48 - Wrap Up

Oh, and don't forget to connect with me!

LinkedIn: https://bit.ly/324Epgo

Facebook: https://bit.ly/3mB1sZD

GitHub: https://bit.ly/3mDJllD

Patreon: https://bit.ly/2OCn3UW

Join the Discussion on Discord: https://bit.ly/3dQiZsV

Happy coding!

Nick

P.s. Let me know how you go and drop a comment if you need a hand!

The Simple Transformers library is built on the Transformers library by Hugging Face. Simple Transformers lets you quickly train and evaluate Transformer models. Only 3 lines of code are needed to initialize a model, train it, and evaluate it.

github: https://github.com/krishnaik06/Trnasformer-Bert

simple transformer: https://simpletransformers.ai/docs/qa-specifics/

simple transformer github: https://github.com/ThilinaRaja....pakse/simpletransfor


AI/ML has been witnessing a rapid acceleration in model improvement in the last few years. The majority of the state-of-the-art models in the field are based on the Transformer architecture. Examples include models like BERT (which when applied to Google Search, resulted in what Google calls "one of the biggest leaps forward in the history of Search") and OpenAI's GPT2 and GPT3 (which are able to generate coherent text and essays).

This video by the author of the popular "Illustrated Transformer" guide will introduce the Transformer architecture and its various applications. This is a visual presentation accessible to people with various levels of ML experience.

Intro (0:00)

The Architecture of the Transformer (4:18)

Model Training (7:11)

Transformer LM Component 1: FFNN (10:01)

Transformer LM Component 2: Self-Attention (12:27)

Tokenization: Words to Token Ids (14:59)

Embedding: Breathe meaning into tokens (19:42)

Projecting the Output: Turning Computation into Language (24:11)

Final Note: Visualizing Probabilities (25:51)
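The last four chapters (tokenization, embedding, output projection, probabilities) can be traced end to end with a toy example. This is a minimal sketch in plain Python, not the notebook from the video: the five-word vocabulary, the embedding values, and the helper names are invented for illustration, the transformer blocks themselves are skipped, and the output projection is assumed to reuse the embedding table (weight tying).

```python
import math

# Hypothetical toy vocabulary; real models use tens of thousands of subword tokens.
vocab = ["<unk>", "the", "robot", "must", "obey"]
token_to_id = {tok: i for i, tok in enumerate(vocab)}

def tokenize(text):
    """Words to token ids; unknown words map to id 0."""
    return [token_to_id.get(word, 0) for word in text.lower().split()]

# Embedding table: one small vector per token id (made-up values).
embeddings = [
    [0.0, 0.0],  # <unk>
    [0.1, 0.3],  # the
    [0.9, 0.2],  # robot
    [0.4, 0.8],  # must
    [0.7, 0.7],  # obey
]

def project_to_logits(hidden):
    """Score every vocabulary entry by its dot product with the hidden state."""
    return [sum(h * e for h, e in zip(hidden, emb)) for emb in embeddings]

def softmax(logits):
    """Turn raw scores into probabilities that sum to 1."""
    m = max(logits)
    exps = [math.exp(x - m) for x in logits]
    total = sum(exps)
    return [e / total for e in exps]

ids = tokenize("The robot must")
# Stand-in for the transformer blocks: use the last token's embedding as the hidden state.
hidden = embeddings[ids[-1]]
probs = softmax(project_to_logits(hidden))

for tok, p in sorted(zip(vocab, probs), key=lambda pair: -pair[1]):
    print(f"{tok:>6}: {p:.3f}")
```

In a real language model the hidden state comes out of the stacked self-attention and feed-forward layers, and the next token is then chosen or sampled from these probabilities.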

The Illustrated Transformer:

https://jalammar.github.io/ill....ustrated-transformer

Simple transformer language model notebook:

https://github.com/jalammar/ja....lammar.github.io/blo

Philosophers On GPT-3 (updated with replies by GPT-3):

https://dailynous.com/2020/07/....30/philosophers-gpt-

-----

Twitter: https://twitter.com/JayAlammar

Blog: https://jalammar.github.io/

Mailing List: http://eepurl.com/gl0BHL

More videos by Jay:

Jay's Visual Intro to AI

https://www.youtube.com/watch?v=mSTCzNgDJy4

How GPT-3 Works - Easily Explained with Animations

https://www.youtube.com/watch?v=MQnJZuBGmSQ

Why didn't OpenAI release their "Unicorn" GPT-2 large transformer? Rob Miles suggests why it might not just be a PR stunt.

Unicorn AI: https://youtu.be/89A4jGvaaKk

Unicorn AI (More Examples): https://youtu.be/p-6F4rhRYLQ

Generative Adversarial Networks (GANs): https://www.youtube.com/watch?v=5oXyibEgJr0

More from Rob Miles: http://bit.ly/Rob_Miles_YouTube

Thanks to Nottingham Hackspace for providing the filming location: http://bit.ly/notthack

https://www.facebook.com/computerphile

https://twitter.com/computer_phile

This video was filmed and edited by Sean Riley.

Computer Science at the University of Nottingham: https://bit.ly/nottscomputer

Computerphile is a sister project to Brady Haran's Numberphile. More at http://www.bradyharan.com

Comparing the recently released GPT-J-6B from EleutherAI and Curie from OpenAI on a few supposedly simple prompts.

__________

My courses:

Neural Networks with Tensorflow: http://bit.ly/tensorflownets

Machine Learning with Scikit-Learn and Python: http://bit.ly/mlpractical

Artificial Neural Nets with Neurolab: https://bit.ly/artificialnets

Use AI to enhance your work: http://bit.ly/aitotherescue

Training and coaching and contract work:

https://cristivlad.com/contact

Connect with me:

Linkedin: https://www.linkedin.com/in/cristivlad/

Twitter: @cristivlad25

Support this channel:

https://www.buymeacoffee.com/cristivlad

https://www.patreon.com/cristivlad

Coupons:

Paperspace credit: https://paperspace.io/&R=FMXH1BN

DigitalOcean credit: https://m.do.co/c/efe4365e60bd

__________

This video is for educational purposes only.

__________

At the time of publishing the video, all non-original content has been appropriately cited and referenced.

However, if copyright or other issues arise at any later time, please reach out to me first before filing a claim with YouTube and I will gladly work towards solving these issues, whatever they may be.

Contact details are on the channel's "About" page!

Please consider "fair use" before filing a claim. Thank You!