Learning

#graphgpt #gpt3 #machinelearning

GraphGPT converts unstructured natural language into a knowledge graph. Pass in the synopsis of your favorite movie, a passage from a confusing Wikipedia page, or a transcript from a video to generate a graph visualization of entities and their relationships. A classic use case of prompt engineering.

Github: https://github.com/varunshenoy/GraphGPT

Demo: https://graphgpt.vercel.app/
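A minimal sketch of the graph-building step, assuming the model has been prompted to return a JSON list of `[entity, relationship, entity]` triples. That output format, the sample triples and the `build_graph` helper are hypothetical illustrations, not GraphGPT's actual prompt or code.

```python
import json
from collections import defaultdict

# Hypothetical model output: a JSON list of [subject, relation, object] triples.
# The exact format GraphGPT asks the model for may differ; this is an assumption.
llm_output = '''[
  ["Frodo", "carries", "the One Ring"],
  ["Frodo", "friend of", "Sam"],
  ["Sauron", "forged", "the One Ring"]
]'''


def build_graph(triples_json: str) -> dict[str, list[tuple[str, str]]]:
    """Turn a JSON list of triples into an adjacency list: node -> [(relation, node)]."""
    graph: dict[str, list[tuple[str, str]]] = defaultdict(list)
    for subject, relation, obj in json.loads(triples_json):
        graph[subject].append((relation, obj))
        graph.setdefault(obj, [])  # make sure every object also appears as a node
    return dict(graph)


graph = build_graph(llm_output)
for node, edges in graph.items():
    for relation, target in edges:
        print(f"{node} --{relation}--> {target}")
```

A visualization library would then draw each node and labelled edge from this adjacency list.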

Enjoy reading articles? Then consider subscribing to a Medium membership: it's just $5 a month for unlimited access to all free and paid content.

Subscribe now - https://prakhar-mishra.medium.com/membership

*********************************************

If you would like to support me financially (totally optional and voluntary) :) ❤️

You can consider buying me a chai (because I don't drink coffee :)) at https://www.buymeacoffee.com/TechvizCoffee

*********************************************

⏩ IMPORTANT LINKS

Research Paper Summaries: https://www.youtube.com/watch?v=ykClwtoLER8&list=PLsAqq9lZFOtWUz1WEoJ3GXw197LD7BxMc

*********************************************

⏩ Youtube - https://youtube.com/channel/UC....oz8NrwgL7U9535VNc0mR

⏩ LinkedIn - https://linkedin.com/in/prakhar21

⏩ Medium - https://medium.com/@prakhar.mishra

⏩ GitHub - https://github.com/prakhar21

*********************************************

⏩ Please feel free to share out the content and subscribe to my channel - https://youtube.com/channel/UC....oz8NrwgL7U9535VNc0mR?sub_confirmation=1

Tools I use for making videos :)

⏩ iPad - https://tinyurl.com/y39p6pwc

⏩ Apple Pencil - https://tinyurl.com/y5rk8txn

⏩ GoodNotes - https://tinyurl.com/y627cfsa

#techviz #datascienceguy #deeplearning #ai #transformers #knowledgegraph #promptengineering

In this video, I go over how to download and run GPT Neo, EleutherAI's open-source GPT-3-style model. This model has 2.7 billion parameters, the same size as the GPT-3 2.7B variant described in the GPT-3 paper. The results are very good and a large improvement over GPT-2. I am excited to experiment more with this model and to see what even larger NLP models bring in the future.

Notebook: https://github.com/mallorbc/GPTNeo_notebook

GPT Neo GitHub: https://github.com/EleutherAI/gpt-neo (use the first release tag)

GPT Neo Hugging Face docs: https://huggingface.co/transfo....rmers/model_doc/gpt_

A useful article about text generation parameters in Transformers: https://huggingface.co/blog/how-to-generate
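The linked article covers decoding strategies such as temperature, top-k and top-p (nucleus) sampling, which in 🤗 Transformers are controlled by the `temperature`, `top_k` and `top_p` arguments to `generate()` when `do_sample=True`. Below is a plain-Python sketch of what those filters do to a single next-token distribution; the five-word vocabulary and the logits are made up for illustration.

```python
import math
import random


def softmax(logits, temperature=1.0):
    """Convert raw scores to probabilities; a lower temperature sharpens the distribution."""
    scaled = [x / temperature for x in logits]
    m = max(scaled)  # subtract the max for numerical stability
    exps = [math.exp(x - m) for x in scaled]
    total = sum(exps)
    return [e / total for e in exps]


def top_k_filter(probs, k):
    """Keep only the k most likely token ids and renormalise."""
    keep = sorted(range(len(probs)), key=lambda i: probs[i], reverse=True)[:k]
    total = sum(probs[i] for i in keep)
    return {i: probs[i] / total for i in keep}


def top_p_filter(probs, p):
    """Keep the smallest set of most likely token ids whose cumulative probability reaches p."""
    order = sorted(range(len(probs)), key=lambda i: probs[i], reverse=True)
    kept, cumulative = {}, 0.0
    for i in order:
        kept[i] = probs[i]
        cumulative += probs[i]
        if cumulative >= p:
            break
    total = sum(kept.values())
    return {i: q / total for i, q in kept.items()}


def sample(filtered, rng):
    """Draw one token id from a {token_id: probability} mapping."""
    ids = list(filtered)
    return rng.choices(ids, weights=[filtered[i] for i in ids], k=1)[0]


vocab = ["the", "a", "cat", "dog", "banana"]
logits = [2.0, 1.5, 1.0, 0.5, -1.0]
probs = softmax(logits, temperature=0.7)
rng = random.Random(0)
print(vocab[sample(top_k_filter(probs, k=3), rng)])
print(vocab[sample(top_p_filter(probs, p=0.9), rng)])
```

Both filters discard the unlikely tail (here "banana") before sampling, which is why they tend to reduce incoherent output compared with sampling from the full distribution.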

00:00 - GPT3 Background

01:07 - GPT3 Interview

02:06 - GPT Neo Github

03:14 - GPT Neo HuggingFace

03:52 - Setting up Anaconda and Jupyter

05:05 - Starting the Jupyter notebook

06:14 - Installing dependencies in the notebook

06:58 - Importing needed dependencies

07:33 - Selecting what GPT model to use

08:45 - Checking our computer hardware

09:36 - Loading the tokenizer

09:55 - Giving our inputs

10:39 - Generating the tokens with the model

11:42 - Decoding and reading the result

13:22 - Reflections on Transformers

14:26 - Outro, questions, and future work
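The core steps in the walkthrough (load a tokenizer, encode the input, generate tokens, decode the result) can be sketched without downloading a model. In this sketch a hypothetical bigram lookup table stands in for GPT Neo and greedy decoding picks the next word; a real model scores sub-word tokens with a neural network instead.

```python
# Toy stand-in for a language model: a hypothetical table giving the most likely
# next word for each word. GPT Neo instead computes a probability distribution
# over roughly 50k sub-word tokens at every step.
NEXT_WORD = {
    "<s>": "the",
    "the": "model",
    "model": "writes",
    "writes": "text",
    "text": "</s>",
}

VOCAB = sorted(set(NEXT_WORD) | set(NEXT_WORD.values()))
TOKEN_TO_ID = {tok: i for i, tok in enumerate(VOCAB)}
ID_TO_TOKEN = {i: tok for tok, i in TOKEN_TO_ID.items()}


def encode(text):
    """Tokenizer step: words -> integer ids."""
    return [TOKEN_TO_ID[w] for w in text.split()]


def decode(ids):
    """Decoding step: integer ids -> text."""
    return " ".join(ID_TO_TOKEN[i] for i in ids)


def generate(input_ids, max_new_tokens=10):
    """Greedy autoregressive loop: repeatedly append the most likely next token."""
    ids = list(input_ids)
    for _ in range(max_new_tokens):
        next_token = NEXT_WORD.get(ID_TO_TOKEN[ids[-1]])
        if next_token is None:
            break
        ids.append(TOKEN_TO_ID[next_token])
        if next_token == "</s>":  # stop at the end-of-sequence marker
            break
    return ids


prompt_ids = encode("<s> the")
print(decode(generate(prompt_ids)))
```

Each generated token is fed back as input for the next step, which is the same loop a real causal language model runs inside `generate()`.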

#gpt3 #openai #gpt-3

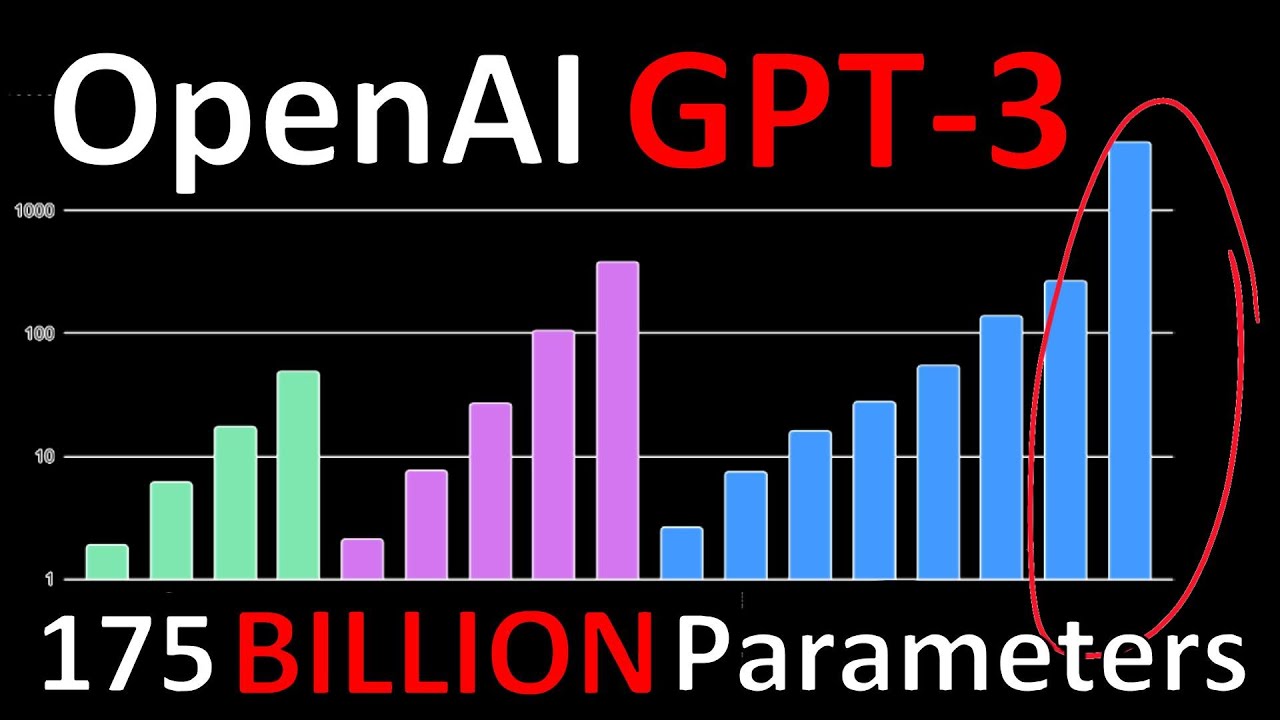

How far can you go with ONLY language modeling? Can a large enough language model perform NLP tasks out of the box? OpenAI tackles these and other questions by training a 175-billion-parameter transformer, 10x larger than any previous non-sparse language model, and the results are astounding.

OUTLINE:

0:00 - Intro & Overview

1:20 - Language Models

2:45 - Language Modeling Datasets

3:20 - Model Size

5:35 - Transformer Models

7:25 - Fine Tuning

10:15 - In-Context Learning

17:15 - Start of Experimental Results

19:10 - Question Answering

23:10 - What I think is happening

28:50 - Translation

31:30 - Winograd Schemes

33:00 - Commonsense Reasoning

37:00 - Reading Comprehension

37:30 - SuperGLUE

40:40 - NLI

41:40 - Arithmetic Expressions

48:30 - Word Unscrambling

50:30 - SAT Analogies

52:10 - News Article Generation

58:10 - Made-up Words

1:01:10 - Training Set Contamination

1:03:10 - Task Examples

https://arxiv.org/abs/2005.14165

https://github.com/openai/gpt-3

Abstract:

Recent work has demonstrated substantial gains on many NLP tasks and benchmarks by pre-training on a large corpus of text followed by fine-tuning on a specific task. While typically task-agnostic in architecture, this method still requires task-specific fine-tuning datasets of thousands or tens of thousands of examples. By contrast, humans can generally perform a new language task from only a few examples or from simple instructions - something which current NLP systems still largely struggle to do. Here we show that scaling up language models greatly improves task-agnostic, few-shot performance, sometimes even reaching competitiveness with prior state-of-the-art fine-tuning approaches. Specifically, we train GPT-3, an autoregressive language model with 175 billion parameters, 10x more than any previous non-sparse language model, and test its performance in the few-shot setting. For all tasks, GPT-3 is applied without any gradient updates or fine-tuning, with tasks and few-shot demonstrations specified purely via text interaction with the model. GPT-3 achieves strong performance on many NLP datasets, including translation, question-answering, and cloze tasks, as well as several tasks that require on-the-fly reasoning or domain adaptation, such as unscrambling words, using a novel word in a sentence, or performing 3-digit arithmetic. At the same time, we also identify some datasets where GPT-3's few-shot learning still struggles, as well as some datasets where GPT-3 faces methodological issues related to training on large web corpora. Finally, we find that GPT-3 can generate samples of news articles which human evaluators have difficulty distinguishing from articles written by humans. We discuss broader societal impacts of this finding and of GPT-3 in general.
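As the abstract notes, GPT-3 receives the task and its demonstrations purely as text, with no gradient updates. A small sketch of assembling such a few-shot prompt, loosely modelled on the English-to-French example in the paper's figures; the `few_shot_prompt` helper and the `=>` separator are illustrative choices, not part of any OpenAI API.

```python
def few_shot_prompt(task_description, examples, query, separator=" => "):
    """Build an in-context learning prompt: a task description, k solved
    demonstrations, and an unsolved query for the model to complete.
    Passing zero examples gives a zero-shot prompt, one gives one-shot."""
    lines = [task_description]
    lines += [f"{source}{separator}{target}" for source, target in examples]
    lines.append(f"{query}{separator}".rstrip())  # the model continues from here
    return "\n".join(lines)


demos = [("sea otter", "loutre de mer"), ("peppermint", "menthe poivrée")]
prompt = few_shot_prompt("Translate English to French:", demos, "cheese")
print(prompt)
```

The model's completion of the last line is taken as its answer; changing the task only means changing the text, not the weights.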

Authors: Tom B. Brown, Benjamin Mann, Nick Ryder, Melanie Subbiah, Jared Kaplan, Prafulla Dhariwal, Arvind Neelakantan, Pranav Shyam, Girish Sastry, Amanda Askell, Sandhini Agarwal, Ariel Herbert-Voss, Gretchen Krueger, Tom Henighan, Rewon Child, Aditya Ramesh, Daniel M. Ziegler, Jeffrey Wu, Clemens Winter, Christopher Hesse, Mark Chen, Eric Sigler, Mateusz Litwin, Scott Gray, Benjamin Chess, Jack Clark, Christopher Berner, Sam McCandlish, Alec Radford, Ilya Sutskever, Dario Amodei

Links:

YouTube: https://www.youtube.com/c/yannickilcher

Twitter: https://twitter.com/ykilcher

BitChute: https://www.bitchute.com/channel/yannic-kilcher

Minds: https://www.minds.com/ykilcher

In this short clip, Dr. Craig Booth explains what a Large Language Model (LLM) is and how LLMs work. LLMs are the foundational technology behind models like GPT-3 and BERT, as well as behind today's generative AI models.

To watch the complete webinar, go to: https://www.youtube.com/watch?v=LgtDe7ufSRA

In this video I show you how to set up and install GPT4All and create local chatbots with GPT4All and LangChain! Privacy concerns around sending customer and organizational data to OpenAI APIs are a huge issue in AI right now, but local models like GPT4All, LLaMa, Alpaca, etc. can be a viable alternative.

I did some research and figured out how to make your own version of ChatGPT that answers questions about your own data (PDFs, docs, etc.) using only open-source models like GPT4All, which is based on GPT-J or LLaMa depending on the version you use. LangChain has great support for models like these, so in this video we use LangChain to integrate LLaMa embeddings with GPT4All and a FAISS local vector database to store our documents.
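The retrieval idea behind this setup (embed the documents, store the vectors, fetch the closest ones for a question, then hand them to the local model as context) can be sketched in plain Python. Here a hypothetical bag-of-words `embed` function stands in for the LLaMa embeddings and a brute-force `LocalVectorStore` stands in for FAISS; neither reflects LangChain's actual API.

```python
import math
import re
from collections import Counter


def embed(text):
    """Hypothetical stand-in for an embedding model: a bag-of-words count vector."""
    return Counter(re.findall(r"[a-z0-9]+", text.lower()))


def cosine(a, b):
    """Cosine similarity between two sparse count vectors."""
    dot = sum(a[t] * b[t] for t in a.keys() & b.keys())
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(sum(v * v for v in b.values()))
    return dot / norm if norm else 0.0


class LocalVectorStore:
    """Brute-force in-memory index; FAISS plays this role far faster at scale."""

    def __init__(self):
        self.docs = []

    def add(self, text):
        self.docs.append((text, embed(text)))

    def search(self, query, k=1):
        q = embed(query)
        ranked = sorted(self.docs, key=lambda d: cosine(q, d[1]), reverse=True)
        return [text for text, _ in ranked[:k]]


store = LocalVectorStore()
store.add("Our refund policy allows returns within 30 days.")
store.add("The office is closed on public holidays.")

question = "How many days do I have to return an item?"
context = store.search(question, k=1)
# The retrieved text would then be inserted into the prompt sent to the local LLM.
prompt = f"Answer using this context:\n{context[0]}\n\nQuestion: {question}"
print(prompt)
```

Because only the retrieved passages are sent to the model, the documents never leave the machine and the model does not need to be retrained on them.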

My AI Consulting Services 👋🏼

Want to speak with me about your next big project? Book here:

https://calendly.com/liamottley/consulting-call

Join My Newsletter! 🗞️

Get your fix of the latest AI news direct to your inbox:

https://morningsideai.beehiiv.com/

My AI Entrepreneurship Community 📈

Find top shelf co-founders for your next AI venture:

http://discord.gg/dAa78Zj7yj

My Links 🔗

👉🏻 Subscribe: https://www.youtube.com/channe....l/UCui4jxDaMb53Gdh-A

👉🏻 Twitter: https://twitter.com/liamottley_

👉🏻 LinkedIn: https://www.linkedin.com/in/liamottley/

👉🏻 Instagram: https://instagram.com/liamottley

Mentioned in the video:

Code: https://github.com/wombyz/gpt4....all_langchain_chatbo

GPT4ALL (Pre-Converted):

https://huggingface.co/mrgaang..../aira/blob/main/gpt4

Embedding Model:

https://huggingface.co/Pi3141/....alpaca-native-7B-ggm

Mac User Troubleshooting: https://docs.google.com/docume....nt/d/1JDMBTOjbRtJo49

Timestamps:

0:00 - What is GPT4All?

2:28 - Setup & Install

7:13 - LangChain + GPT4All

11:42 - Custom Knowledge Query

19:42 - Chatbot App

24:19 - My Thoughts on GPT4All

About Me 👋🏼

Hi! My name is Liam Ottley and I’m an AI developer and entrepreneur, founder of my own AI development company: Morningside AI (https://morningside.ai). You’ve found my YouTube channel, where I help other entrepreneurs wrap their heads around AI with tutorials on how to get started building AI apps!

With developments in AI moving so fast, I’m constantly working on my own projects and those of clients and sharing my learnings with my viewers 2-3x per week.

I don’t have anything to sell you, my only hope is that one day some of you will learn enough from my videos to see an exciting opportunity in the AI space and want to work with my development company to make it a reality.

In this talk, we will cover the basics of Reinforcement Learning from Human Feedback (RLHF) and how this technology is being used to enable state-of-the-art ML tools like ChatGPT. Most of the talk will be an overview of the interconnected ML models involved, covering the basics of Natural Language Processing and RL that one needs to understand how RLHF is used on large language models. It will conclude with open questions in RLHF.
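Two quantities that commonly appear in descriptions of RLHF are the pairwise loss used to train a reward model on human preference comparisons and the KL-style penalty (discussed in the linked blog post) that keeps the fine-tuned policy close to the original model. The sketch below computes both for single hypothetical scores; the `beta` value of 0.1 is an arbitrary illustrative choice.

```python
import math


def preference_loss(reward_chosen, reward_rejected):
    """Pairwise reward-model loss: -log(sigmoid(r_chosen - r_rejected)).
    It is small when the human-preferred answer already scores higher."""
    margin = reward_chosen - reward_rejected
    # -log(sigmoid(m)) equals softplus(-m); branch on the sign so exp() cannot overflow
    if margin >= 0:
        return math.log1p(math.exp(-margin))
    return -margin + math.log1p(math.exp(margin))


def kl_penalized_reward(reward, logprob_policy, logprob_reference, beta=0.1):
    """Reward during RL fine-tuning: the reward-model score minus beta times
    log pi(y|x) - log pi_ref(y|x), a single-sample estimate of the KL term."""
    return reward - beta * (logprob_policy - logprob_reference)


print(preference_loss(2.0, 0.5))  # preferred answer already ranked higher: small loss
print(preference_loss(0.5, 2.0))  # ranking is reversed: larger loss
print(kl_penalized_reward(1.0, logprob_policy=-2.0, logprob_reference=-2.5))
```

The penalty lowers the reward whenever the policy assigns its output more probability than the reference model did, discouraging it from drifting into text that merely games the reward model.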

RLHF Blogpost: https://huggingface.co/blog/rlhf

The Deep RL Course: https://hf.co/deep-rl-course

Slides from this talk: https://docs.google.com/presen....tation/d/1eI9PqRJTCF

Nathan Twitter: https://twitter.com/natolambert

Thomas Twitter: https://twitter.com/thomassimonini

Nathan Lambert is a Research Scientist at HuggingFace. He received his PhD from the University of California, Berkeley, working at the intersection of machine learning and robotics. He was advised by Professor Kristofer Pister in the Berkeley Autonomous Microsystems Lab and Roberto Calandra at Meta AI Research. He was lucky to intern at Facebook AI and DeepMind during his Ph.D. Nathan was awarded the UC Berkeley EECS Demetri Angelakos Memorial Achievement Award for Altruism for his efforts to better community norms.

Did you ever wonder how to create a BERT or GPT2 tokenizer in your own language or on your own corpus? This video will teach you how to do this with any tokenizer of the 🤗 Transformers library.
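For background, GPT-2's tokenizer is based on byte-level BPE, while BERT's uses WordPiece. The toy sketch below shows the core BPE training loop (count adjacent symbol pairs, merge the most frequent one, repeat) on a tiny word list; it is a simplified illustration, not the 🤗 Tokenizers implementation.

```python
from collections import Counter


def learn_bpe(words, num_merges):
    """Learn BPE merge rules from a list of words.
    Each word starts as a tuple of characters; at each step the most frequent
    adjacent pair of symbols across the corpus is merged into a new symbol."""
    corpus = Counter(tuple(word) for word in words)
    merges = []
    for _ in range(num_merges):
        pairs = Counter()
        for symbols, freq in corpus.items():
            for pair in zip(symbols, symbols[1:]):
                pairs[pair] += freq
        if not pairs:  # every word is already a single symbol
            break
        best = max(pairs, key=pairs.get)
        merges.append(best)
        new_corpus = Counter()
        for symbols, freq in corpus.items():
            merged, i = [], 0
            while i < len(symbols):
                if i + 1 < len(symbols) and (symbols[i], symbols[i + 1]) == best:
                    merged.append(symbols[i] + symbols[i + 1])
                    i += 2
                else:
                    merged.append(symbols[i])
                    i += 1
            new_corpus[tuple(merged)] += freq
        corpus = new_corpus
    return merges


print(learn_bpe(["hug", "hug", "hug", "pug", "hugs"], num_merges=2))
```

Training on your own corpus changes which pairs are frequent, so the learned merges (and therefore the vocabulary) adapt to your language or domain.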

This video is part of the Hugging Face course: http://huggingface.co/course

Open in colab to run the code samples:

https://colab.research.google.....com/github/huggingfa

Related videos:

- Building a new tokenizer: https://youtu.be/MR8tZm5ViWU

Don't have a Hugging Face account? Join now: http://huggingface.co/join

Have a question? Check out the forums: https://discuss.huggingface.co/c/course/20

Subscribe to our newsletter: https://huggingface.curated.co/

This demo shows how to run large AI models from #huggingface on a single GPU without an out-of-memory (OOM) error. Large language models such as OPT-175B or BLOOM-176B often require a very powerful machine or multiple GPUs, but thanks to bitsandbytes, a few tweaks to your code let you run these large models on a single node.

In this tutorial, we run #LLM inference with the 3-billion-parameter BLOOM model (loaded from Hugging Face) on Google Colab (Tesla T4) without OOM.

This is brilliant! Kudos to the team.

bitsandbytes - https://github.com/TimDettmers/bitsandbytes

Google Colab Notebook - https://colab.research.google.....com/drive/1qOjXfQIAU
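The LLM.int8() method in bitsandbytes combines vector-wise absmax quantization with a mixed-precision path that keeps outlier features in 16-bit. A simplified sketch of just the absmax step on a single made-up weight vector, showing how 8-bit weights take one byte each instead of two at the cost of a small rounding error:

```python
def absmax_quantize(values):
    """Scale floats into the int8 range [-127, 127] using the largest absolute value."""
    largest = max(abs(v) for v in values)
    scale = 127 / largest if largest else 1.0  # avoid dividing by zero for an all-zero vector
    return [round(v * scale) for v in values], scale


def dequantize(quantized, scale):
    """Map int8 values back to approximate floats."""
    return [q / scale for q in quantized]


weights = [0.12, -0.5, 0.33, 1.27]  # made-up fp16 weights
q, scale = absmax_quantize(weights)
print(q)                     # [12, -50, 33, 127]
print(dequantize(q, scale))  # close to the originals, with small rounding error
```

A single large outlier inflates the scale and squeezes every other value into few integer levels, which is the problem the mixed-precision outlier handling in LLM.int8() is designed to avoid.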

In this video, I will show you how to install PrivateGPT on your local computer. PrivateGPT uses LangChain to combine GPT4All and LlamaCppEmbeddings for information retrieval from documents in different formats, including PDF, TXT and CSV. The list of supported file types can easily be extended.
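Because the supported file types can be extended, one common way to structure ingestion is a registry that maps file extensions to loader functions. The `LOADERS` table and the loader functions below are hypothetical stand-ins written with the standard library, not PrivateGPT's own code; PDF support would need a PDF-parsing library and is omitted.

```python
import csv
import io
from pathlib import Path


def load_txt(raw: str) -> list[str]:
    """Treat a plain-text file as a single document."""
    return [raw]


def load_csv(raw: str) -> list[str]:
    """One document per CSV row, with fields written as 'column: value' pairs."""
    reader = csv.DictReader(io.StringIO(raw))
    return ["; ".join(f"{k}: {v}" for k, v in row.items()) for row in reader]


# Hypothetical extension -> loader registry; supporting a new type is one entry.
LOADERS = {
    ".txt": load_txt,
    ".csv": load_csv,
}


def load_document(filename: str, raw: str) -> list[str]:
    """Pick a loader by file extension and return the extracted text documents."""
    ext = Path(filename).suffix.lower()
    if ext not in LOADERS:
        raise ValueError(f"Unsupported file extension: {ext}")
    return LOADERS[ext](raw)


LOADERS[".md"] = load_txt  # example extension: treat Markdown as plain text

print(load_document("people.csv", "name,role\nAda,engineer\n"))
```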

LINKS:

PrivateGPT GitHub: https://github.com/imartinez/privateGPT

Open Embeddings Video: https://youtu.be/ogEalPMUCSY

LLM Playlist: https://www.youtube.com/playli....st?list=PLVEEucA9MYh

▬▬▬▬▬▬▬▬▬▬▬▬▬▬ CONNECT ▬▬▬▬▬▬▬▬▬▬▬

☕ Buy me a Coffee: https://ko-fi.com/promptengineering

🔴 Join the Patreon: Patreon.com/PromptEngineering

🦾 Discord: https://discord.com/invite/t4eYQRUcXB

▶️ Subscribe: https://www.youtube.com/@engin....eerprompt?sub_confir

▬▬▬▬▬▬▬▬▬▬▬▬▬▬▬▬▬▬▬▬▬▬▬▬▬▬▬▬▬▬

All Interesting Videos:

Everything LangChain: https://www.youtube.com/playli....st?list=PLVEEucA9MYh

Everything LLM: https://youtube.com/playlist?l....ist=PLVEEucA9MYhNF5-

Everything Midjourney: https://youtube.com/playlist?l....ist=PLVEEucA9MYhMdrd

AI Image Generation: https://youtube.com/playlist?l....ist=PLVEEucA9MYhPVgY

Get notified of the free Python course on the home page at https://www.coursesfromnick.com

Sign up for the Full Stack course here and use YOUTUBE50 to get 50% off:

https://www.coursesfromnick.com/bundl...

Hopefully you enjoyed this video.

💼 Find AWESOME ML Jobs: https://www.jobsfromnick.com

Oh, and don't forget to connect with me!

LinkedIn: https://bit.ly/324Epgo

Facebook: https://bit.ly/3mB1sZD

GitHub: https://bit.ly/3mDJllD

Patreon: https://bit.ly/2OCn3UW

Join the Discussion on Discord: https://bit.ly/3dQiZsV

Happy coding!

Nick

An introduction to the world of LLMs (Large Language Models) as of April 2023, with a detailed explanation of GPT-3.5, GPT-4, T5 and Flan-T5 through to LLaMA, Alpaca and Koala, plus dataset sources and configurations.

Also covered: ICL (in-context learning), adapter fine-tuning, PEFT LoRA and classical fine-tuning of LLMs. Which type of dataset should you choose for which LLM job?
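LoRA freezes a pretrained weight matrix W (d × k) and learns a low-rank update B·A, with B of shape d × r and A of shape r × k, so the effective weight is W + (α/r)·B·A. A plain-Python sketch of that update and of the trainable-parameter savings for one 4096 × 4096 matrix at rank 8 (sizes chosen only for illustration):

```python
def matmul(X, Y):
    """Plain-Python matrix product of nested lists."""
    return [[sum(a * b for a, b in zip(row, col)) for col in zip(*Y)] for row in X]


def lora_weight(W, B, A, alpha, r):
    """Effective weight W + (alpha / r) * B @ A; W stays frozen, only B and A are trained."""
    delta = matmul(B, A)
    scale = alpha / r
    return [[w + scale * d for w, d in zip(w_row, d_row)] for w_row, d_row in zip(W, delta)]


def trainable_params(d, k, r):
    """Parameters updated by full fine-tuning vs. LoRA for one d x k weight matrix."""
    return d * k, r * (d + k)


full, lora = trainable_params(4096, 4096, 8)
print(full, lora, f"{lora / full:.2%}")  # 16777216 65536 0.39%
```

In the LoRA paper B starts at zero, so the adapted model initially behaves exactly like the frozen one; the second test case below checks that property.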

Addendum: the beautiful new open-source "DOLLY 2.0" LLM had not been published at the time of recording, so here is a link to my video explaining Dolly 2.0:

https://youtu.be/kZazs6V3314

A comprehensive LLM/AI ecosystem is essential for the creation and implementation of sophisticated AI applications. It facilitates the efficient processing of large-scale data, the development of complex machine learning models, and the deployment of intelligent systems capable of performing complex tasks.

As the field of AI continues to evolve and expand, the importance of a well-integrated and cohesive AI ecosystem cannot be overstated.

A complete overview of today's LLMs and how you can train them for your needs.

#naturallanguageprocessing #LargeLanguageModels #chatgpttutorial #finetuning #finetune #ai #introduction #overview #chatgpt

Dead simple way to run LLaMA on your computer. - https://cocktailpeanut.github.io/dalai/

LLaMa Model Card - https://github.com/facebookres....earch/llama/blob/mai

LLaMa Announcement - https://ai.facebook.com/blog/l....arge-language-model-

❤️ If you want to support the channel ❤️

Support here:

Patreon - https://www.patreon.com/1littlecoder/

Ko-Fi - https://ko-fi.com/1littlecoder

I am excited to bring you a comprehensive step-by-step guide on how to fine-tune the newly announced 7-billion-parameter MPT-7B model using just a single GPU. This remarkable model is not only open source but also licensed for commercial use, making it a valuable tool for a wide range of natural language processing (NLP) tasks. Its StoryWriter variant supports a 65k-token context window, longer than GPT-4's 32k maximum, and MosaicML reports that MPT-7B compares favorably with other open models such as GPT-J and LLaMA.
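To see why fine-tuning a model of roughly 7 billion parameters on one GPU takes special techniques, a back-of-the-envelope memory estimate helps. The byte counts assume mixed-precision training with Adam (about 16 bytes per parameter, the accounting used in the ZeRO paper) and ignore activations; they say nothing about the specific method used in the linked notebook.

```python
# Rough per-parameter byte counts. Activations, KV cache and framework
# overhead are ignored, so real usage is higher than these figures.
BYTES_PER_PARAM = {
    "inference_fp16": 2,  # fp16/bf16 weights only
    "inference_int8": 1,  # 8-bit quantized weights only
    # fp16 weights + fp16 gradients + fp32 master weights + two fp32 Adam moments
    "full_finetune_adam_mixed": 16,
}


def memory_gb(num_params, mode):
    """Approximate memory in GB (1e9 bytes) for a given number of parameters."""
    return num_params * BYTES_PER_PARAM[mode] / 1e9


for mode in BYTES_PER_PARAM:
    print(f"{mode:>26}: {memory_gb(7e9, mode):6.1f} GB")
```

Full fine-tuning lands far above a single consumer or Colab GPU, which is why single-GPU recipes typically rely on tricks such as quantization or training only a small set of adapter weights.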

Don't forget to subscribe to our channel and hit the notification bell to stay updated with the latest tutorials and developments in the field of AI. Let's dive in and empower your AI projects with the limitless potential of MPT-7B!

Other commercializable LLM: https://github.com/eugeneyan/open-llms

Notebook: https://github.com/antecessor/mpt_7b_fine_tuning

LangChain: https://github.com/hwchase17/langchain

Large language models (LLMs) are emerging as a transformative technology, enabling developers to build applications that they previously could not. But using these LLMs in isolation is often not enough to create a truly powerful app - the real power comes when you can combine them with other sources of computation or knowledge.

This library is aimed at assisting in the development of those types of applications. Common examples of these types of applications include:

GPT Index: https://github.com/jerryjliu/gpt_index

Context

LLMs are a phenomenal piece of technology for knowledge generation and reasoning.

A big limitation of LLMs is context size (e.g. Davinci's limit is 4096 tokens. Large, but not infinite).

The ability to feed "knowledge" to LLMs is restricted to this limited prompt size and model weights.

Proposed Solution

At its core, GPT Index contains a toolkit of index data structures designed to easily connect LLMs with your external data. GPT Index provides the following advantages:

Remove concerns over prompt size limitations.

Abstract common usage patterns to reduce boilerplate code in your LLM app.

Provide data connectors to your common data sources (Google Docs, Slack, etc.).

Provide cost transparency + tools that reduce cost while increasing performance.
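Working within a fixed context window (for example, Davinci's 4096 tokens) usually means splitting long documents into chunks and sending only the relevant ones to the model. A minimal chunking sketch, assuming whitespace-separated words as a rough proxy for tokens (real tokenizers count sub-word tokens, so budgets should leave headroom); `chunk_words` is a hypothetical helper, not part of GPT Index.

```python
def chunk_words(text, max_tokens, overlap=0):
    """Split text into chunks of at most max_tokens 'tokens', repeating `overlap`
    tokens between consecutive chunks so context is not cut off mid-thought.
    Whitespace-separated words stand in for real sub-word tokens here."""
    if not 0 <= overlap < max_tokens:
        raise ValueError("overlap must be in [0, max_tokens)")
    words = text.split()
    step = max_tokens - overlap
    chunks = []
    for start in range(0, len(words), step):
        chunks.append(" ".join(words[start:start + max_tokens]))
        if start + max_tokens >= len(words):  # the last chunk reached the end
            break
    return chunks


document = "word " * 10000
chunks = chunk_words(document, max_tokens=500, overlap=50)
print(len(chunks), len(chunks[0].split()))
```

An index then stores these chunks so that, at query time, only the few most relevant ones are placed into the prompt alongside the question.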

❤️ If you want to support what we are doing ❤️

Support here:

Patreon - https://www.patreon.com/1littlecoder/

Ko-Fi - https://ko-fi.com/1littlecoder

If you've been using ChatGPT, you already know the mind-blowing potential A.I. has! But ChatGPT only scratches the surface. There is so much more we can do with A.I. to make us more productive and, at the end of the day, generate more revenue.

Let me show you 5 ways to use A.I. to run your online business.

00:00 Introduction

01:31 Idea Generation (ChatGPT)

03:19 Script Writing (GPT-3)

06:12 Creating Content (FeedHive)

08:46 AI Generated Thumbnails (Stable Diffusion)

11:57 Writing Software (ChatGPT + GitHub Copilot)

14:03 What's Next?

#chatgpt #gpt3 #feedhive #stablediffusion #ai #makingmoneyonline

------------------------------------------------------------------------------------------------

⭐ Try FeedHive

Try FeedHive for FREE for 7 days using the link below.

When you sign up, use this promo code:

→ FHYT25

This will give you 25% OFF on any plan in the first year.

Get started here → https://simonl.ink/feedhive25off

------------------------------------------------------------------------------------------------

Resources:

Learn how to fine-tune a GPT-3 model:

https://simonl.ink/gpt3finetuning

Learn how to train a Stable Diffusion model:

https://simonl.ink/stablediffusiontraining

------------------------------------------------------------------------------------------------

Check out these videos next:

7 AI SaaS Ideas You Can Start In 2023

https://www.youtube.com/watch?v=Hdp30KBXK40

5 Smart SaaS Pricing Hacks to Increase MRR

https://www.youtube.com/watch?v=AbVwuTXpf6E

------------------------------------------------------------------------------------------------

Follow me here for more content:

✉️ NEWSLETTER ‧ https://simonl.ink/newsletter

📱 TIKTOK ‧ https://simonl.ink/tiktok

📷 INSTAGRAM ‧ https://simonl.ink/instagram

🐦 TWITTER ‧ https://simonl.ink/twitter

🌐 LINKEDIN ‧ https://simonl.ink/linkedin

![How to Create LOCAL Chatbots with GPT4All and LangChain [Full Guide]](https://i.ytimg.com/vi/4p1Fojur8Zw/maxresdefault.jpg)

![PrivateGPT: Chat to your FILES OFFLINE and FREE [Installation and Tutorial]](https://i.ytimg.com/vi/G7iLllmx4qc/maxresdefault.jpg)