Learning

GPT-J-6B: just like GPT-3, but you can actually download the weights and run it at home. No API sign-up required, unlike some other models we could mention, right? And, just like GPT-3, it is easy to try; here are 3 super easy ways to run it: via the EleutherAI website, on a Google Colab TPU, or on a single GPU on a local system.

Github: https://github.com/kingoflolz/mesh-transformer-jax

Requirements for running this guide locally:

* Ubuntu 20.04

* 32 GB RAM and an Nvidia GPU with at least 24 GB VRAM

* Anaconda - https://www.anaconda.com/products/individual
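A rough back-of-the-envelope check on the VRAM requirement: the weights alone take about `parameters × bytes per parameter`. This sketch assumes GPT-J has roughly 6 billion parameters (the "6B" in its name) and ignores activations, caches and framework overhead; the helper name `weight_memory_gib` is hypothetical, and the precision the repo actually loads the weights in is not stated in this guide.

```python
def weight_memory_gib(n_params: float, bytes_per_param: int) -> float:
    """Approximate memory needed just to store the weights, in GiB (2**30 bytes)."""
    return n_params * bytes_per_param / 2**30


if __name__ == "__main__":
    n_params = 6e9  # assumption: ~6 billion parameters, per the "6B" in GPT-J-6B
    for name, nbytes in [("float32", 4), ("float16/bfloat16", 2)]:
        print(f"{name:>17}: ~{weight_memory_gib(n_params, nbytes):.1f} GiB of weights")
```

At 4 bytes per parameter the weights alone come to about 24 GB (roughly 22.4 GiB), which may be part of why a 24 GB card is suggested; 2-byte formats halve that, but activations still need headroom on top.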

== Python virtual environment (Anaconda) ==

conda create --name mesh-transformer-jax python=3.8

conda activate mesh-transformer-jax

== Download & install ==

git clone https://github.com/kingoflolz/mesh-transformer-jax.git

cd mesh-transformer-jax

sudo apt install zstd

wget https://the-eye.eu/public/AI/G....PT-J-6B/step_383500_

tar -I zstd -xf step_383500_slim.tar.zstd

pip install torch==1.8.1+cu111 torchvision==0.9.1+cu111 torchaudio==0.8.1 -f https://download.pytorch.org/whl/torch_stable.html

pip install jaxlib==0.1.67+cuda111 -f https://storage.googleapis.com..../jax-releases/jax_re

pip install -r requirements.txt # (Edited to use tensorflow-gpu 2.5.0 for no reason)

pip install jax==0.2.12

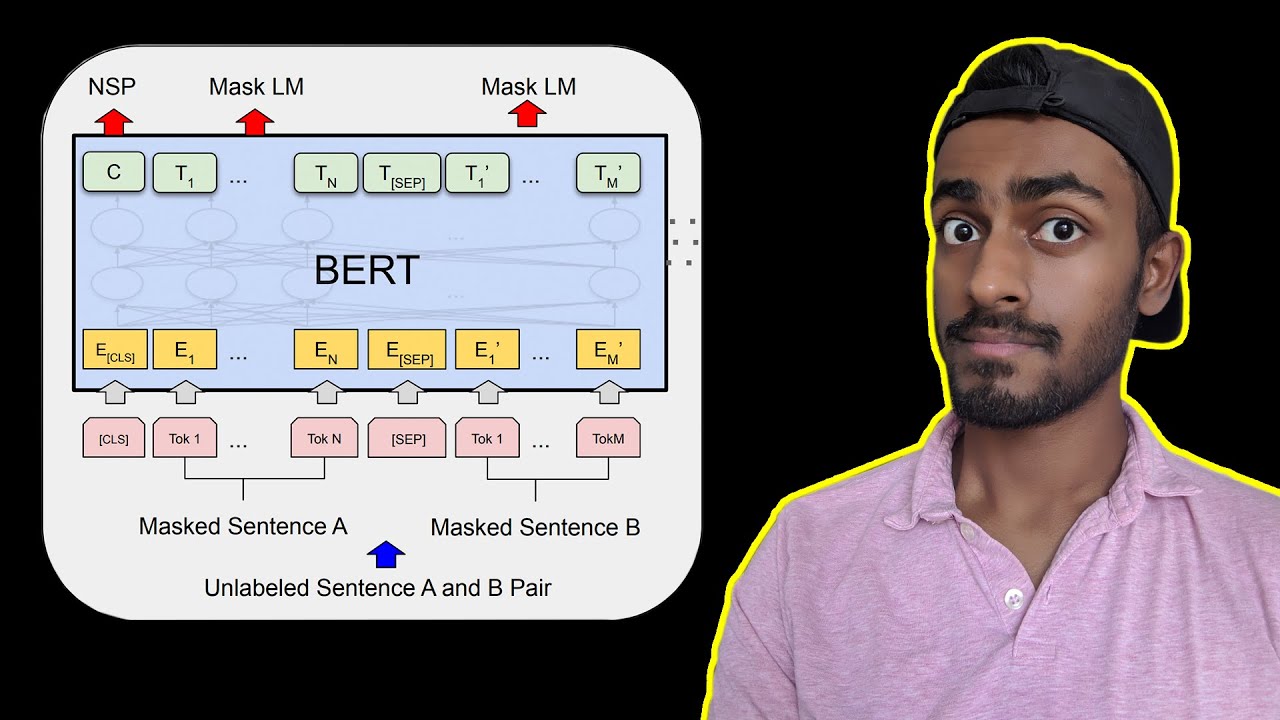

Understand the BERT Transformer in and out.

REFERENCES

[1] BERT main paper: https://arxiv.org/pdf/1810.04805.pdf

[2] BERT in Google Search: https://blog.google/products/s....earch/search-languag

[3] Overview of BERT: https://arxiv.org/pdf/2002.12327v1.pdf

[4] BERT word embeddings explained: https://medium.com/@_init_/why....-bert-has-3-embeddin

[5] More details of BERT in this amazing blog: https://towardsdatascience.com..../bert-explained-stat

[6] Stanford lecture slides on BERT: https://nlp.stanford.edu/semin....ar/details/jdevlin.p

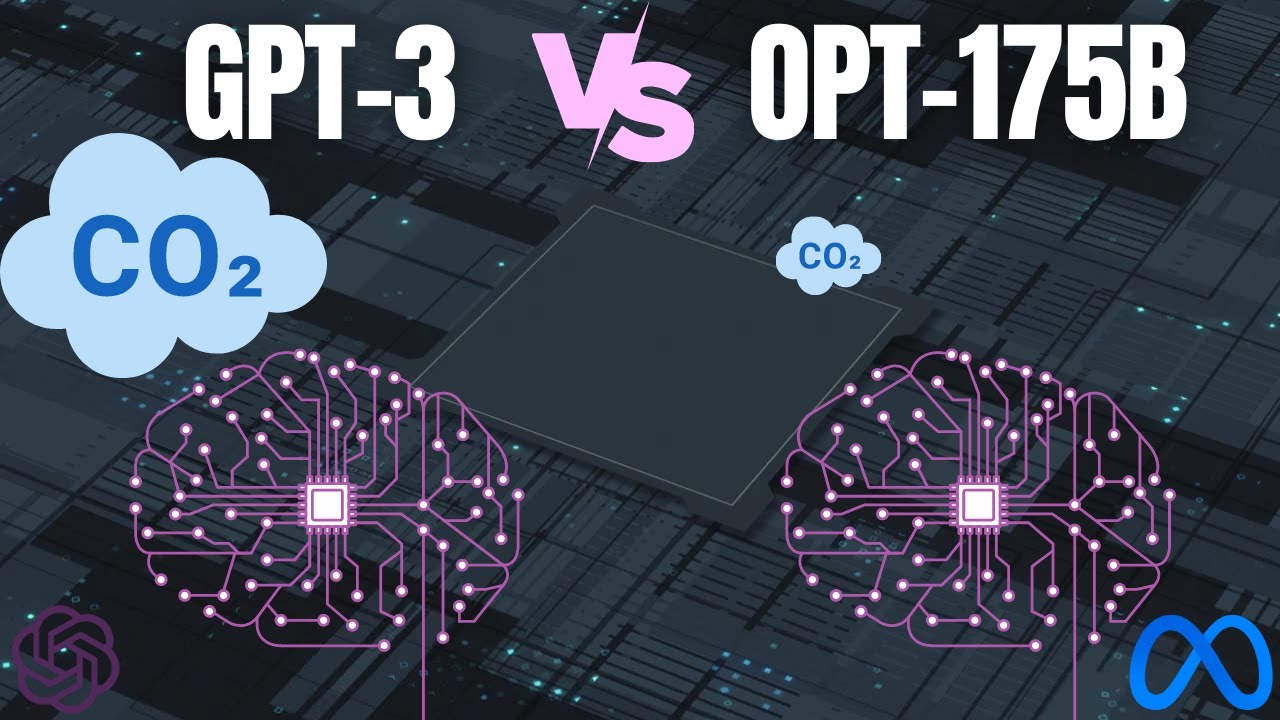

GPT-3 is the biggest language model ever built, and it has been attracting a lot of attention. Rather than argue about whether GPT-3 is overhyped, we wanted to dig into the literature and understand what GPT-3 is (and is not) in light of its predecessors and alternative transformer models. In this video we share some of what we’ve learned. What is GPT-3 really good at? What are its constraints? How useful is it for business? Enjoy!

⏰ Time Stamps ⏰

00:40 - Comparison of latest Natural Language Processing Models

01:09 - What is a Transformer Model

01:50 - The Two Types of Transformer Models

02:15 - Difference between bi-directional encoders (BERT) and autoregressive decoders (GPT)

04:40 - GPT-3 is HUGE, does size matter?

05:24 - Presentation of size differences between GPT-3 relative to BERT, RoBERTa, GPT-2, and T5

07:40 - What does GPT do and how is it different from the BERT family?

18:05 - Is GPT-3 a Child Prodigy or a Parlor Trick?

18:44 - Back to the Issue of GPT-3's size

19:30 - Final thoughts on GPT-3 vs BERT

Short segments of AIAW Podcast Episode 003 with Patrick Couch.
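One concrete way to see the difference between bi-directional encoders (BERT) and autoregressive decoders (GPT) is the attention mask each family uses. A minimal sketch, assuming 1 means "may attend" and 0 means "masked out"; the function names are hypothetical, and real implementations apply such masks to the attention scores inside the model (for example by adding a large negative number wherever the mask is 0).

```python
from typing import List

Mask = List[List[int]]


def bidirectional_mask(seq_len: int) -> Mask:
    """Encoder-style (BERT-like): every position may attend to every position."""
    return [[1] * seq_len for _ in range(seq_len)]


def causal_mask(seq_len: int) -> Mask:
    """Decoder-style (GPT-like): position i may attend only to positions j <= i."""
    return [[1 if j <= i else 0 for j in range(seq_len)] for i in range(seq_len)]


if __name__ == "__main__":
    for row in causal_mask(4):
        print(row)
```

The lower-triangular causal mask is what lets a GPT-style model be trained to predict each next token without seeing the future, while the all-ones mask lets a BERT-style encoder use context from both sides.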

How to Convert MBR to GPT During Windows 10/8/7 Installation.

Sometimes, when you try to install Windows on your PC, the error message "Windows cannot be installed to this disk. The selected disk has an MBR partition table. On EFI systems, Windows can only be installed to GPT disks." pops up and interrupts the installation. Note that the conversion erases (cleans) the drive, as shown in the tutorial.

In this case, you need to convert the disk from MBR to GPT to fix the problem. But how can you do that during Windows installation?

This tutorial applies to computers, laptops, desktops, and tablets running Windows 10, Windows 8/8.1, or Windows 7, and works for all major computer manufacturers (Dell, HP, Acer, Asus, Toshiba, Lenovo, Samsung).

References:

►Read the full article: https://www.louisbouchard.ai/opt-meta/

►Zhang, Susan et al. “OPT: Open Pre-trained Transformer Language Models.” https://arxiv.org/abs/2205.01068

►My GPT-3 video on large language models: https://youtu.be/gDDnTZchKec

►Meta's post: https://ai.facebook.com/blog/d....emocratizing-access-

►Code: https://github.com/facebookresearch/metaseq

https://github.com/facebookresearch/metaseq/tree/main/projects/OPT

Chapters:

0:00 Hey! Tap the Thumbs Up button and Subscribe. You'll learn a lot of cool stuff, I promise.

1:34 Sponsor: w&b

2:28 OPT-175B

#GPT3 #OPT #Meta

Transformers are all the rage nowadays, but how do they work? This video demystifies this novel neural network architecture with a step-by-step explanation and illustrations of how transformers work.

CORRECTIONS:

The sine and cosine functions are actually applied to the embedding dimensions and time steps!
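The correction is easiest to see in code: each entry of the positional encoding depends on both the time step (row) and the embedding dimension (column). A minimal pure-Python sketch, assuming the sinusoidal formulation from the original "Attention Is All You Need" paper (base 10000, sine on even dimensions, cosine on odd ones); the function name is hypothetical.

```python
import math
from typing import List


def sinusoidal_positional_encoding(seq_len: int, d_model: int) -> List[List[float]]:
    """Return a seq_len x d_model table following Vaswani et al. (2017):
    PE[pos][2i]   = sin(pos / 10000 ** (2i / d_model))
    PE[pos][2i+1] = cos(pos / 10000 ** (2i / d_model))
    """
    table = []
    for pos in range(seq_len):  # time step (position in the sequence)
        row = []
        for dim in range(d_model):  # embedding dimension
            even_dim = dim - (dim % 2)  # the shared "2i" of each sin/cos pair
            angle = pos / (10000 ** (even_dim / d_model))
            row.append(math.sin(angle) if dim % 2 == 0 else math.cos(angle))
        table.append(row)
    return table


if __name__ == "__main__":
    pe = sinusoidal_positional_encoding(seq_len=3, d_model=4)
    for pos, row in enumerate(pe):
        print(pos, [round(x, 3) for x in row])
```

Reading down a column shows how one dimension oscillates across time steps; reading across a row shows the frequency dropping as the dimension index grows.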

Hugging Face Write with Transformers

https://transformer.huggingface.co/

Learn how to get started with Hugging Face and the Transformers Library in 15 minutes! Learn all about Pipelines, Models, Tokenizers, PyTorch & TensorFlow integration, and more!
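The Tokenizer & Model part rests on one idea: the model never sees raw text, only integer ids. As a toy illustration only (this is not the Transformers library API, and real tokenizers split text into learned subwords rather than whole words), a word-level tokenizer might look like this; the class name is hypothetical.

```python
from typing import Dict, List


class ToyWordTokenizer:
    """Hypothetical word-level tokenizer: maps words to integer ids and back.
    Real Hugging Face tokenizers use learned subword vocabularies instead."""

    def __init__(self, corpus: List[str]) -> None:
        words = sorted({w for text in corpus for w in text.lower().split()})
        # Reserve id 0 for words never seen in the corpus.
        self.token_to_id: Dict[str, int] = {"[UNK]": 0}
        for w in words:
            self.token_to_id[w] = len(self.token_to_id)
        self.id_to_token = {i: t for t, i in self.token_to_id.items()}

    def encode(self, text: str) -> List[int]:
        return [self.token_to_id.get(w, 0) for w in text.lower().split()]

    def decode(self, ids: List[int]) -> str:
        return " ".join(self.id_to_token[i] for i in ids)


if __name__ == "__main__":
    tok = ToyWordTokenizer(["we are very happy", "we are not happy"])
    ids = tok.encode("We are happy today")
    print(ids)
    print(tok.decode(ids))
```

The round trip text → ids → text is the same shape of workflow a real tokenizer follows, just with a far smaller and cruder vocabulary.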

Hugging Face Tutorial

Hugging Face Crash Course

Sentiment Analysis, Text Generation, Text Classification

Resources:

Website: https://huggingface.co

Course: https://huggingface.co/course

Finetune: https://huggingface.co/docs/transformers/training

Timestamps:

00:00 Intro

00:40 Installation

01:02 Pipeline

04:37 Tokenizer & Model

08:32 PyTorch / TensorFlow

11:07 Save / Load

11:35 Model Hub

13:25 Finetune

#MachineLearning #DeepLearning #HuggingFace

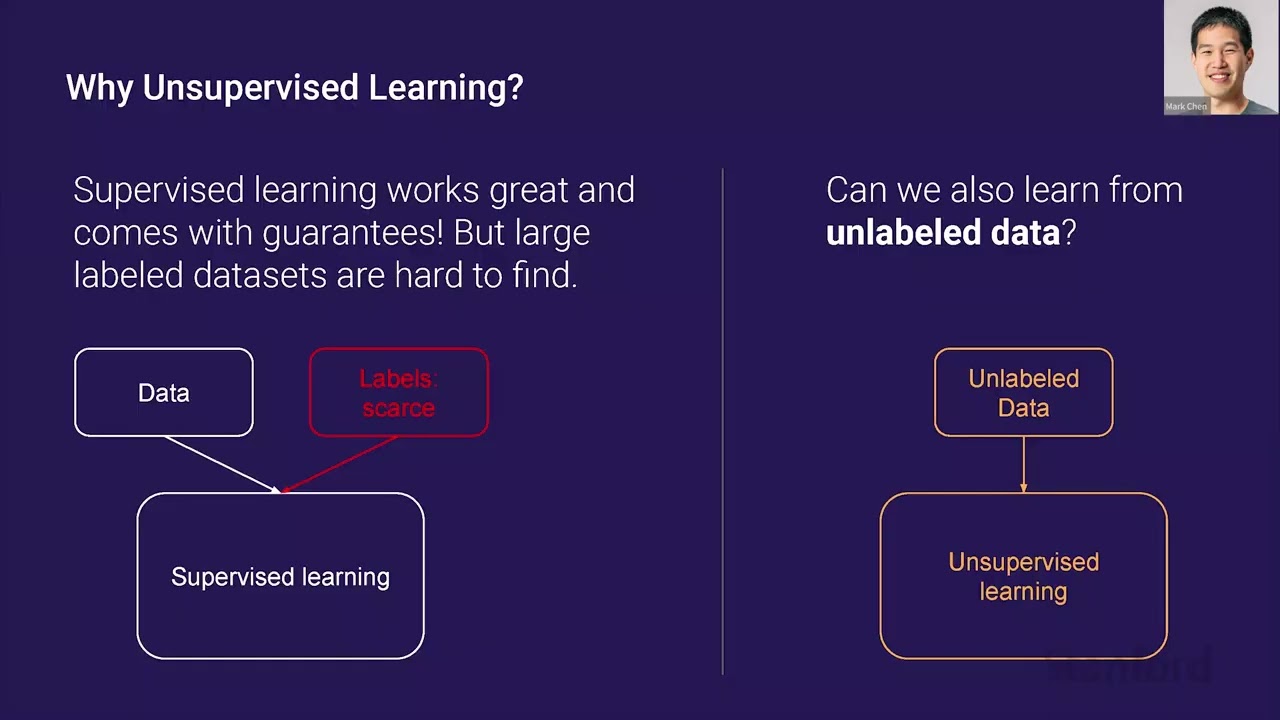

While the Transformer architecture is used in a variety of applications across a number of domains, it first found success in natural language. Today, Transformers remain the de facto model in language - they achieve state-of-the-art results on most natural language benchmarks, and can generate text coherent enough to deceive human readers. In this talk, we will review recent progress in neural language modeling, discuss the link between generating text and solving downstream tasks, and explore how this led to the development of GPT models at OpenAI. Next, we’ll see how the same approach can be used to produce generative models and strong representations in other domains like images, text-to-image, and code. Finally, we will dive into the recently released code generating model, Codex, and examine this particularly interesting domain of study.
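The Codex portion of the talk evaluates generated code with the pass@k metric. A minimal sketch of the unbiased estimator described in the Codex paper (Chen et al. 2021): draw n samples per problem, count the c that pass the unit tests, and estimate the chance that at least one of k samples passes as 1 - C(n-c, k) / C(n, k). The function name and the example numbers are hypothetical.

```python
from math import comb


def pass_at_k(n: int, c: int, k: int) -> float:
    """Unbiased pass@k estimate from n samples, c of which pass the tests."""
    if not 0 <= c <= n or not 1 <= k <= n:
        raise ValueError("need 0 <= c <= n and 1 <= k <= n")
    if n - c < k:
        # Every possible size-k subset contains at least one passing sample.
        return 1.0
    # 1 minus the probability that a random size-k subset has no passing sample.
    return 1.0 - comb(n - c, k) / comb(n, k)


if __name__ == "__main__":
    # Hypothetical numbers: 200 samples for one problem, 10 of which pass.
    for k in (1, 10, 100):
        print(f"pass@{k}: {pass_at_k(200, 10, k):.3f}")
```

`math.comb` (Python 3.8+) keeps the binomial coefficients exact; the paper itself describes an equivalent product form for numerical stability.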

Mark Chen is a research scientist at OpenAI, where he manages the Algorithms Team. His research interests include generative modeling and representation learning, especially in the image and multimodal domains. Prior to OpenAI, Mark worked in high frequency trading and graduated from MIT. Mark is also a coach for the USA Computing Olympiad team.

A full list of guest lectures can be found here: https://www.youtube.com/playli....st?list=PLoROMvodv4r

0:00 Introduction

0:08 3-Gram Model (Shannon 1951)

0:27 Recurrent Neural Nets (Sutskever et al 2011)

1:12 Big LSTM (Jozefowicz et al 2016)

1:52 Transformer (Liu and Saleh et al 2018)

2:33 GPT-2: Big Transformer (Radford et al 2019)

3:38 GPT-3: Very Big Transformer (Brown et al 2020)

5:12 GPT-3: Can Humans Detect Generated News Articles?

9:09 Why Unsupervised Learning?

10:38 Is there a Big Trove of Unlabeled Data?

11:11 Why Use Autoregressive Generative Models for Unsupervised Learning

13:00 Unsupervised Sentiment Neuron (Radford et al 2017)

14:11 GPT (Radford et al 2018)

15:21 Zero-Shot Reading Comprehension

16:44 GPT-2: Zero-Shot Translation

18:15 Language Model Metalearning

19:23 GPT-3: Few Shot Arithmetic

20:14 GPT-3: Few Shot Word Unscrambling

20:36 GPT-3: General Few Shot Learning

23:42 iGPT (Chen et al 2020): Can we apply GPT to images?

25:31 iGPT: Completions

26:24 iGPT: Feature Learning

32:20 Isn't Code Just Another Modality?

33:33 The HumanEval Dataset

36:00 The pass@k Metric

36:59 Codex: Training Details

38:03 An Easy HumanEval Problem (pass@1 ~0.9)

38:36 A Medium HumanEval Problem (pass@1 ~0.17)

39:00 A Hard HumanEval Problem (pass@1 ~0.005)

41:26 Calibrating Sampling Temperature for pass@k

42:19 The Unreasonable Effectiveness of Sampling

43:17 Can We Approximate Sampling Against an Oracle?

45:52 Main Figure

46:53 Limitations

47:38 Conclusion

48:19 Acknowledgements
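Several segments of the talk (calibrating sampling temperature for pass@k, the effectiveness of sampling) depend on how temperature reshapes the model's next-token distribution. A minimal sketch, assuming the common convention of dividing logits by the temperature before a softmax: low temperatures concentrate probability on the top token, high temperatures flatten it, which is the intuition behind using more diverse sampling when many samples (large k) are drawn. Function names and logit values are hypothetical.

```python
import math
import random
from typing import List, Optional


def softmax_with_temperature(logits: List[float], temperature: float) -> List[float]:
    """Divide logits by the temperature, then apply a numerically stable softmax."""
    if temperature <= 0:
        raise ValueError("temperature must be positive")
    scaled = [x / temperature for x in logits]
    m = max(scaled)  # subtract the max so exp() cannot overflow
    exps = [math.exp(x - m) for x in scaled]
    total = sum(exps)
    return [e / total for e in exps]


def sample_token(logits: List[float], temperature: float,
                 rng: Optional[random.Random] = None) -> int:
    """Draw one token index from the temperature-scaled distribution."""
    rng = rng or random.Random()
    probs = softmax_with_temperature(logits, temperature)
    return rng.choices(range(len(probs)), weights=probs, k=1)[0]


if __name__ == "__main__":
    logits = [2.0, 1.0, 0.1]  # hypothetical scores for a 3-token vocabulary
    for t in (0.2, 1.0, 2.0):
        print(t, [round(p, 3) for p in softmax_with_temperature(logits, t)])
    print(sample_token(logits, temperature=0.8, rng=random.Random(0)))
```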

#gpt3

This week we’re looking into transformers. Transformers were introduced a couple of years ago in the paper "Attention Is All You Need" by Google researchers. Since their introduction, transformers have been widely adopted in industry.

Models like BERT and GPT-3 made groundbreaking improvements in NLP using transformers. Since then, model libraries like Hugging Face have made it possible for everyone to use transformer-based models in their projects. But what are transformers, and how do they work? How are they different from other deep learning models like RNNs and LSTMs? Why are they better?

In this video, we learn about it all!
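The core operation that sets transformers apart from RNNs and LSTMs is attention: every position looks at every other position in a single step instead of passing information along a recurrence. A minimal single-head sketch of scaled dot-product attention, softmax(QKᵀ/√d_k)V, as defined in the original paper, written in plain Python with no learned projections, masking or multiple heads; function names and inputs are hypothetical.

```python
import math
from typing import List

Matrix = List[List[float]]


def softmax(row: List[float]) -> List[float]:
    m = max(row)  # subtract the max for numerical stability
    exps = [math.exp(x - m) for x in row]
    total = sum(exps)
    return [e / total for e in exps]


def scaled_dot_product_attention(q: Matrix, k: Matrix, v: Matrix) -> Matrix:
    """softmax(Q K^T / sqrt(d_k)) V for one head, with no masking.

    q has one row per query position; k and v have one row per key position.
    """
    d_k = len(k[0])
    output = []
    for q_row in q:
        # Similarity of this query to every key, scaled by sqrt(d_k).
        scores = [sum(a * b for a, b in zip(q_row, k_row)) / math.sqrt(d_k) for k_row in k]
        weights = softmax(scores)
        # Output is the attention-weighted sum of the value vectors.
        output.append([sum(w * v_row[j] for w, v_row in zip(weights, v))
                       for j in range(len(v[0]))])
    return output


if __name__ == "__main__":
    q = [[1.0, 0.0]]
    k = [[1.0, 0.0], [0.0, 1.0]]
    v = [[10.0, 0.0], [0.0, 10.0]]
    print(scaled_dot_product_attention(q, k, v))
```

The query matches the first key more closely, so the output leans toward the first value vector while still mixing in some of the second.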

Some of my favorite resources on Transformers:

The original paper - https://arxiv.org/pdf/1706.03762.pdf

If you’re interested in following the original paper with the code - http://nlp.seas.harvard.edu/20....18/04/03/attention.h

The Illustrated Transformer – https://jalammar.github.io/ill....ustrated-transformer

Blog about positional encodings - https://kazemnejad.com/blog/tr....ansformer_architectu

About attention - Visualizing A Neural Machine Translation Model - https://jalammar.github.io/vis....ualizing-neural-mach

Layer normalization - https://arxiv.org/abs/1607.06450

Some images used in this video are from:

https://colah.github.io/posts/....2015-08-Understandin

https://jalammar.github.io/vis....ualizing-neural-mach

https://medium.com/nanonets/ho....w-to-easily-build-a-

https://medium.com/swlh/elegan....t-intuitions-behind-

Connect and follow the speaker:

Abhilash Majumder - https://linktr.ee/abhilashmajumder

A blog used in the video:

https://jalammar.github.io/illustrated-gpt2/

Hi friends,

This is the first video in a series on implementing a GPT-style model in TensorFlow. I scoured the web for days looking for a video tutorial on TensorFlow and GPT-style transformer models, to no avail. So I decided to make my own after reading through a dozen or so blog posts and PyTorch implementations.

Notable inspiration:

- https://colab.research.google.....com/github/d2l-ai/d2

- Apoorv Nandan's Text Generation with a Miniature GPT

- and many, many others.

I'm still learning this myself, so any questions or comments are valued. Thanks for your time.

Colab link: https://colab.research.google.....com/drive/1o_-QIR8yV

Transformers

BERT

GPT

GPT-2

GPT-3

Attention is all you need

Deep Learning

NLP

Writing blog posts and emails can be tough at the best of times.

TBH, some days just writing anything can be a struggle.

I mean, right now, I'm struggling to write this description 😅

There's got to be a better way right?

Well, there is. Using the amazing AI power of GPT2 and Python, you can generate your own blog posts with a technique called text generation. It extends to a whole heap of other use cases too: emails, poems, code. You name it, you could probably do it.

In this case, though, we're focused on blog posts. You'll be able to pass in a simple sentence and get a whole chunk of text back that you can then use on your blog!

In this video, you'll learn how to:

1. Set up Hugging Face Transformers to use GPT2-Large

2. Load the GPT2 model and tokenizer

3. Encode text into tokens

4. Generate text using the GPT2 model

5. Decode the output to generate blog posts
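Steps 3 to 5 follow the loop that text generation runs under the hood: encode the prompt to ids, repeatedly ask the model for next-token scores and append one, then decode. A minimal sketch of greedy decoding, with a hypothetical toy scorer standing in for GPT2; it is not the Hugging Face `generate` API, which also supports sampling, beam search and other strategies.

```python
from typing import Callable, Dict, List

# Hypothetical stand-in for a language model: given the token ids so far,
# return a score for every id in the vocabulary.
NextTokenScorer = Callable[[List[int]], List[float]]


def greedy_generate(prompt_ids: List[int], score_next: NextTokenScorer,
                    max_new_tokens: int, eos_id: int) -> List[int]:
    """Append the highest-scoring token one step at a time (greedy decoding)."""
    ids = list(prompt_ids)
    for _ in range(max_new_tokens):
        scores = score_next(ids)
        next_id = max(range(len(scores)), key=scores.__getitem__)
        ids.append(next_id)
        if next_id == eos_id:  # stop once the end-of-sequence token appears
            break
    return ids


if __name__ == "__main__":
    vocab = ["<eos>", "ai", "writes", "blog", "posts"]
    # Toy lookup table: which token id follows each token id.
    follows: Dict[int, int] = {1: 2, 2: 3, 3: 4, 4: 0}

    def toy_scorer(ids: List[int]) -> List[float]:
        scores = [0.0] * len(vocab)
        scores[follows.get(ids[-1], 0)] = 1.0
        return scores

    prompt = [vocab.index("ai")]  # "encode" the prompt
    out = greedy_generate(prompt, toy_scorer, max_new_tokens=10, eos_id=0)
    print(" ".join(vocab[i] for i in out if i != 0))  # "decode" the result
```

Swapping the toy scorer for a real model's logits, and the argmax for sampling, gives the more varied output you would want for blog posts.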

Get the code: https://github.com/nicknochnac....k/Generating-Blog-Po

Chapters:

0:00 - Start

3:34 - Installing Hugging Face Transformers with Python

4:03 - Importing GPT2

5:23 - Loading the GPT2-Large Model and Tokenizer

8:39 - Tokenizing Sentences for AI Text Generation

10:57 - Generating Text using GPT2-Large

11:50 - Decoding Generated Text

14:13 - Outputting Results to .txt files

16:11 - Generating Longer Blog Posts

Happy coding!

Nick

P.S. Let me know how you go, and drop a comment if you need a hand!