Latest videos

Read about this in more detail in my latest blog post: https://www.builder.io/blog/train-ai

#ai #developer #javascript #react

🚀 Small Language Models (SLMs): The Future of AI? 🤖

Most people think AI—like ChatGPT—needs huge servers and an internet connection. While that’s true for Large Language Models (LLMs), AI doesn’t have to be massive!

Introducing Small Language Models (SLMs)—AI that’s lightweight, efficient, and runs entirely offline. 📱💡

🔍 What You’ll Learn in This Video:

✅ SLMs vs. LLMs – What’s the difference?

✅ How SLMs work – Train them on your data for specific tasks.

✅ Real-world examples – AI for customer support, healthcare, and education.

✅ Why SLMs are the future – Privacy-friendly, low-energy, and cost-effective!

🌎 Why This Matters:

Unlike LLMs that require cloud computing, SLMs can run directly on your phone, laptop, or even a Raspberry Pi! No internet needed, no privacy concerns, and perfect for businesses, remote areas, and industries with strict data security requirements.

💡 SLMs Are Changing AI Forever!

• Instant AI-powered customer support 🏢

• Medical assistance in remote areas 🏥

• Personalized education tools for students 📚

• Energy-efficient AI for sustainability 🌱

While much of the world is focused on large language models, our Salesforce AI Research teams are looking at smaller models that offer unique advantages in speed, cost, and accessibility. In this episode, learn what Small Language Models are, how they work, and how they can be used for task-specific situations such as personal agents on wearable devices (for example, smartwatches) and in robotics.

Learn more about Agentforce: https://www.salesforce.com/agentforce/

Learn more about AI Agents: https://www.salesforce.com/agentforce/ai-agents/

The AI Research Lab - Explained gives you a sneak peek of cutting-edge AI techniques developed by Salesforce’s very own AI Research group. Along the way, we’ll demystify complex concepts and share real-world insights—all from the forefront of research.

#Salesforce #Agentforce

Subscribe to Salesforce’s YouTube Channel: http://bit.ly/SalesforceSubscribe

Learn more about Salesforce: https://www.salesforce.com

Facebook: https://www.facebook.com/salesforce

Twitter: https://www.twitter.com/salesforce

Instagram: https://www.instagram.com/salesforce

LinkedIn: https://www.linkedin.com/company/salesforce

About Salesforce:

Improve customer relationships with Salesforce, the #1 AI CRM where humans with agents drive customer success together. Our integrated platform pairs your unified customer data with trusted agents that can assist, take action autonomously, and hand off seamlessly to your employees in sales, service, marketing, commerce, and more.

Disclaimer: This video is for demonstration purposes only. All account data depicted is fictional and does not contain personally identifiable information (PII). This content is intended solely to illustrate product functionality in a simulated environment.

How to build your own Tiny Language Model from scratch - Luca Gilli - PyCon Italia 2025

Elevator Pitch:

Join us to discover Tiny Language Models: ultra-compact, resource-efficient AI tools that deliver impressive results. In this session, you’ll learn how to create your own model, from data preparation to training. These models combine innovation with efficiency, making AI more accessible than ever.

Learn more: https://2025.pycon.it/event/ho....w-to-build-your-own-

#MachineLearning #DeepLearning #NaturalLanguageProcessing

In this video, learn how to build your own Small Language Model (SLM) from scratch using Python, PyTorch, and Hugging Face datasets. We’ll walk through every step — from data collection and preprocessing to tokenization, transformer architecture, and model training.

You’ll discover how a compact 10–15 million parameter model can still generate coherent and meaningful text, using concepts inspired by GPT models. This tutorial simplifies complex AI topics like embedding layers, attention mechanisms, loss computation, and next-token prediction — all explained clearly with working code examples.

🔹 Topics Covered:

What are Small Language Models (SLMs)?

Using the TinyStories dataset from Hugging Face

Tokenization with OpenAI’s tiktoken library

Creating .bin training files for efficient processing

Building a Transformer architecture step-by-step

Implementing GPT-like attention blocks

Training configuration and hyperparameter tuning

Generating text from your trained SLM

🎯 Perfect for AI researchers, ML engineers, and enthusiasts who want to understand how GPT-style models actually work under the hood — without requiring massive GPUs or billion-parameter setups.
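
As a rough illustration of the ".bin training files" step in the topic list, here is a minimal sketch, not the video's actual code. It assumes token IDs fit in 16 bits (true for vocabularies under 65,536 entries), uses made-up token IDs, and relies on the standard-library `array` module in place of whatever the tutorial uses. The helper names `write_bin` and `read_bin` are hypothetical.

```python
import os
import tempfile
from array import array

# Hypothetical token IDs; a real run would take these from a tokenizer.
token_ids = [464, 3290, 318, 257, 922, 3290, 13, 50256]

def write_bin(path, ids):
    """Store token IDs as raw unsigned 16-bit integers."""
    with open(path, "wb") as f:
        array("H", ids).tofile(f)

def read_bin(path):
    """Read the whole file back into a list of token IDs."""
    count = os.path.getsize(path) // array("H").itemsize
    ids = array("H")
    with open(path, "rb") as f:
        ids.fromfile(f, count)
    return list(ids)

path = os.path.join(tempfile.mkdtemp(), "train.bin")
write_bin(path, token_ids)
restored = read_bin(path)
```

Storing fixed-width integers means a training loop can jump to any offset in the file and read a slice without tokenizing the text again.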

#SmallLanguageModel #BuildLLM #GPT2Tutorial #TransformerModel #PyTorch #AIML #DeepLearning #NLP #HuggingFace #TrainYourOwnGPT #SLM #LanguageModel #MachineLearning #ArtificialIntelligence #NanoGPT #AIProjects #PythonCoding #AICoding #OpenSourceAI #generativeai

Dr. Raj Dandekar (MIT PhD) conducted a 7-hour small language model workshop. This is part 3 of that workshop.

In this, we cover the following four aspects:

1. 00:00 Recap of the SLM architecture

2. 18:30 Calculating the SLM Loss

3. 58:27 SLM Pre-training loop: Theory + Coding

4. 01:23:00 SLM Inference

If you want to get access to the detailed lecture notes, register here: https://vizuara.ai/courses/bui....ld-slm-from-scratch-
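
For the loss segment listed above, the following is a minimal sketch that assumes the loss is the usual cross-entropy on next-token predictions; the workshop's own code may differ. The logits, targets and function names are invented for illustration.

```python
import math

def softmax(logits):
    """Turn raw scores into probabilities (shifted by the max for stability)."""
    m = max(logits)
    exps = [math.exp(z - m) for z in logits]
    total = sum(exps)
    return [e / total for e in exps]

def next_token_loss(logits_per_position, targets):
    """Mean negative log-probability assigned to the correct next token."""
    losses = []
    for logits, target in zip(logits_per_position, targets):
        probs = softmax(logits)
        losses.append(-math.log(probs[target]))
    return sum(losses) / len(losses)

# Toy vocabulary of 4 tokens and 2 positions; all numbers are made up.
logits = [[2.0, 0.5, 0.1, -1.0], [0.0, 0.0, 0.0, 0.0]]
targets = [0, 3]
loss = next_token_loss(logits, targets)
```

A model that spreads probability evenly over a vocabulary of size V scores log(V) per token, which is a handy sanity check for the loss at the start of training.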

Dr. Raj Dandekar (MIT PhD) conducted a 7-hour small language model workshop. This is part 1 of that workshop.

In this, we cover the following four aspects:

1. 00:00 Introduction to SLM

2. 40:15 Dataset loading

3. 53:36 Tokenization

4. 01:24:42 Creating input-output pairs

If you want to get access to the detailed lecture notes, register here: https://vizuara.ai/courses/bui....ld-slm-from-scratch-
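
The "input-output pairs" step can be pictured with a short sketch, assuming the common next-token setup where the target is the input window shifted by one position. The token IDs and the `make_pairs` helper are hypothetical and not taken from the workshop notes.

```python
def make_pairs(token_ids, context_length):
    """Slide a window over the tokens; each target is the input shifted by one."""
    pairs = []
    for start in range(len(token_ids) - context_length):
        x = token_ids[start : start + context_length]
        y = token_ids[start + 1 : start + context_length + 1]
        pairs.append((x, y))
    return pairs

tokens = [10, 20, 30, 40, 50, 60]  # made-up token IDs
pairs = make_pairs(tokens, context_length=4)
```

Each position in `x` is paired with the token that follows it, so one window of length 4 yields four prediction tasks at once.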

In this video, Dr. Raj Dandekar (MIT PhD) teaches you how to build a production-level SLM entirely from scratch.

You will learn the following:

(1) Creating the dataset

(2) Tokenizing the dataset

(3) Creating input-target pairs

(4) Creating the entire SLM architecture

(5) Setting up the SLM for pre-training

(6) Pre-training the SLM

(7) Inference

Google Colab Notebook: https://colab.research.google.....com/drive/1k4G3G5MxY

A video about decision trees, and how to train them on a simple example.

Accompanying blog post: https://medium.com/@luis.serra....no/splitting-data-by

For a code implementation, check out this repo:

https://github.com/luisguiserr....ano/manning/tree/mas

Helper videos:

- Gini index: https://www.youtube.com/watch?v=u4IxOk2ijSs

- Entropy and information gain: https://www.youtube.com/watch?v=9r7FIXEAGvs

- Machine learning error and metrics: https://www.youtube.com/watch?v=aDW44NPhNw0

Grokking Machine Learning book:

www.manning.com/books/grokking-machine-learning

40% discount code: serranoyt

For a code implementation, check out this repo:

https://github.com/luisguiserr....ano/manning/tree/mas

Announcement: New Book by Luis Serrano! Grokking Machine Learning. bit.ly/grokkingML

40% discount code: serranoyt

An introduction to support vector machines (SVMs) that requires very little math (no calculus or linear algebra), only a visual mind.

This is the third of a series of three videos.

- Linear Regression: https://www.youtube.com/watch?v=wYPUhge9w5c

- Logistic Regression: https://www.youtube.com/watch?v=jbluHIgBmBo

0:00 Introduction

1:42 Classification goal: split data

3:14 Perceptron algorithm

6:00 Split data - separate lines

7:05 How to separate lines?

12:01 Expanding rate

18:19 Perceptron Error

19:26 SVM Classification Error

20:34 Margin Error

25:13 Challenge - Gradient Descent

27:25 Which line is better?

28:24 The C parameter

30:16 Series of 3 videos

30:30 Thank you!
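
The chapter list names a classification error, a margin error and a C parameter. Here is a minimal sketch of how those pieces are commonly combined, assuming the standard soft-margin formulation (hinge loss plus squared weight norm, labels +1 and -1); the video may define them slightly differently. The points, weights and function names are made up.

```python
def classification_error(w, b, points, labels):
    """Sum of hinge losses: zero for points on the correct side of their margin line."""
    total = 0.0
    for (x1, x2), y in zip(points, labels):
        score = w[0] * x1 + w[1] * x2 + b
        total += max(0.0, 1.0 - y * score)
    return total

def margin_error(w):
    """Squared norm of the weights; a smaller value means a wider margin."""
    return w[0] ** 2 + w[1] ** 2

def svm_error(w, b, points, labels, C):
    """C controls how much misclassification matters relative to margin width."""
    return C * classification_error(w, b, points, labels) + margin_error(w)

points = [(2.0, 2.0), (0.0, 0.0), (1.0, 0.5)]  # hypothetical data
labels = [1, -1, -1]
w, b = (1.0, 1.0), -2.0

low_c = svm_error(w, b, points, labels, C=1.0)
high_c = svm_error(w, b, points, labels, C=10.0)
```

With a large C the classifier is pushed to classify every point well even if the margin shrinks; with a small C it prefers a wide margin and tolerates a few errors.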

CORRECTION: At 10:56 we shouldn't divide by 4 to get the covariance; we should divide by the sum of the weights, 1+1+1+1/3, which is 10/3. That means the variances and covariance are the following:

Var(x) = 1.056

Var(y) = 0.864

Cov(x,y) = 0.768

(Thank you Shivkumar Pippal!)

Mean, variance, covariance, and the covariance matrix for a dataset and a weighted dataset.
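
A minimal sketch of the weighted case, assuming the convention that weighted sums are divided by the total weight. The data and weights below are hypothetical and are not the points used in the video.

```python
def weighted_mean(values, weights):
    return sum(w * v for v, w in zip(values, weights)) / sum(weights)

def weighted_cov(xs, ys, weights):
    """Weighted covariance: divide by the sum of the weights, not the point count."""
    mx = weighted_mean(xs, weights)
    my = weighted_mean(ys, weights)
    total = sum(w * (x - mx) * (y - my) for x, y, w in zip(xs, ys, weights))
    return total / sum(weights)

def covariance_matrix(xs, ys, weights):
    """2x2 matrix [[Var(x), Cov(x,y)], [Cov(x,y), Var(y)]]."""
    cxy = weighted_cov(xs, ys, weights)
    return [[weighted_cov(xs, xs, weights), cxy],
            [cxy, weighted_cov(ys, ys, weights)]]

# Hypothetical points; with all weights equal to 1 this is the ordinary case.
xs, ys = [1.0, 2.0, 3.0], [2.0, 4.0, 6.0]
matrix = covariance_matrix(xs, ys, [1.0, 1.0, 1.0])
```

Passing unequal weights, such as `[1, 1, 1/3]`, shrinks the influence of the down-weighted point on both the means and the matrix.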

Announcement: Book by Luis Serrano! Grokking Machine Learning. bit.ly/grokkingML

40% discount code: serranoyt

0:00 Introduction

0:09 The covariance matrix

2:22 Average

3:23 X-variance

5:06 Problem: Same variances

7:59 Formulas

10:30 Center points

For a code implementation, check out these repos:

https://github.com/luisguiserr....ano/manning/tree/mas

https://github.com/luisguiserr....ano/manning/tree/mas

Announcement: New Book by Luis Serrano! Grokking Machine Learning. bit.ly/grokkingML

40% discount code: serranoyt

An introduction to logistic regression and the perceptron algorithm that requires very little math (no calculus or linear algebra), only a visual mind.

0:00 Introduction

0:08 Series of 3 videos

0:41 E-mail spam classifier

7:19 Classification goal: split data

11:36 How to move a line

12:21 Rotating and translating

18:47 Perceptron Trick

23:20 Correctly and incorrectly classified points

24:20 Positive and negative regions

27:18 Perceptron Error

29:40 Gradient Descent

34:36 A friendly introduction to deep learning and neural networks

37:48 Activation function (sigmoid)

38:31 Log-Loss Error

41:37 Perceptron Algorithm

42:45 Logistic regression algorithm

44:48 Thank you!
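
The perceptron trick from the chapter list can be sketched as follows, assuming the standard update rule with labels 0 and 1: a misclassified point nudges the line toward itself, and a correctly classified point changes nothing. The data and function names are made up for illustration.

```python
def predict(w, b, x):
    """Return 1 if the point lies on the positive side of the line, else 0."""
    return 1 if w[0] * x[0] + w[1] * x[1] + b >= 0 else 0

def perceptron_trick(w, b, x, label, learning_rate):
    """Move the line toward a misclassified point; leave it alone otherwise."""
    error = label - predict(w, b, x)  # 0 if correct, +1 or -1 otherwise
    new_w = (w[0] + learning_rate * error * x[0],
             w[1] + learning_rate * error * x[1])
    new_b = b + learning_rate * error
    return new_w, new_b

def train(points, labels, epochs, learning_rate):
    w, b = (0.0, 0.0), 0.0
    for _ in range(epochs):
        for x, label in zip(points, labels):
            w, b = perceptron_trick(w, b, x, label, learning_rate)
    return w, b

# Hypothetical, linearly separable data (the logical AND of two inputs).
points = [(0.0, 0.0), (0.0, 1.0), (1.0, 0.0), (1.0, 1.0)]
labels = [0, 0, 0, 1]
w, b = train(points, labels, epochs=20, learning_rate=1.0)
```

Logistic regression follows the same loop but replaces the hard 0/1 prediction with a sigmoid output, so every point contributes a small correction.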

serranosmusicacademy.com

Covariance matrix video: https://youtu.be/WBlnwvjfMtQ

Clustering video: https://youtu.be/QXOkPvFM6NU

A friendly description of Gaussian mixture models, a very useful soft clustering method.

Announcement: New Book by Luis Serrano! Grokking Machine Learning. bit.ly/grokkingML

40% discount code: serranoyt

0:00 Introduction

0:13 Clustering applications

1:56 Hard clustering - soft clustering

3:36 Step 1: Colouring points

6:10 Step 2: Fitting a Gaussian

10:33 Gaussian Mixture Models (GMM)
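
The two steps in the chapter list can be sketched in one dimension, assuming they correspond to the usual expectation-maximization loop (soft memberships, then refitting each Gaussian). The data, starting values and function names are hypothetical.

```python
import math

def gaussian_pdf(x, mean, var):
    return math.exp(-((x - mean) ** 2) / (2 * var)) / math.sqrt(2 * math.pi * var)

def colour_points(data, weights, means, variances):
    """Step 1: give each point a soft membership in every cluster."""
    memberships = []
    for x in data:
        scores = [w * gaussian_pdf(x, m, v)
                  for w, m, v in zip(weights, means, variances)]
        total = sum(scores)
        memberships.append([s / total for s in scores])
    return memberships

def fit_gaussians(data, memberships, k):
    """Step 2: refit each Gaussian, using memberships as point weights."""
    weights, means, variances = [], [], []
    for j in range(k):
        r = [m[j] for m in memberships]
        total = sum(r)
        mean = sum(ri * x for ri, x in zip(r, data)) / total
        var = sum(ri * (x - mean) ** 2 for ri, x in zip(r, data)) / total
        weights.append(total / len(data))
        means.append(mean)
        variances.append(max(var, 1e-6))  # floor to avoid a collapsed Gaussian
    return weights, means, variances

data = [1.0, 1.2, 0.8, 1.1, 5.0, 5.3, 4.7, 5.1]  # made-up 1D points
weights, means, variances = [0.5, 0.5], [0.0, 6.0], [1.0, 1.0]
for _ in range(20):
    memberships = colour_points(data, weights, means, variances)
    weights, means, variances = fit_gaussians(data, memberships, k=2)
```

Unlike hard clustering, every point keeps a fractional membership in each cluster, so points between two groups influence both Gaussians.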

Correction: At 30:42 I write "X = Y". They're not equal; what I meant to say is "X and Y are identically distributed".

The variance is a measure of how spread out a distribution is. In order to estimate the variance, one takes a sample of n points from the distribution and calculates the average squared deviation from the sample mean.

However, this doesn't give a good estimate of the variance of the distribution: on average it underestimates it. A better estimate is obtained by dividing by n-1 instead of n.

WHY!?!?!?!?!?!?!?

In this video, we dig deeper into why the variance calculation should be divided by n-1 instead of by n. For this, we use an alternate definition of the variance, which doesn't use the mean in its calculation.
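
One mean-free form of the variance is half the average squared difference between pairs of points. Assuming that is the alternate definition meant here, a minimal sketch shows the effect numerically: averaging over all pairs, including each point paired with itself, reproduces the divide-by-n value, while dropping those zero-difference self-pairs reproduces the divide-by-(n-1) value. The sample and function name are made up.

```python
import statistics
from itertools import product

def pairwise_variance(sample, keep_self_pairs):
    """Half the mean squared difference over ordered pairs of sample points."""
    n = len(sample)
    pairs = [(i, j) for i, j in product(range(n), repeat=2)
             if keep_self_pairs or i != j]
    total = sum((sample[i] - sample[j]) ** 2 for i, j in pairs)
    return total / (2 * len(pairs))

sample = [2.0, 4.0, 4.0, 5.0, 9.0]  # hypothetical sample
with_zeros = pairwise_variance(sample, keep_self_pairs=True)
without_zeros = pairwise_variance(sample, keep_self_pairs=False)

# Compare against the standard library's two variance estimators.
divide_by_n = statistics.pvariance(sample)
divide_by_n_minus_1 = statistics.variance(sample)
```

The n self-pairs always contribute zero, so including them drags the average down by a factor of (n-1)/n, which is exactly the gap Bessel's correction closes.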

*[0:00] Introduction and Bessel's Correction*

- Introducing Bessel's Correction and why we divide by \( n-1 \) instead of \( n \) to estimate variance.

*[0:12] Introduction to Variance Calculation*

- Explaining the premise of calculating variance and introducing the concept of estimating variance using a sample instead of the entire population.

*[1:01] Definition of Variance*

- Defining variance as a measure of how much values deviate from the mean and outlining the basic steps of variance calculation.

*[1:52] Introduction to Bessel's Correction*

- Discussing why we divide by \( n-1 \) when calculating variance and introducing Bessel's Correction.

*[2:35] Challenges of Bessel's Correction*

- Sharing personal challenges in understanding the rationale behind Bessel's Correction and discussing my research process on the topic.

*[3:20] Alternative Definition of Variance*

- Presenting an alternative definition of variance to aid in understanding Bessel's Correction and expressing curiosity about its presence in the literature.

*[4:45] Quick Recap of Mean and Variance*

- Briefly revisiting the concepts of mean and variance, demonstrating how they are calculated with examples, and explaining how variance reflects different distributions.

*[7:05] Sample Mean and Variance Estimation*

- Explaining the challenges of estimating the mean and variance of a distribution using a sample and discussing why sample variance is not a good estimate.

*[8:49] Bessel's Correction and Why \( n-1 \) is Used*

- Explaining how Bessel's Correction provides a better estimate of variance and why we divide by \( n-1 \) instead of \( n \). Emphasizing the importance of making a correct variance estimate.

*[10:51] Why Better Estimation Matters?*

- Discussing why the original estimate is poor and why making a better estimate is crucial. Explaining the significance of sample mean as a good estimate.

*[13:02] Issues with Variance Estimation*

- Illustrating the problems with variance estimation and demonstrating with examples why using the correct mean is essential for accurate estimates. Explaining the accuracy of estimates made using \( n-1 \).

*[15:04] Introduction to Correcting the Estimate*

- Discussing the underestimated variance and the need for correction in estimation.

*[15:57] Adjusting the Variance Formula*

- Explaining the adjustment in the variance formula by changing the denominator from \( n \) to \( n - 1 \).

*[16:22] Calculation Illustration*

- Demonstrating the calculation process of variance with the adjusted formula using examples.

*[16:57] Better Estimate with Bessel's Correction*

- Discussing how the corrected estimate provides a more accurate variance estimation.

*[18:24] New Method for Variance Calculation*

- Introducing a new method for calculating variance without explicitly calculating the mean.

*[20:06] Understanding the Relation between the Two Definitions of Variance*

- Explaining the relationship between the usual definition of variance and the alternative definition, and how they are related mathematically.

*[21:52] Demonstrating a Bad Calculation*

- Illustrating a flawed method for calculating variance and explaining the need for correction.

*[23:37] The Role of Bessel's Correction*

- Explaining why removing unnecessary zeros in variance calculation leads to better estimates, equivalent to Bessel's Correction.

*[25:08] Summary of Estimation Methods*

- Summarizing the difference between the flawed and corrected estimation methods for variance.

*[26:02] Importance of Bessel's Correction*

- Emphasizing the significance of Bessel's Correction for accurate variance estimation, especially with smaller sample sizes.

*[30:19] Mathematical Proof of Variance Relationship*

- Providing two proofs of the relationship between the usual definition of variance and the alternative definition, highlighting their equivalence.

*[35:24] Acknowledgments and Conclusion*

Thanks @mkan543 for the summary!

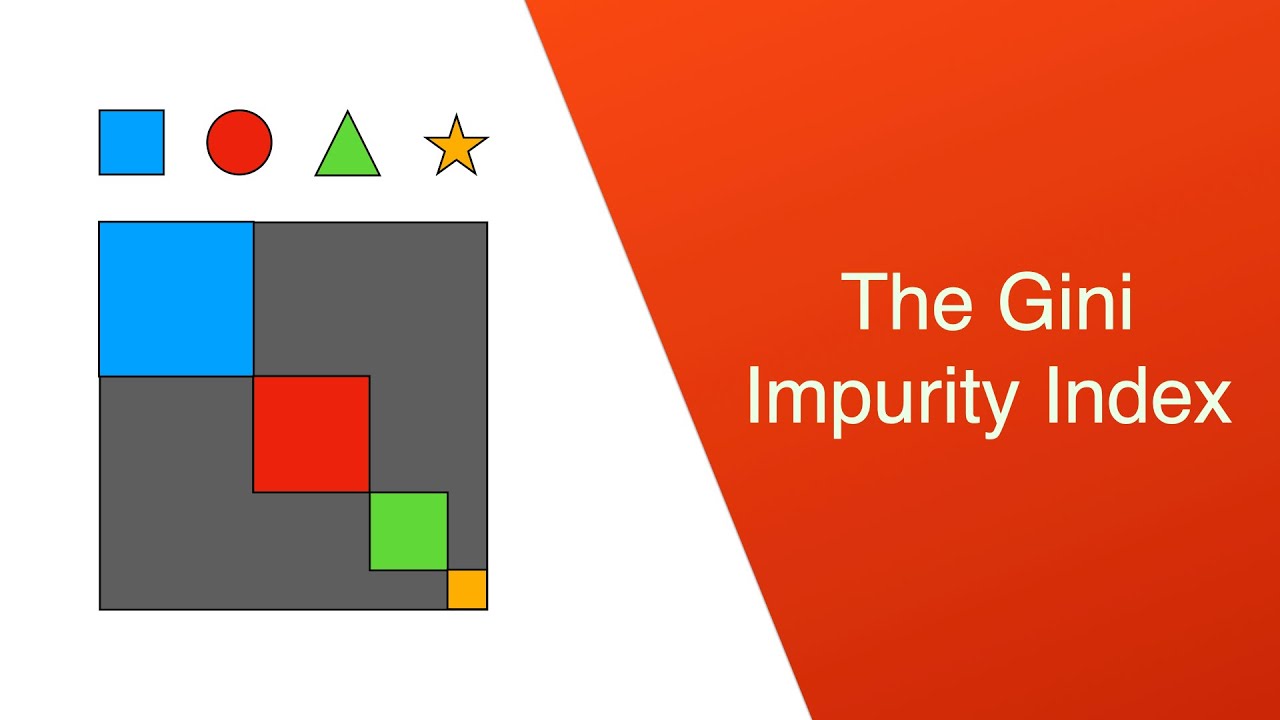

The Gini Impurity Index is a measure of the diversity in a dataset. In this short video you'll learn a very simple way to calculate it using probabilities.
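
A minimal sketch of the probability view, assuming the standard definition: the Gini impurity is the chance that two items drawn at random, with replacement, carry different labels. The labels and function name are made up.

```python
from collections import Counter

def gini_impurity(labels):
    """1 minus the probability that two random draws share the same label."""
    n = len(labels)
    counts = Counter(labels)
    return 1.0 - sum((c / n) ** 2 for c in counts.values())

mixed = gini_impurity(["spam", "spam", "ham", "ham"])
pure = gini_impurity(["spam", "spam", "spam", "spam"])
```

A dataset with a single label has impurity 0, and the value grows as the labels become more evenly mixed, which is why decision trees pick splits that lower it.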

Announcement: Book by Luis Serrano! Grokking Machine Learning. bit.ly/grokkingML

40% discount code: serranoyt