Latest videos

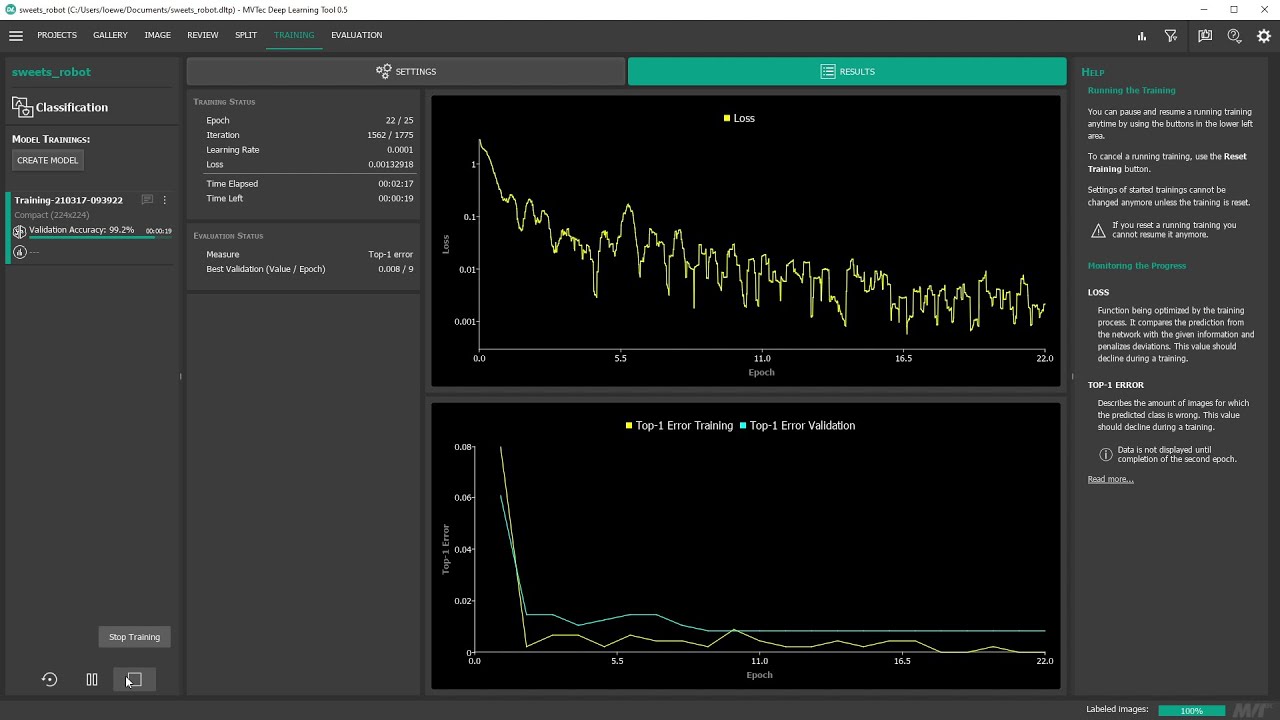

With the MVTec Deep Learning Tool it’s possible to train a deep-learning-based classification model from scratch. First, we import images of our application. Using the folder structure, the Deep Learning Tool can assign labels automatically. We can then easily review our data by filtering the images according to these labels. After that, we train the model using transfer learning and pretrained networks provided by MVTec. Lastly, we can review the trained model in detail, using a confusion matrix, heatmaps and more. The exported trained model, including the associated dictionary that contains the preprocessing parameters, can be imported into HDevelop and MERLIC and then used for inference. In this video, version 0.5 of the Deep Learning Tool is used.
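
As a rough illustration of the idea (this is not the Deep Learning Tool's API, just a plain-Python sketch), labels can be derived from the name of each image's parent folder, the data can be filtered by label, and a confusion matrix can be tallied from true and predicted labels. The folder names, file names, and predictions below are made up for the example.

```python
from collections import Counter
from pathlib import PurePosixPath


def label_from_path(image_path: str) -> str:
    """Use the name of the image's parent folder as its class label."""
    return PurePosixPath(image_path).parent.name


def confusion_matrix(true_labels, predicted_labels):
    """Count how often each (true label, predicted label) pair occurs."""
    return Counter(zip(true_labels, predicted_labels))


# Hypothetical dataset layout: <root>/<label>/<image file>
image_paths = [
    "dataset/good/img_001.png",
    "dataset/good/img_002.png",
    "dataset/scratch/img_003.png",
    "dataset/dent/img_004.png",
]
labels = [label_from_path(path) for path in image_paths]

# Review the data by filtering the images on one label.
scratch_images = [p for p, label in zip(image_paths, labels) if label == "scratch"]

# Made-up model predictions, only to show how the matrix is tallied.
predictions = ["good", "scratch", "scratch", "dent"]
matrix = confusion_matrix(labels, predictions)

for (true_label, predicted_label), count in sorted(matrix.items()):
    print(f"true={true_label:8s} predicted={predicted_label:8s} count={count}")
```

Entries where the true and predicted labels differ (here, one "good" image predicted as "scratch") are the misclassifications that a confusion matrix makes visible.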

Get more information at https://www.mvtec.com/products/deep-learning-tool/

Join The Sound Of AI Slack community:

https://join.slack.com/t/thesoundofai/shared_invite/zt-f71npumr-anli6W4QCuZ8UCj2gLoBkw

In this video, you can get an understanding of the sound generation task, and learn to classify different types of sound generation systems.

I discuss the approaches and Deep Learning models used to generate sound. I also outline the challenges encountered with different methods, and discuss the features used to train generative sound systems.

Slides:

https://github.com/musikalkemi....st/generating-sound-

Interested in hiring me as a consultant/freelancer?

https://valeriovelardo.com/

Follow Valerio on Facebook:

https://www.facebook.com/TheSoundOfAI

Connect with Valerio on Linkedin:

https://www.linkedin.com/in/valeriovelardo

Follow Valerio on Twitter:

https://twitter.com/musikalkemist

===============================

Content:

0:00 Intro

0:33 Defining the sound generation task

1:17 Classification of sound generation systems

2:14 Types of generated sounds

3:41 Sound representations

4:07 Generation from raw audio

7:40 Challenges of raw audio generation

10:21 Generation from spectrograms

16:12 Advantages of generation from spectrograms

18:07 Challenges of generation from spectrograms

20:26 Can we generate sound with MFCCs?

21:26 DL architectures for sound generation

22:13 Inputs for generation

24:03 Details about the sound generative system we'll build

24:44 What's next?

===============================

Mentioned papers:

Wavenet: A Generative Model for Raw Audio:

https://arxiv.org/pdf/1609.03499.pdf

Jukebox: A Generative Model for Music

https://arxiv.org/pdf/2005.00341

DrumGAN: Synthesis of Drum Sounds with Timbral Feature Conditioning Using Generative Adversarial Networks

https://arxiv.org/pdf/2008.12073

Melnet: A generative model for audio in the frequency domain

https://arxiv.org/pdf/1906.01083.pdf

Subscribe: http://bit.ly/subscribeToSmitha

Tesla has pioneered a major shift in the auto industry towards self-driving cars. Have you ever wondered how it achieves this? Let's discuss the essentials of the self-driving capabilities in a Tesla car:

1. Computer Vision:

A broad field in Artificial Intelligence focusing on enabling computers to see. Tesla cars primarily make use of two Computer Vision applications that rely on Deep Learning: Image Localization and Image Classification. Image Localization allows Tesla cars to determine where an object is, while Image Classification allows the car to identify the object. The two go hand in hand to allow Tesla cars to "see".

2. Decision Making:

Besides using Deep Learning to build efficient models that let it "see", a Tesla car has to carry out these resource-intensive tasks in real time, so its chips have to be powerful enough to handle all the computations involved in self-driving. Tesla unveiled its own chips in 2019, which it claims are 21x more powerful than Nvidia chips.

Socials:

LinkedIn: https://www.linkedin.com/in/smithakolan/

Instagram: https://www.instagram.com/smithacodes/

Graph machine learning has become very popular in recent years in the machine learning and engineering communities. In this video, we explore the math behind some of the most popular graph neural network algorithms!

Support the channel by liking, commenting, subscribing, and recommending this video to your friends, coworkers, or colleagues if you think they'll find it valuable!

Other Videos in this Series

Why use graphs for machine learning? https://youtu.be/mu1Inz3ltlo

Intro to graph neural networks https://youtu.be/cka4Fa4TTI4

Spatio-Temporal Graph Neural Networks https://youtu.be/RRMU8kJH60Q

My Links:

Youtube: https://www.youtube.com/channe....l/UCpXbaIslF2ZKeJ6rW

Twitter: https://twitter.com/jhanytime

Reddit: https://old.reddit.com/user/jhanytime/

Other Links:

Slides: https://drive.google.com/file/....d/1C9rRQ46kfFVQUNrg8

Patreon: https://www.patreon.com/mlst

Discord: https://discord.gg/ESrGqhf5CB

"Symmetry, as wide or narrow as you may define its meaning, is one idea by which man through the ages has tried to comprehend and create order, beauty, and perfection." and that was a quote from Hermann Weyl, a German mathematician who was born in the late 19th century.

The last decade has witnessed an experimental revolution in data science and machine learning, epitomised by deep learning methods. Many high-dimensional learning tasks previously thought to be beyond reach -- such as computer vision, playing Go, or protein folding -- are in fact tractable given enough computational horsepower. Remarkably, the essence of deep learning is built from two simple algorithmic principles: first, the notion of representation or feature learning and second, learning by local gradient-descent type methods, typically implemented as backpropagation.

While learning generic functions in high dimensions is a cursed estimation problem, many tasks are not uniform and have strong repeating patterns as a result of the low-dimensionality and structure of the physical world.

Geometric Deep Learning unifies a broad class of ML problems from the perspectives of symmetry and invariance. These principles not only underlie the breakthrough performance of convolutional neural networks and the recent success of graph neural networks but also provide a principled way to construct new types of problem-specific inductive biases.

This week we spoke with Professor Michael Bronstein (head of graph ML at Twitter), Dr. Petar Veličković (Senior Research Scientist at DeepMind), Dr. Taco Cohen, and Prof. Joan Bruna about their new proto-book Geometric Deep Learning: Grids, Groups, Graphs, Geodesics, and Gauges.

Enjoy the show!

Geometric Deep Learning: Grids, Groups, Graphs, Geodesics, and Gauges

https://arxiv.org/abs/2104.13478

[00:00:00] Tim Intro

[00:01:55] Fabian Fuchs article

[00:04:05] High dimensional learning and curse

[00:05:33] Inductive priors

[00:07:55] The proto book

[00:09:37] The domains of geometric deep learning

[00:10:03] Symmetries

[00:12:03] The blueprint

[00:13:30] NNs don't deal with network structure (TedX)

[00:14:26] Penrose - standing edition

[00:15:29] Past decade revolution (ICLR)

[00:16:34] Talking about the blueprint

[00:17:11] Interpolated nature of DL / intelligence

[00:21:29] Going back to Euclid

[00:22:42] Erlangen program

[00:24:56] “How is geometric deep learning going to have an impact”

[00:26:36] Introduce Michael and Petar

[00:28:35] Petar Intro

[00:32:52] Algorithmic reasoning

[00:36:16] Thinking fast and slow (Petar)

[00:38:12] Taco Intro

[00:46:52] Deep learning is the craze now (Petar)

[00:48:38] On convolutions (Taco)

[00:53:17] Joan Bruna's voyage into geometric deep learning

[00:56:51] What is your most passionately held belief about machine learning? (Bronstein)

[00:57:57] Is the function approximation theorem still useful? (Bruna)

[01:11:52] Could an NN learn a sorting algorithm efficiently (Bruna)

[01:17:08] Curse of dimensionality / manifold hypothesis (Bronstein)

[01:25:17] Will we ever understand approximation of deep neural networks (Bruna)

[01:29:01] Can NNs extrapolate outside of the training data? (Bruna)

[01:31:21] What areas of math are needed for geometric deep learning? (Bruna)

[01:32:18] Graphs are really useful for representing most natural data (Petar)

[01:35:09] What was your biggest aha moment early (Bronstein)

[01:39:04] What gets you most excited? (Bronstein)

[01:39:46] Main show kick off + Conservation laws

[01:49:10] Graphs are king

[01:52:44] Vector spaces vs discrete

[02:00:08] Does language have a geometry? Which domains can geometry not be applied? +Category theory

[02:04:21] Abstract categories in language from graph learning

[02:07:10] Reasoning and extrapolation in knowledge graphs

[02:15:36] Transformers are graph neural networks?

[02:21:31] Tim never liked positional embeddings

[02:24:13] Is the case for invariance overblown? Could they actually be harmful?

[02:31:24] Why is geometry a good prior?

[02:34:28] Augmentations vs architecture and on learning approximate invariance

[02:37:04] Data augmentation vs symmetries (Taco)

[02:40:37] Could symmetries be harmful (Taco)

[02:47:43] Discovering group structure (from Yannic)

[02:49:36] Are fractals a good analogy for physical reality?

[02:52:50] Is physical reality high dimensional or not?

[02:54:30] Heuristics which deal with permutation blowups in GNNs

[02:59:46] Practical blueprint of building a geometric network architecture

[03:01:50] Symmetry discovering procedures

[03:04:05] How could real world data scientists benefit from geometric DL?

[03:07:17] Most important problem to solve in message passing in GNNs

[03:09:09] Better RL sample efficiency as a result of geometric DL (XLVIN paper)

[03:14:02] Geometric DL helping latent graph learning

[03:17:07] On intelligence

[03:23:52] Convolutions on irregular objects (Taco)

Join My telegram group: https://t.me/joinchat/N77M7xRvYUd403DgfE4TWw

If you want to support my channel, please join as a member to get additional benefits such as Data Science materials, members-only live streams, and more

https://www.youtube.com/channe....l/UCNU_lfiiWBdtULKOw

Please subscribe to my other channel too

https://www.youtube.com/channe....l/UCjWY5hREA6FFYrthD

Connect with me here:

Twitter: https://twitter.com/Krishnaik06

Facebook: https://www.facebook.com/krishnaik06

instagram: https://www.instagram.com/krishnaik06

TechReview-TR10-JD_06

Our Popular courses:-

Fullstack data science job guaranteed program:- bit.ly/3JronjT

Tech Neuron OTT platform for Education:- bit.ly/3KsS3ye

Affiliate Portal (Refer & Earn):-

https://affiliate.ineuron.ai/

Internship Portal:-

https://internship.ineuron.ai/

Website:- www.ineuron.ai

iNeuron Youtube Channel:-

https://www.youtube.com/channe....l/UCb1GdqUqArXMQ3RS8

This video is part of an online course that provides a comprehensive introduction to practical machine learning methods using MATLAB. In addition to short, engaging videos, the course contains interactive, in-browser MATLAB projects.

Complete course is available here: http://bit.ly/2Djmuc3

Learn more about using MATLAB for machine learning: http://bit.ly/2O9Sujp

Get a free product Trial: https://goo.gl/ZHFb5u

Learn more about MATLAB: https://goo.gl/8QV7ZZ

Learn more about Simulink: https://goo.gl/nqnbLe

See what's new in MATLAB and Simulink: https://goo.gl/pgGtod

© 2018 The MathWorks, Inc. MATLAB and Simulink are registered trademarks of The MathWorks, Inc.

See www.mathworks.com/trademarks for a list of additional trademarks. Other product or brand names may be trademarks or registered trademarks of their respective holders.

This is a clip from a conversation with Bjarne Stroustrup from Nov 2019. New full episodes are released once or twice a week and 1-2 new clips or a new non-podcast video is released on all other days. You can watch the full conversation here: https://www.youtube.com/watch?v=uTxRF5ag27A

(more links below)

Podcast full episodes playlist:

https://www.youtube.com/playli....st?list=PLrAXtmErZgO

Podcasts clips playlist:

https://www.youtube.com/playli....st?list=PLrAXtmErZgO

Podcast website:

https://lexfridman.com/ai

Podcast on Apple Podcasts (iTunes):

https://apple.co/2lwqZIr

Podcast on Spotify:

https://spoti.fi/2nEwCF8

Podcast RSS:

https://lexfridman.com/category/ai/feed/

Note: I select clips with insights from these much longer conversations with the hope of helping make these ideas more accessible and discoverable. Ultimately, this podcast is a small side hobby for me with the goal of sharing and discussing ideas. I did a poll and 92% of people either liked or loved the posting of daily clips, 2% were indifferent, and 6% hated it, some suggesting that I post them on a separate YouTube channel. I hear the 6% and partially agree, so am torn about the whole thing. I tried creating a separate clips channel but the YouTube algorithm makes it very difficult for that channel to grow. So for a little while, I'll keep posting clips on this channel. I ask for your patience and to see these clips as supporting the dissemination of knowledge contained in nuanced discussion. If you enjoy it, consider subscribing, sharing, and commenting.

Bjarne Stroustrup is the creator of C++, a programming language that after 34 years is still one of the most popular and powerful languages in the world. Its focus on fast, stable, robust code underlies many of the biggest systems in the world that we have come to rely on as a society. If you're watching this on YouTube, many of the critical back-end components of YouTube are written in C++. The same goes for Google, Facebook, Amazon, Twitter, most Microsoft applications, Adobe applications, most database systems, and most physical systems that operate in the real world, like cars, robots, and rockets that launch us into space and one day will land us on Mars.

Subscribe to this YouTube channel or connect on:

- Twitter: https://twitter.com/lexfridman

- LinkedIn: https://www.linkedin.com/in/lexfridman

- Facebook: https://www.facebook.com/lexfridman

- Instagram: https://www.instagram.com/lexfridman

- Medium: https://medium.com/@lexfridman

- Support on Patreon: https://www.patreon.com/lexfridman

Neural networks are the backbone of deep learning. In recent years, Bayesian neural networks have been gathering a lot of attention. Here we take a whistle-stop tour of the mathematics distinguishing Bayesian neural networks from the usual neural networks.
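
To give a flavour of the distinction, here is a minimal sketch that is not taken from the video. A usual network makes a prediction from one fixed set of weights, while a Bayesian network treats the weights as random variables and averages predictions over samples of them. The one-neuron model, the Gaussian weight distributions, and all the numbers are assumptions chosen only for illustration.

```python
import random
import statistics


def predict(weight: float, bias: float, x: float) -> float:
    """A one-neuron 'network' with no activation: y = w * x + b."""
    return weight * x + bias


x = 3.0

# Usual neural network: a single point estimate of the weights,
# so the same input always gives one number with no uncertainty attached.
point_prediction = predict(2.0, 0.5, x)

# Bayesian neural network: each weight has a distribution.
# Assumed here: independent Gaussians with hand-picked means and spreads.
WEIGHT_MEAN, WEIGHT_STD = 2.0, 0.3
BIAS_MEAN, BIAS_STD = 0.5, 0.1

rng = random.Random(0)  # fixed seed so the sketch is repeatable
samples = [
    predict(rng.gauss(WEIGHT_MEAN, WEIGHT_STD), rng.gauss(BIAS_MEAN, BIAS_STD), x)
    for _ in range(5000)
]

# Monte Carlo estimate of the predictive distribution for this input.
predictive_mean = statistics.fmean(samples)
predictive_std = statistics.stdev(samples)

print(f"point estimate:  {point_prediction:.2f}")
print(f"Bayesian output: {predictive_mean:.2f} +/- {predictive_std:.2f}")
```

The spread of the sampled predictions is what the Bayesian treatment adds: an estimate of how uncertain the model is about its output, not just the output itself.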

We're going to learn how to use deep learning to convert an image into the style of an artist that we choose. We'll go over the history of computer generated art, then dive into the details of how this process works and why deep learning does it so well.

Coding challenge for this video:

https://github.com/llSourcell/....How-to-Generate-Art-

Itai's winning code:

https://github.com/etai83/lstm_stock_prediction

Andreas' runner up code:

https://github.com/AndysDeepAb....stractions/How-to-Pr

More learning resources:

https://harishnarayanan.org/wr....iting/artistic-style

https://ml4a.github.io/ml4a/style_transfer/

http://genekogan.com/works/style-transfer/

https://arxiv.org/abs/1508.06576

https://jvns.ca/blog/2017/02/12/neural-style/

Style transfer apps:

http://www.pikazoapp.com/

http://deepart.io/

https://artisto.my.com/

https://prisma-ai.com/

Please subscribe! And like. And comment. That's what keeps me going.

Join us in the Wizards Slack channel:

http://wizards.herokuapp.com/

And please support me on Patreon:

https://www.patreon.com/user?u=3191693

Song at the beginning is called Everyday by Carly Comando

jurassic park inception visualization is from http://www.pyimagesearch.com/2....015/07/06/bat-countr

Follow me:

Twitter: https://twitter.com/sirajraval

Facebook: https://www.facebook.com/sirajology

Instagram: https://www.instagram.com/sirajraval/

Signup for my newsletter for exciting updates in the field of AI:

https://goo.gl/FZzJ5w

Hit the Join button above to sign up to become a member of my channel for access to exclusive content!

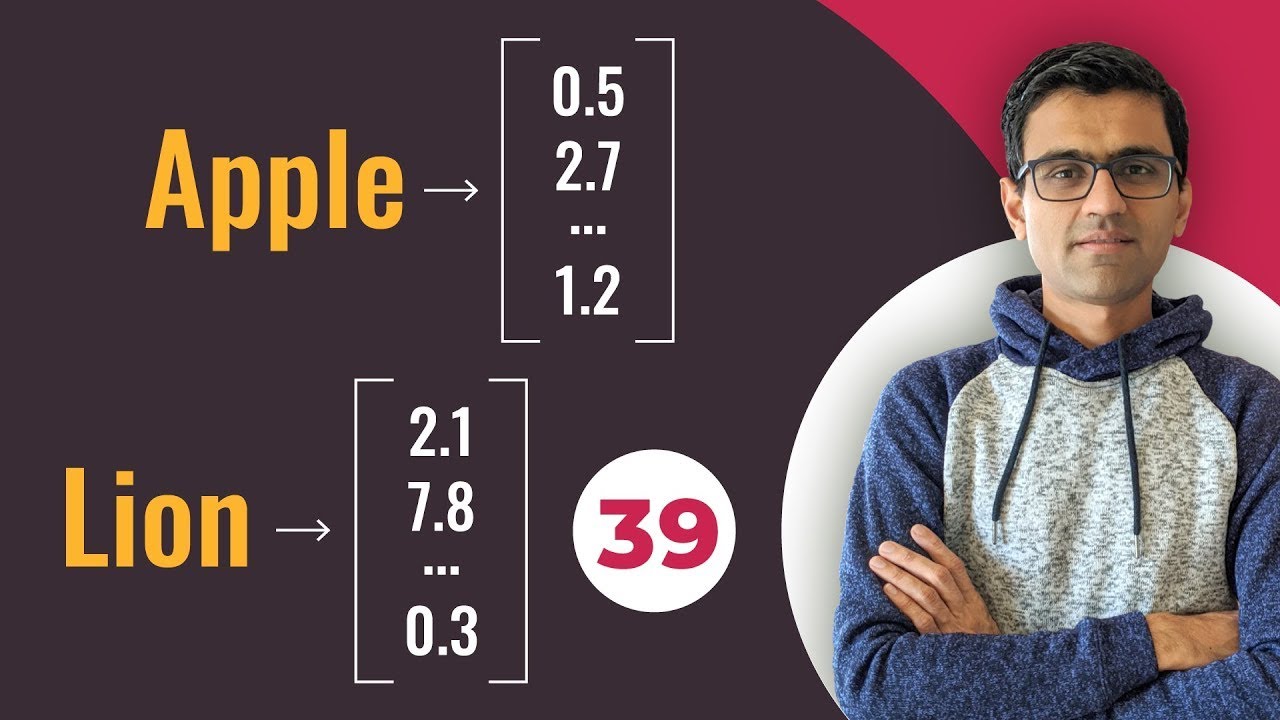

Machine learning models don't understand words. Words have to be converted to numbers before they are fed to an RNN or any other machine learning model. In this tutorial, we will look into various techniques for converting words to numbers. These techniques are:

1) Using unique numbers

2) One hot encoding

3) Word embeddings
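
The three techniques listed above can be sketched in plain Python as follows. This is only an illustration, not the code from the tutorial: the example sentence and the embedding size are assumptions, and the embedding values are random here, whereas in a real model they would be learned during training.

```python
import random

sentence = "the food was good the service was slow".split()

# 1) Unique numbers: give each distinct word its own integer id.
vocab = {}
for word in sentence:
    if word not in vocab:
        vocab[word] = len(vocab)
ids = [vocab[word] for word in sentence]


# 2) One-hot encoding: a vector as long as the vocabulary,
#    with a single 1 at the position of the word's id.
def one_hot(index: int, size: int) -> list:
    vector = [0] * size
    vector[index] = 1
    return vector


one_hot_vectors = [one_hot(i, len(vocab)) for i in ids]

# 3) Word embeddings: a short dense vector per word, looked up by id.
#    Random values stand in for weights that would normally be learned.
EMBEDDING_DIM = 4  # assumed size, chosen only for the example
rng = random.Random(0)
embedding_table = [
    [rng.uniform(-1.0, 1.0) for _ in range(EMBEDDING_DIM)] for _ in vocab
]
embedded = [embedding_table[i] for i in ids]

print(ids)                 # one integer per word
print(one_hot_vectors[1])  # one-hot vector for "food"
print(embedded[1])         # dense vector for "food"
```

One-hot vectors grow with the vocabulary and say nothing about how words relate to each other, while embeddings keep a fixed, small size regardless of vocabulary and can place similar words close together once trained.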

Deep learning playlist: https://www.youtube.com/playli....st?list=PLeo1K3hjS3u

Machine learning playlist : https://www.youtube.com/playli....st?list=PLeo1K3hjS3u

Do you want to learn technology from me? Check https://codebasics.io/ for my affordable video courses.

🌎 Website: https://www.codebasics.io/

🎥 Codebasics Hindi channel: https://www.youtube.com/channe....l/UCTmFBhuhMibVoSfYo

#️⃣ Social Media #️⃣

🔗 Discord: https://discord.gg/r42Kbuk

📸 Instagram: https://www.instagram.com/codebasicshub/

🔊 Facebook: https://www.facebook.com/codebasicshub

📱 Twitter: https://twitter.com/codebasicshub

📝 Linkedin (Personal): https://www.linkedin.com/in/dhavalsays/

📝 Linkedin (Codebasics): https://www.linkedin.com/company/codebasics/

❗❗ DISCLAIMER: All opinions expressed in this video are my own and not those of my employers.

Zebra’s Deep Learning OCR Tool on the VS Camera Lineup is an incredibly strong algorithm that works well on difficult-to-read, low-contrast characters. Setting up a job can be a tedious and time-consuming task, but with Aurora’s intuitive and user-friendly interface, it’s easy to set up and deploy, which saves time. Learn More: https://bit.ly/3BfJG5V