Despite its popularity, machine vision is not the only Deep Learning application. Deep nets have also started to take over text processing, beating traditional methods on accuracy in a growing number of tasks. They are also used extensively for cancer detection and medical imaging. When a data set has highly complex patterns, deep nets tend to be the best choice of model.

Demo URLs

Clarifai - http://www.clarifai.com

Metamind - https://www.metamind.io/language/twitter

As we have previously discussed, Deep Learning is used in many areas of machine vision. Facebook uses deep nets to detect faces from different angles, and the startup Clarifai uses these nets for object recognition. Other applications include scene parsing and vehicular vision for driverless cars.

Deep Learning TV on

Facebook: https://www.facebook.com/DeepLearningTV/

Twitter: https://twitter.com/deeplearningtv

Deep Nets are also starting to beat out other models in certain Natural Language Processing tasks like sentiment analysis, which can be seen with new tools like MetaMind. Recurrent nets can be used effectively in document classification and character-level text processing.

Deep Nets are even being used in the medical space. A Stanford team was able to use deep nets to identify 6,642 factors that help doctors better predict the chances of cancer survival. Researchers from IDSIA in Switzerland used a deep net to identify invasive breast cancer cells. In drug discovery, Merck hosted a deep learning challenge to predict the biological activity of molecules based on chemical structure.

In finance, deep nets are trained to make predictions based on market data streams, portfolio allocations, and risk profiles. In digital advertising, these nets are used to optimize the use of screen space and to cluster users in order to serve personalized ads. They are even used to detect fraud in real time and to segment customers for upselling and cross-selling in a sales environment.

What is your favorite deep learning application? Please comment and share your thoughts.

Credits

Nickey Pickorita (YouTube art) -

https://www.upwork.com/freelan....cers/~0147b8991909b2

Isabel Descutner (Voice) -

https://www.youtube.com/user/IsabelDescutner

Dan Partynski (Copy Editing) -

https://www.linkedin.com/in/danielpartynski

Marek Scibior (Prezi creator, Illustrator) -

http://brawuroweprezentacje.pl/

Jagannath Rajagopal (Creator, Producer and Director) -

https://ca.linkedin.com/in/jagannathrajagopal

At Mirakl, we empower marketplaces with Artificial Intelligence solutions. Catalog data is an extremely rich source of information about the products of e-commerce sellers and marketplaces, including images, descriptions, brands, prices and attributes (for example, size, gender, material or color). Such large volumes of data are well suited to training multimodal deep learning models and present several technical Machine Learning and MLOps challenges.

We will dive deep into two key use cases: deduplication and categorization of products. For categorization, the creation of quality multimodal embeddings plays a crucial role and is achieved by experimenting with transfer learning techniques on state-of-the-art models. Finding very similar or almost identical products among many millions can be a very difficult problem, and that is where our deduplication algorithm provides a fast and computationally efficient solution.
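To make the deduplication idea concrete, here is a minimal sketch of embedding-based near-duplicate detection. It is not Mirakl's algorithm, which this description does not spell out. It assumes each product has already been turned into an embedding vector, and it uses cosine similarity with a hypothetical threshold of 0.95. The product ids and vectors are made up for illustration.

```python
import math
from itertools import combinations


def cosine_similarity(a, b):
    """Cosine of the angle between two equal-length vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)


def find_near_duplicates(embeddings, threshold=0.95):
    """Return pairs of product ids whose embeddings point in nearly the same direction.

    `embeddings` maps a product id to its vector. The threshold is an
    assumed value chosen for illustration, not a recommended setting.
    """
    pairs = []
    # Brute-force comparison of every pair, in sorted id order.
    for (id_a, vec_a), (id_b, vec_b) in combinations(sorted(embeddings.items()), 2):
        if cosine_similarity(vec_a, vec_b) >= threshold:
            pairs.append((id_a, id_b))
    return pairs


# Toy embeddings (made up): two listings of the same shoe and one unrelated item.
products = {
    "shoe-red-42": [0.90, 0.10, 0.30],
    "shoe-red-42-copy": [0.88, 0.12, 0.31],
    "blender": [0.05, 0.95, 0.20],
}
duplicates = find_near_duplicates(products)
print(duplicates)
```

The pairwise loop is quadratic in the number of products, so it only serves to show the principle. At the scale of millions of products, some form of approximate nearest-neighbor search or blocking would be needed instead.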

Furthermore, we will show how we deal with large volumes of products using robust and efficient pipelines: Spark for distributed and parallel computing, TFRecords to stream and ingest data efficiently on multiple machines while avoiding memory issues, and MLflow for tracking the experiments and metrics of our models.

With so many alternatives available, why are neural nets used for Deep Learning? Neural nets excel at complex pattern recognition and they can be trained quickly with GPUs.

Historically, computers have only been useful for tasks that we can explain with a detailed list of instructions. As such, they tend to fail in applications where the task at hand is fuzzy, such as recognizing patterns. Neural networks fill this gap in our computational abilities by advancing machine perception; that is, they allow computers to start making complex judgements about environmental inputs. Most of the recent hype in the field of AI has been due to progress in the application of deep neural networks.

Neural nets tend to be too computationally expensive for data with simple patterns; in such cases you should use a model like Logistic Regression or an SVM. As the pattern complexity increases, neural nets start to outperform other machine learning methods. At the highest levels of pattern complexity – high-resolution images for example – neural nets with a small number of layers will require a number of nodes that grows exponentially with the number of unique patterns. Even then, the net would likely take excessive time to train, or simply would fail to produce accurate results.
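As a rough illustration of the point about simple patterns, the sketch below trains a logistic regression with plain batch gradient descent. The data sets, learning rate, and epoch count are all made-up assumptions. The first data set is linearly separable, which is the kind of simple pattern a linear model handles well. The second is XOR, a small pattern that no single linear boundary can fit.

```python
import math


def sigmoid(z):
    return 1.0 / (1.0 + math.exp(-z))


def train_logistic_regression(points, labels, lr=0.5, epochs=2000):
    """Fit weights and a bias with batch gradient descent on the log loss."""
    n_features = len(points[0])
    weights = [0.0] * n_features
    bias = 0.0
    for _ in range(epochs):
        grad_w = [0.0] * n_features
        grad_b = 0.0
        for x, y in zip(points, labels):
            # Prediction error for this sample drives the gradient.
            error = sigmoid(sum(w * xi for w, xi in zip(weights, x)) + bias) - y
            for j in range(n_features):
                grad_w[j] += error * x[j]
            grad_b += error
        weights = [w - lr * g / len(points) for w, g in zip(weights, grad_w)]
        bias -= lr * grad_b / len(points)
    return weights, bias


def predict(weights, bias, x):
    score = sum(w * xi for w, xi in zip(weights, x)) + bias
    return 1 if sigmoid(score) >= 0.5 else 0


# Simple pattern (toy data): class 1 whenever both coordinates are large.
points = [[0.0, 0.0], [0.2, 0.1], [0.1, 0.4], [0.9, 0.8], [1.0, 0.6], [0.7, 1.0]]
labels = [0, 0, 0, 1, 1, 1]
weights, bias = train_logistic_regression(points, labels)
predictions = [predict(weights, bias, x) for x in points]

# XOR: not linearly separable, so the same model cannot fit it.
xor_points = [[0.0, 0.0], [0.0, 1.0], [1.0, 0.0], [1.0, 1.0]]
xor_labels = [0, 1, 1, 0]
xor_weights, xor_bias = train_logistic_regression(xor_points, xor_labels)
xor_predictions = [predict(xor_weights, xor_bias, x) for x in xor_points]

print(predictions == labels)          # separable data is fit exactly
print(xor_predictions == xor_labels)  # XOR is not
```

On the separable data the linear model recovers every label, while on XOR it cannot, however long it trains. A net with a hidden layer can represent XOR, which is a small-scale version of the trade-off described above.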

Have you ever had this problem in your own machine learning projects? Please comment.

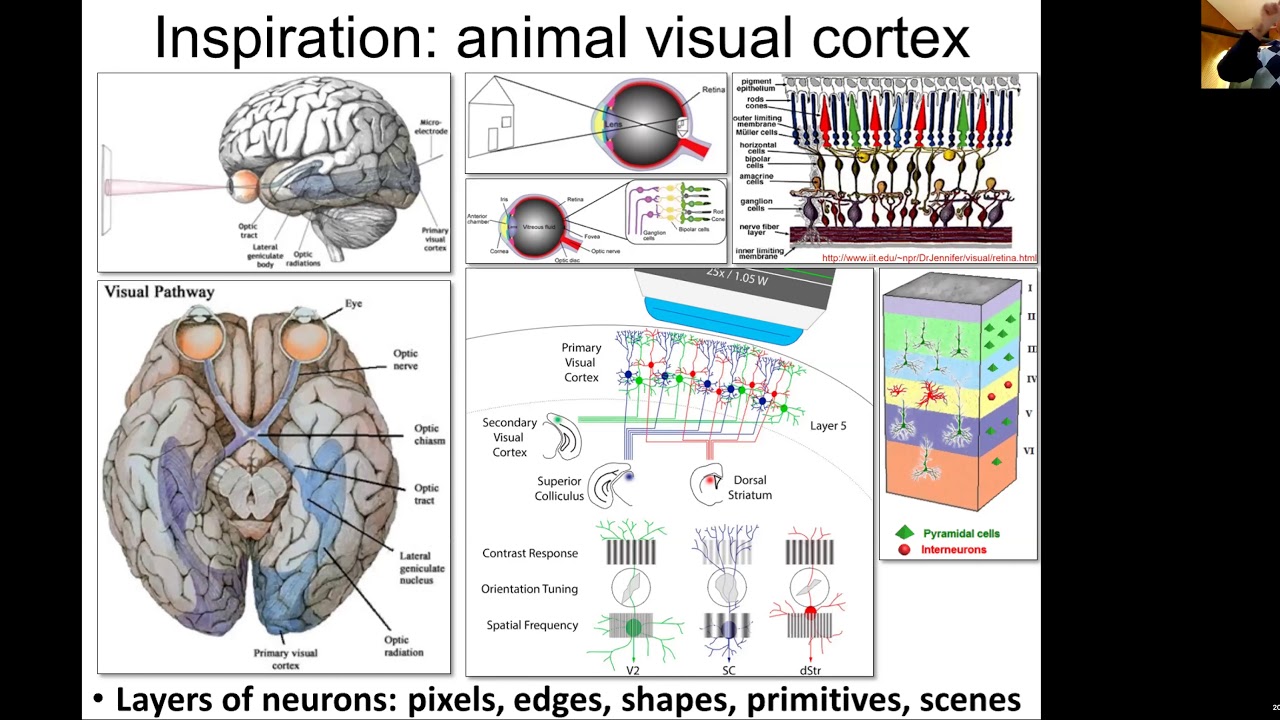

As a result, deep nets are essentially the only practical choice for highly complex patterns such as the human face. The reason is that different parts of the net can detect simpler patterns and then combine them to detect a more complex pattern. For example, a convolutional net can detect simple features like edges, which can be combined to form facial features like the nose and eyes, which are then combined to form a face (Credit: Andrew Ng). Deep nets can do this accurately; in fact, a deep net from Google beat a human for the first time at pattern recognition.
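The first step of that hierarchy, detecting edges, can be sketched as a single 2D convolution. The example below applies a hand-written Sobel-style kernel to a tiny made-up image. This is an illustration only: in a trained convolutional net the kernels are learned from data rather than written by hand, and the helper name `convolve2d` is just a label chosen for this sketch.

```python
def convolve2d(image, kernel):
    """Valid (no padding) 2D cross-correlation, the operation used in conv layers."""
    k_rows, k_cols = len(kernel), len(kernel[0])
    out_rows = len(image) - k_rows + 1
    out_cols = len(image[0]) - k_cols + 1
    output = []
    for r in range(out_rows):
        row = []
        for c in range(out_cols):
            total = 0
            # Multiply the kernel with the image patch under it and sum.
            for i in range(k_rows):
                for j in range(k_cols):
                    total += image[r + i][c + j] * kernel[i][j]
            row.append(total)
        output.append(row)
    return output


# Sobel-style kernel that responds to dark-to-bright transitions from left to right.
vertical_edge_kernel = [
    [-1, 0, 1],
    [-2, 0, 2],
    [-1, 0, 1],
]

# 4x6 toy image: dark left half (0) and bright right half (1).
image = [
    [0, 0, 0, 1, 1, 1],
    [0, 0, 0, 1, 1, 1],
    [0, 0, 0, 1, 1, 1],
    [0, 0, 0, 1, 1, 1],
]

feature_map = convolve2d(image, vertical_edge_kernel)
for row in feature_map:
    print(row)  # each row is [0, 4, 4, 0]
```

The output is zero over the flat regions and positive only where the window straddles the boundary, so the feature map marks where the edge is. Later layers of a deep net would take maps like this as input and combine them into larger shapes.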

However, the strength of deep nets comes with an important cost: computational power. The resources required to train a deep net effectively were prohibitive in the early years of neural networks. Thanks to advances in high-performance GPUs over the last decade, this is no longer an issue. Complex nets that once would have taken months to train now take only days.

Credits:

Nickey Pickorita (YouTube art) -

https://www.upwork.com/freelan....cers/~0147b8991909b2

Isabel Descutner (Voice) -

https://www.youtube.com/user/IsabelDescutner

Dan Partynski (Copy Editing) -

https://www.linkedin.com/in/danielpartynski

Jagannath Rajagopal (Creator, Producer and Director) -

https://ca.linkedin.com/in/jagannathrajagopal