Latest videos

For more information about Stanford’s Artificial Intelligence professional and graduate programs, visit: https://stanford.io/3Depe55

Professor Christopher Manning, Stanford University

http://onlinehub.stanford.edu/

Professor Christopher Manning

Thomas M. Siebel Professor in Machine Learning, Professor of Linguistics and of Computer Science

Director, Stanford Artificial Intelligence Laboratory (SAIL)

To follow along with the course schedule and syllabus, visit: http://web.stanford.edu/class/....cs224n/index.html#sc

For more information about Stanford's Artificial Intelligence professional and graduate programs, visit: https://stanford.io/ai

Associate Professor Percy Liang

Associate Professor of Computer Science and Statistics (courtesy)

https://profiles.stanford.edu/percy-liang

Assistant Professor Dorsa Sadigh

Assistant Professor in the Computer Science and Electrical Engineering Departments

https://profiles.stanford.edu/dorsa-sadigh

To follow along with the course schedule and syllabus, visit:

https://stanford-cs221.github.....io/autumn2021/#sched

0:00 Introduction

0:06 Machine learning: differentiable programming

0:47 Deep learning models

1:24 Feedforward neural networks

4:23 Representing images

5:18 Convolutional neural networks

10:29 Representing natural language

11:51 Embedding tokens

13:01 Representing sequences

14:17 Recurrent neural networks

17:38 Collapsing to a single vector

19:33 Long-range dependencies

19:59 Attention mechanism

26:17 Layer normalization and residual connections

28:38 Transformer

31:30 Generating tokens

32:36 Generating sequences

33:46 Sequence-to-sequence models

35:22 Summary: FeedForward, Conv, MaxPool

#artificialintelligence #machinelearning

For more information about Stanford’s Artificial Intelligence professional and graduate programs, visit: https://stanford.io/3Dev1Yj

Professor Christopher Manning

Thomas M. Siebel Professor in Machine Learning, Professor of Linguistics and of Computer Science

Director, Stanford Artificial Intelligence Laboratory (SAIL)

To follow along with the course schedule and syllabus, visit: http://web.stanford.edu/class/....cs224n/index.html#sc

Lecture by Professor Andrew Ng for Machine Learning (CS 229) in the Stanford Computer Science department. Professor Ng lectures on expectation-maximization (EM) in the context of the mixture of Gaussians and naive Bayes models, as well as factor analysis (with a digression).

This course provides a broad introduction to machine learning and statistical pattern recognition. Topics include supervised learning, unsupervised learning, learning theory, reinforcement learning and adaptive control. Recent applications of machine learning, such as to robotic control, data mining, autonomous navigation, bioinformatics, speech recognition, and text and web data processing are also discussed.

Complete Playlist for the Course:

http://www.youtube.com/view_pl....ay_list?p=A89DCFA6AD

CS 229 Course Website:

http://www.stanford.edu/class/cs229/

Stanford University:

http://www.stanford.edu/

Stanford University Channel on YouTube:

http://www.youtube.com/stanford

Lecture by Professor Andrew Ng for Machine Learning (CS 229) in the Stanford Computer Science department. Professor Ng discusses state-action rewards, linear dynamical systems in the context of linear quadratic regulation (LQR), models, the Riccati equation, and finite-horizon MDPs.


For more information about Stanford’s Artificial Intelligence professional and graduate programs, visit: https://stanford.io/30l2Kkw

Professor Christopher Manning, Stanford University

http://onlinehub.stanford.edu/

Professor Christopher Manning

Thomas M. Siebel Professor in Machine Learning, Professor of Linguistics and of Computer Science

Director, Stanford Artificial Intelligence Laboratory (SAIL)

To follow along with the course schedule and syllabus, visit: http://web.stanford.edu/class/....cs224n/index.html#sc

0:00 Introduction

0:23 Announcements

2:00 What is Coreference Resolution?

12:04 Applications

16:40 Coreference Resolution in Two Steps

19:39 Mention Detection: Not so Simple

22:19 Can we avoid a pipelined system?

23:39 On to Coreference! First, some linguistics

26:11 Anaphora vs. Coreference

27:59 Anaphora vs. Coreference

29:24 Anaphora vs. Cataphora

32:33 Four kinds of Coreference Models

34:43 Hobbs Algorithm Example

40:07 Knowledge-based Pronominal Coreference

45:01 Hobbs' algorithm: commentary

46:06 Coreference Models: Mention Pair

47:33 Mention Pair Training

48:05 Mention Pair Test Time

52:01 Coreference Models: Mention Ranking

54:57 Coreference Models: Training

56:05 Mention Ranking Models: Test Time

56:49 Non-Neural Coref Model: Features

For more information about Stanford’s Artificial Intelligence professional and graduate programs, visit: https://stanford.io/3Cfhyya

Professor Christopher Manning & PhD Candidate Abigail See, Stanford University

http://onlinehub.stanford.edu/

Professor Christopher Manning

Thomas M. Siebel Professor in Machine Learning, Professor of Linguistics and of Computer Science

Director, Stanford Artificial Intelligence Laboratory (SAIL)

To follow along with the course schedule and syllabus, visit: http://web.stanford.edu/class/....cs224n/index.html#sc

0:00 Introduction

0:27 Announcements

1:09 Overview

2:47 Natural Language Generation (NLG)

5:00 Recap: training a (conditional) RNN-LM

6:21 Recap: decoding algorithms

6:47 Recap: greedy decoding

7:32 Recap: beam search decoding

8:57 Aside: Do the hosts in Westworld use beam search?

10:07 What's the effect of changing beam size k?

13:16 Effect of beam size in chitchat dialogue

15:58 Sampling-based decoding

18:22 Softmax temperature

21:03 Decoding algorithms: in summary

22:55 Summarization: task definition

27:04 Summarization: two main strategies

28:20 Pre-neural summarization

31:00 Summarization evaluation: ROUGE

35:20 Neural summarization (2015-present)

38:53 Neural summarization: copy mechanisms

42:58 Neural summarization: better content selection

43:47 Bottom-up summarization

45:46 Neural summarization via Reinforcement Learning

49:36 Pre- and post-neural dialogue

50:56 Seq2seq-based dialogue

52:36 Irrelevant response problem

54:19 Genericness / boring response problem

56:38 Repetition problem

59:12 Storytelling

59:35 Generating a story from an image

Precision Health is a fundamental shift toward more proactive and personalized health care that empowers people to lead healthy lives. In this spirit of possibility and promise, Stanford Medicine hosted this conference for the sixth year in 2018, bringing together international researchers and leaders from academia, health care, government, and industry to develop actionable steps for improving human health.

Panelists for a discussion of the meaningful use of deep learning and machine learning in medicine included:

* Katherine Chou, Google

* Kevin Lyman, Enlitic

* Jenna Wiens, University of Michigan

* Natalie Pageler, Stanford

Moderator: Serena Yeung, Stanford

For more information, visit: https://bigdata.stanford.edu/

Watch the original lecture here: https://www.youtube.com/watch?v=UzxYlbK2c7E

Lecture by Professor Andrew Ng for Machine Learning (CS 229) in the Stanford Computer Science department. Professor Ng provides an overview of the course in this introductory meeting.


For more information about Stanford’s Artificial Intelligence professional and graduate programs, visit: https://stanford.io/3Cbr1GI

Professor Christopher Manning & Guest Speaker Kevin Clark, Stanford University

http://onlinehub.stanford.edu/

Professor Christopher Manning

Thomas M. Siebel Professor in Machine Learning, Professor of Linguistics and of Computer Science

Director, Stanford Artificial Intelligence Laboratory (SAIL)

To follow along with the course schedule and syllabus, visit: http://web.stanford.edu/class/....cs224n/index.html#sc

0:00 Introduction

0:56 Deep Learning for NLP 5 years ago

1:34 Future of Deep Learning + NLP

3:32 Why has deep learning been so successful recently?

5:03 Big deep learning successes

6:20 NLP Datasets

8:12 Machine Translation Data

9:54 Pre-Training

12:10 Self-Training

18:27 Large-Scale Back-Translation

20:03 Unsupervised Word Translation

27:43 Unsupervised Neural Machine Translation

31:14 Why Does This Work?

33:46 Unsupervised Machine Translation

34:49 Attribute Transfer

38:10 Cross-Lingual BERT

43:03 Huge Models in Computer Vision

44:29 Training Huge Models

47:41 So What Can GPT-2 Do?

50:04 GPT-2 Results

51:16 How can GPT-2 be doing translation?

52:12 GPT-2 Question Answering

53:31 What happens as models get even bigger?

54:24 GPT-2 Reaction

59:26 High-Impact Decisions

Dr. Matthew Lungren presents labeling work that uses deep learning at the 2018 NIH AI Radiology workshop.

For more information about Stanford’s Artificial Intelligence professional and graduate programs, visit: https://stanford.io/3qAoAeO

Professor Christopher Manning

Thomas M. Siebel Professor in Machine Learning, Professor of Linguistics and of Computer Science

Director, Stanford Artificial Intelligence Laboratory (SAIL)

To follow along with the course schedule and syllabus, visit: http://web.stanford.edu/class/....cs224n/index.html#sc

For more information about Stanford’s Artificial Intelligence professional and graduate programs, visit: https://stanford.io/3BcmeEA

Jure Leskovec

Computer Science, PhD

Having defined a GNN layer, the next design step is how to stack GNN layers together. To motivate different ways of stacking GNN layers, we first introduce the issue of over-smoothing: as layers are added, all node embeddings converge to the same value, which prevents GNNs from learning meaningful node embeddings. We learn two lessons from the problem of over-smoothing: (1) we should be cautious when adding GNN layers; (2) we can add skip connections in GNNs to alleviate over-smoothing. When the number of GNN layers is small, we can enhance the expressiveness of the GNN by making the message and aggregation computations multi-layer, or by adding pre-processing and post-processing layers to the GNN.
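The two lessons above can be illustrated with a small, hypothetical experiment. This is a minimal sketch under stated assumptions, not the course's implementation. It uses scalar node features and a parameter-free mean aggregation over each node and its neighbors, with no learned weights or nonlinearities. For the skip connection, it mixes each layer's output with the input features `h0` using a hypothetical weight `alpha`. This is an initial-residual variant similar to the APPNP propagation rule; the lecture may use a different form, such as adding the previous layer's embedding. With `alpha=0.0`, stacking many layers drives every node toward the same value. With `alpha>0`, the nodes stay distinguishable.

```python
# Minimal sketch (assumptions: scalar features, parameter-free mean
# aggregation, no learned weights or nonlinearities). All names are
# illustrative, not from the course materials.


def mean_aggregate(h, neighbors):
    """One message-passing layer: each node averages its own value
    with its neighbors' values (mean aggregation with a self-loop)."""
    return [
        (h[v] + sum(h[u] for u in neighbors[v])) / (1 + len(neighbors[v]))
        for v in range(len(h))
    ]


def stack_layers(h0, neighbors, num_layers, alpha=0.0):
    """Apply num_layers aggregation layers in sequence.

    alpha is a hypothetical skip-connection weight: each layer's output is
    mixed with the input features h0. alpha=0.0 means plain stacking.
    """
    h = list(h0)
    for _ in range(num_layers):
        agg = mean_aggregate(h, neighbors)
        h = [(1 - alpha) * a + alpha * x for a, x in zip(agg, h0)]
    return h


def spread(h):
    """Gap between the largest and smallest node value (0 = all nodes identical)."""
    return max(h) - min(h)


if __name__ == "__main__":
    # Path graph 0 - 1 - 2 - 3, stored as adjacency lists.
    neighbors = [[1], [0, 2], [1, 3], [2]]
    h0 = [1.0, 0.0, 0.0, 0.0]  # only node 0 starts with a nonzero feature

    for layers in (1, 5, 50):
        plain = stack_layers(h0, neighbors, layers)
        skip = stack_layers(h0, neighbors, layers, alpha=0.2)
        print(f"{layers:>2} layers: spread without skip = {spread(plain):.6f}, "
              f"with skip = {spread(skip):.6f}")
```

In this toy setting, the plain stack approaches a constant vector as depth grows, which is the over-smoothing behavior described above. The skip-connected version instead converges to a fixed point that still depends on each node's position relative to node 0.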

To follow along with the course schedule and syllabus, visit:

http://web.stanford.edu/class/cs224w/

For more information about Stanford's Artificial Intelligence professional and graduate programs, visit: https://stanford.io/2ZB72nu

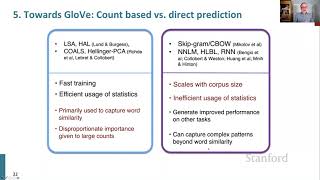

Lecture 2: Word Vectors, Word Senses, and Neural Network Classifiers

1. Course organization (2 mins)

2. Finish looking at word vectors and word2vec (13 mins)

3. Can we capture the essence of word meaning more effectively by counting? (8 mins)

4. The GloVe model of word vectors (8 mins)

5. Evaluating word vectors (14 mins)

6. Word senses (8 mins)

7. Review of classification and how neural nets differ (8 mins)

8. Introducing neural networks (14 mins)

To learn more about this course, visit: https://online.stanford.edu/co....urses/cs224n-natural

To follow along with the course schedule and syllabus, visit: http://web.stanford.edu/class/cs224n/

Professor Christopher Manning

Thomas M. Siebel Professor in Machine Learning, Professor of Linguistics and of Computer Science

Director, Stanford Artificial Intelligence Laboratory (SAIL)

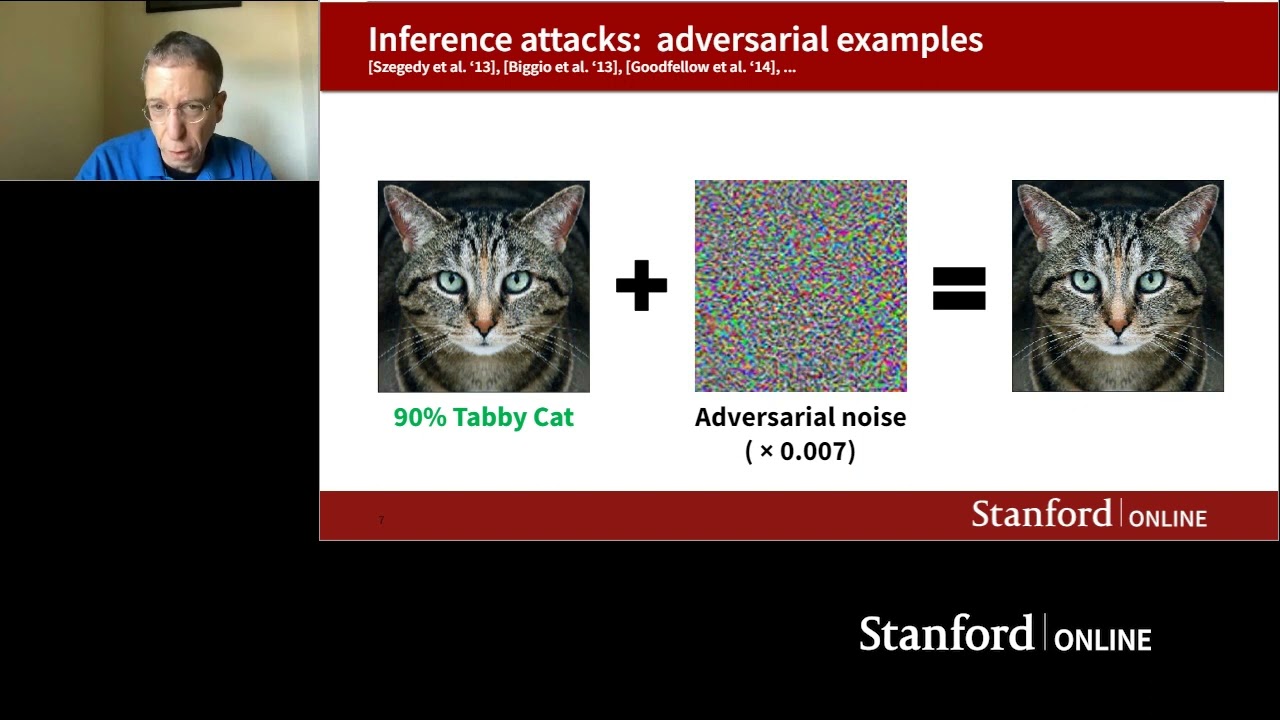

In this webinar, Professor Dan Boneh discusses recent work at the intersection of cybersecurity and machine learning. Specifically, he explores an area known as “adversarial machine learning,” which studies how stable machine learning models are in the presence of adversarial behavior.

For more information about Stanford’s Artificial Intelligence professional and graduate programs, visit: https://stanford.io/2Z3aQ0f

#artificialintelligence #machinelearning

Lecture by Professor Andrew Ng for Machine Learning (CS 229) in the Stanford Computer Science department. Professor Ng lectures on the debugging process, linear quadratic regulation, Kalman filters, and linear quadratic Gaussian (LQG) control in the context of reinforcement learning.
