Latest videos

Improving Language Understanding by Generative Pre-Training

Course Materials: https://github.com/maziarraiss....i/Applied-Deep-Learn

In this short Python tutorial, you will learn how to build your own Gradio app for text generation (natural language generation) using GPT-J-6B, which is available through Hugging Face Transformers. EleutherAI's GPT-J-6B is an open-source alternative to OpenAI's GPT-3. Using the model from the Hugging Face Model Hub, we'll learn how to build a full-stack web app with Gradio. In just 6 lines of Python code, you'd have a world-class text generation app up and running.

GPT-J-6B on Hugging Face - https://huggingface.co/EleutherAI/gpt-j-6B

GPT-J-6B Web App on HF Spaces - https://huggingface.co/spaces/akhaliq/gpt-j-6B

Gradio Docs - https://gradio.app/docs#load

Gradio Hosted - https://gradio.app/introducing-hosted

Courtesy: Ahsen Khaliq

Previous Video on GPT-J and AI Blog Writing:

https://youtu.be/fnvQ8qQM6NU

GPT-2, the language model that shocked the world with its entirely fictitious story about unicorns inhabiting a secret South American valley. Rob Miles explains.

More on GPT-2: Coming Soon

More from Rob Miles: http://bit.ly/Rob_Miles_YouTube

Thanks to Nottingham Hackspace for providing the filming location: http://bit.ly/notthack

https://www.facebook.com/computerphile

https://twitter.com/computer_phile

This video was filmed and edited by Sean Riley.

Computer Science at the University of Nottingham: https://bit.ly/nottscomputer

Computerphile is a sister project to Brady Haran's Numberphile. More at http://www.bradyharan.com

We are going to talk about the internal workings of ChatGPT and the fundamental concepts it relies on: Language Models, Transformer Neural Networks, GPT models and Reinforcement Learning.

ABOUT ME

⭕ Subscribe: https://www.youtube.com/c/Code....Emporium?sub_confirm

📚 Medium Blog: https://medium.com/@dataemporium

💻 Github: https://github.com/ajhalthor

👔 LinkedIn: https://www.linkedin.com/in/ajay-halthor-477974bb/

Transformer Neural Networks: https://www.youtube.com/watch?v=TQQlZhbC5ps

RESOURCES

[1] ChatGPT blog: https://openai.com/blog/chatgpt/

[2] Instruct GPT which is the model ChatGPT was modeled after: https://arxiv.org/pdf/2203.02155.pdf

[3] Proximal Policy Optimization is how ChatGPT makes use of human rankings to update model parameters and make it more "safe" and "truthful": https://openai.com/blog/openai-baselines-ppo/

[4] Here is a paper that shows how Reinforcement learning through human feedback actually helps: https://arxiv.org/pdf/2009.01325.pdf

[5] Every timestep, a subword token is generated. Here is some more information on this process with BPE: https://towardsdatascience.com..../byte-pair-encoding-

[6] Basic Concepts in Reinforcement Learning: https://www.baeldung.com/cs/ml....-policy-reinforcemen

[7] Why Does GPT-3 write non-sensical stuff that sounds legit? https://www.alignmentforum.org..../posts/BgoKdAzogxmgk

[8] What is GPT-3.5? https://beta.openai.com/docs/m....odel-index-for-resea

MATH COURSES (7 day free trial)

📕 Mathematics for Machine Learning: https://imp.i384100.net/MathML

📕 Calculus: https://imp.i384100.net/Calculus

📕 Statistics for Data Science: https://imp.i384100.net/AdvancedStatistics

📕 Bayesian Statistics: https://imp.i384100.net/BayesianStatistics

📕 Linear Algebra: https://imp.i384100.net/LinearAlgebra

📕 Probability: https://imp.i384100.net/Probability

OTHER RELATED COURSES (7 day free trial)

📕 ⭐ Deep Learning Specialization: https://imp.i384100.net/Deep-Learning

📕 Python for Everybody: https://imp.i384100.net/python

📕 MLOps Course: https://imp.i384100.net/MLOps

📕 Natural Language Processing (NLP): https://imp.i384100.net/NLP

📕 Machine Learning in Production: https://imp.i384100.net/MLProduction

📕 Data Science Specialization: https://imp.i384100.net/DataScience

📕 Tensorflow: https://imp.i384100.net/Tensorflow

IMAGE RESOURCES

[1] Mouth I used in thumbnail: https://www.vecteezy.com/free-vector/cartoon-mouth

What lies behind the training of AIs like GPT-3, Alphafold 2 or DALL-E? What makes their neural networks special? Transformers are the Deep Learning architecture that has delivered the best performance in recent years. But why? What makes them so special? The answer lies in how highly parallelizable their architecture is, which lets them get the most out of multi-core processors. But this comes at a cost: if we do nothing, Transformers would be unable to understand the order of the data we train them on. Hence the importance of solutions such as Positional Encodings. Let's see how they work!
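As a rough illustration of the idea, here is a minimal sketch of the sinusoidal positional encoding from "Attention Is All You Need". It is not taken from the video; the function name `positional_encoding` and the small sizes are assumptions chosen for readability. Each position gets a distinct vector that can be added to its token embedding, so a model that processes all tokens in parallel can still tell them apart by position.

```python
import math

def positional_encoding(num_positions, d_model):
    """Build a num_positions x d_model table of sinusoidal position vectors."""
    table = []
    for pos in range(num_positions):
        row = []
        for i in range(d_model):
            # Dimensions 2k and 2k+1 share one frequency: 1 / 10000^(2k / d_model).
            angle = pos / (10000 ** ((2 * (i // 2)) / d_model))
            # Even dimensions use sine, odd dimensions use cosine.
            row.append(math.sin(angle) if i % 2 == 0 else math.cos(angle))
        table.append(row)
    return table

# Hypothetical sizes for illustration only: 4 positions, 8 dimensions.
pe = positional_encoding(num_positions=4, d_model=8)
for pos, row in enumerate(pe):
    print(pos, [round(v, 3) for v in row])
```

Because every row is different, adding row `pos` to the embedding at position `pos` injects order information without giving up parallel processing.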

--- 📣 IMPORTANT! ---

► Register for Samsung Dev Day and don't miss my talk!

https://bit.ly/SDD2021Agenda - November 18, 18:30

--- INTERESTING LINKS! ---

► Introduction to NLP and Transformers series (DotCSV)

Part 1 - https://youtu.be/Tg1MjMIVArc

Part 2 - https://youtu.be/RkYuH_K7Fx4

Part 3 - https://youtu.be/aL-EmKuB078

► A more detailed explanation of Positional Encoding:

https://kazemnejad.com/blog/tr....ansformer_architectu

--- MORE DOTCSV! ----

📣 NotCSV - Secondary channel!

https://www.youtube.com/c/notcsv

💸 Patreon : https://www.patreon.com/dotcsv

👓 Facebook : https://www.facebook.com/AI.dotCSV/

👾 Twitch!!! : https://www.twitch.tv/dotcsv

🐥 Twitter : https://twitter.com/dotCSV

📸 Instagram : https://www.instagram.com/dotcsv/

-- MORE SCIENCE! ---

🔬 This channel is part of the SCENIO science outreach network. If you want to discover other fantastic outreach projects, go here:

http://scenio.es/colaboradores

What will the future of AI programming look like with tools like ChatGPT and GitHub Copilot? Let's take a look at how machine learning could change the daily lives of developers in the near future.

#ai #tech #TheCodeReport

💬 Chat with Me on Discord

https://discord.gg/fireship

🔗 Resources

ChatGPT Demo https://openai.com/blog/chatgpt

Codex CLI https://github.com/microsoft/Codex-CLI

Tech Trends to Watch in 2023 https://youtu.be/1v_TEnpqHXE

🔥 Get More Content - Upgrade to PRO

Upgrade at https://fireship.io/pro

Use code YT25 for 25% off PRO access

🎨 My Editor Settings

- Atom One Dark

- vscode-icons

- Fira Code Font

🔖 Topics Covered

- How will ChatGPT affect programmers?

- Is AI going to replace software engineers?

- The future of AI programming tools?

- Universal AI programming language

- Using AI from the command line

Join My Weekly Newsletter and Learn How to Use Chat GPT in Your Everyday Life: https://bit.ly/aiadvantagenewsletter

A quick beginner's guide on how to start using Chat GPT by OpenAI.

https://chat.openai.com/

---------------------------------------------------------------------------------

Discord: https://discord.gg/zrsGvwJ7RZ

Twitter: https://twitter.com/igorpogany

---------------------------------------------------------------------------------

VIDEO EQUIPMENT I USE:

https://kit.co/igor_p/igor-s-video-equipment

---------------------------------------------------------------------------------

I’m NOT a financial adviser. These are only my own personal and speculative opinions. This channel is purely for entertainment purposes only!

---------------------------------------------------------------------------------

#chatgpt #howtousechatgpt #chatbotopenai

google colab link

https://colab.research.google.....com/drive/1xyaAMav_g

🤗 Transformers (formerly known as pytorch-transformers and pytorch-pretrained-bert) provides general-purpose architectures (BERT, GPT-2, RoBERTa, XLM, DistilBert, XLNet…) for Natural Language Understanding (NLU) and Natural Language Generation (NLG) with 32+ pretrained models in 100+ languages and deep interoperability between Jax, PyTorch and TensorFlow.

-----------------------------------------------------------------------------------------------------------------------

⭐ Kite is a free AI-powered coding assistant that will help you code faster and smarter. The Kite plugin integrates with all the top editors and IDEs to give you smart completions and documentation while you’re typing. I've been using Kite for a few months and I love it! https://www.kite.com/get-kite/?utm_medium=referral&utm_source=youtube&utm_campaign=krishnaik&utm_content=description-only

Subscribe to my vlogging channel

https://www.youtube.com/channe....l/UCjWY5hREA6FFYrthD

Please donate if you want to support the channel through GPay UPID,

Gpay: krishnaik06@okicici

Telegram link: https://t.me/joinchat/N77M7xRvYUd403DgfE4TWw

Please join as a member in my channel to get additional benefits like materials in Data Science, live streaming for Members and many more

https://www.youtube.com/channe....l/UCNU_lfiiWBdtULKOw

Connect with me here:

Twitter: https://twitter.com/Krishnaik06

Facebook: https://www.facebook.com/krishnaik06

instagram: https://www.instagram.com/krishnaik06

CS596 Machine Learning, Spring 2021

Yang Xu, Assistant Professor of Computer Science

College of Sciences

San Diego State University

Website: clcs.sdsu.edu

Easy natural language generation with Transformers and PyTorch. We apply OpenAI's GPT-2 model to generate text in just a few lines of Python code.

Language generation is one of those natural language tasks that can really produce an incredible feeling of awe at how far the fields of machine learning and artificial intelligence have come.

GPT-1, 2, and 3 are OpenAI’s top language models — well known for their ability to produce incredibly natural, coherent, and genuinely interesting language.

In this article, we will take a small snippet of text and learn how to feed that into a pre-trained GPT-2 model using PyTorch and Transformers to produce high-quality language generation in just eight lines of code. We cover:

PyTorch and Transformers

- Data

Building the Model

- Initialization

- Tokenization

- Generation

- Decoding

Results
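The four model-building steps in the outline above (initialization, tokenization, generation, decoding) can be sketched roughly as follows. This is not the article's own code: the helper names `load_gpt2` and `generate_text`, the `gpt2` checkpoint name, and the sampling settings are assumptions for illustration. It assumes the `transformers` and `torch` packages are installed, and the first call to `load_gpt2` downloads the model weights.

```python
def load_gpt2(model_name="gpt2"):
    """Initialization: load a pre-trained GPT-2 tokenizer and model."""
    # Imported lazily so the helpers below can be read and tested without the library.
    from transformers import GPT2LMHeadModel, GPT2Tokenizer
    tokenizer = GPT2Tokenizer.from_pretrained(model_name)
    model = GPT2LMHeadModel.from_pretrained(model_name)
    return tokenizer, model

def generate_text(prompt, tokenizer, model, max_length=200):
    """Run tokenization, generation, and decoding for one prompt."""
    # Tokenization: turn the prompt into a PyTorch tensor of token IDs.
    input_ids = tokenizer.encode(prompt, return_tensors="pt")
    # Generation: sample a continuation of up to max_length tokens in total.
    output_ids = model.generate(
        input_ids,
        max_length=max_length,
        do_sample=True,
        pad_token_id=tokenizer.eos_token_id,  # GPT-2 defines no pad token of its own
    )
    # Decoding: map the generated token IDs back to text.
    return tokenizer.decode(output_ids[0], skip_special_tokens=True)

# Example usage (downloads the weights on first run):
# tokenizer, model = load_gpt2()
# print(generate_text("The future of language models is", tokenizer, model))
```

Because `do_sample=True` draws tokens at random, each run produces a different continuation; dropping it falls back to greedy decoding, which is deterministic but tends to repeat itself.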

🤖 70% Discount on the NLP With Transformers in Python course:

https://bit.ly/3DFvvY5

Medium Article:

https://towardsdatascience.com..../text-generation-wit

Friend Link (free access):

https://towardsdatascience.com..../text-generation-wit?sk=930367d835f15abb4ef3164f7791e1b1

Thumbnail background by gustavo centurion on Unsplash

https://unsplash.com/photos/O6fs4ablxw8

What if you want to leverage the power of GPT-3, but don't want to wait for OpenAI to approve your application? Introducing GPT-Neo, an open-source Transformer model that resembles GPT-3 both in terms of design and performance. In this video, we'll discuss how to implement and train GPT-Neo with just a few lines of code.

We'll use Happy Transformer to implement GPT-Neo. Happy Transformer is an open-source Python library built on top of Hugging Face's Transformers library to allow programmers to implement state-of-the-art NLP models with just a few lines of code.

Thank you Eleuther AI for creating and training GPT-Neo.

New Course on how to create a web app to display GPT-Neo: https://www.udemy.com/course/n....lp-text-generation-p

Article: https://www.vennify.ai/gpt-neo-made-easy/

Colab: https://colab.research.google.....com/drive/1Bg3hnPOoy

Website: https://www.vennify.ai/

LinkedIn business: www.linkedin.com/company/69285475

LinkedIn personal: https://www.linkedin.com/in/ericfillion/

Support Happy Transformer by giving it a star: https://github.com/EricFillion/happy-transformer

Happy Transformer's website: https://happytransformer.com/

Read my latest content, support me, and get a full membership to Medium by signing up to Medium with this link: https://medium.com/@ericfillion/membership

Music: www.bensound.com

Control codes to steer your language models in the right direction.

✅ CTRL: A Conditional Transformer Language Model for Controllable Generation from Salesforce: https://arxiv.org/abs/1909.05858

✅ PPLM from Uber: https://eng.uber.com/pplm/

✅ My new Artificial Intelligence Book is out: https://amzn.to/37kBr99

🔥 Contentyze, a content generation platform: https://contentyze.com/

#salesforce #machinelearning #transformer

This video explains the Transformer architecture in a very detailed way, including most math formulas in the paper, and the neural network operations behind it. The Transformer is the foundation of many powerful language models like BERT, GPT3, RoBERTa, XLNET, ELECTRA, T5. Understanding how it works in detail might help you modify, optimize, or improve it in the way you want.

Connect

Linkedin https://www.linkedin.com/in/xue-yong-fu-955723a6/

Twitter https://twitter.com/home

Email edwindeeplearning@gmail.com

0:00 - Intro

1:10 - Architecture overview

1:56 - Encoder

3:58 - Residual connection & layer normalization

6:59 - Decoder

11:14 - Attention mechanism

14:30 - Scaled dot-product attention

20:22 - Learned projection layers

26:32 - Multi-head attention

28:39 - Encoder-decoder attention

31:18 - Encoder self-attention

31:34 - Decoder self-attention

33:58 - Position-wise feedforward network

36:41 - Word embedding

39:34 - Positional encoding

47:37 - Why self-attention

What Is GPT-3 Series

https://www.youtube.com/playli....st?list=PLoS8jSwcU-c

Paper: Attention Is All You Need

https://arxiv.org/abs/1706.03762

Abstract

The dominant sequence transduction models are based on complex recurrent or convolutional neural networks that include an encoder and a decoder. The best performing models also connect the encoder and decoder through an attention mechanism. We propose a new simple network architecture, the Transformer, based solely on attention mechanisms, dispensing with recurrence and convolutions entirely. Experiments on two machine translation tasks show these models to be superior in quality while being more parallelizable and requiring significantly less time to train. Our model achieves 28.4 BLEU on the WMT 2014 English-to-German translation task, improving over the existing best results, including ensembles, by over 2 BLEU. On the WMT 2014 English-to-French translation task, our model establishes a new single-model state-of-the-art BLEU score of 41.0 after training for 3.5 days on eight GPUs, a small fraction of the training costs of the best models from the literature. We show that the Transformer generalizes well to other tasks by applying it successfully to English constituency parsing both with large and limited training data.
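To make the attention mechanism at the core of this architecture more concrete, here is a minimal sketch of scaled dot-product attention, softmax(QK^T / sqrt(d_k)) V, written with plain Python lists. It illustrates the formula only and is not the paper's implementation; the function names and the tiny example matrices are assumptions, and real implementations use batched tensor operations and multiple heads.

```python
import math

def softmax(xs):
    """Numerically stable softmax over a list of floats."""
    m = max(xs)
    exps = [math.exp(x - m) for x in xs]
    total = sum(exps)
    return [e / total for e in exps]

def scaled_dot_product_attention(Q, K, V):
    """Compute softmax(Q K^T / sqrt(d_k)) V for row-major lists of vectors."""
    d_k = len(K[0])
    output = []
    for q in Q:
        # Similarity of this query to every key, scaled by sqrt(d_k).
        scores = [sum(qi * ki for qi, ki in zip(q, k)) / math.sqrt(d_k) for k in K]
        weights = softmax(scores)
        # Weighted average of the value vectors.
        output.append(
            [sum(w * v[j] for w, v in zip(weights, V)) for j in range(len(V[0]))]
        )
    return output

# Hypothetical toy inputs: one query, two keys, two values.
Q = [[1.0, 0.0]]
K = [[1.0, 0.0], [0.0, 1.0]]
V = [[1.0, 2.0], [3.0, 4.0]]
print(scaled_dot_product_attention(Q, K, V))
```

The query here matches the first key more closely, so the output is pulled toward the first value vector. Every query row is handled independently of the others, which is what makes the computation easy to parallelize.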

GPT-J-6B - Just like GPT-3, but you can actually download the weights and run it at home. No API sign-up required, unlike some other models we could mention, right? And just like GPT-3, there are 3 super easy example ways you can run it: via the EleutherAI website, on a Google Colab TPU, or on a single GPU on a local system.

Buy art & support a nerd!

https://t.co/kAUP0Vfq48?amp=1

Github: https://github.com/kingoflolz/mesh-transformer-jax

Requirements for this guide to running locally:

* Ubuntu 20.04

* 32 GB RAM and an Nvidia GPU with at least 24 GB VRAM

* Anaconda - https://www.anaconda.com/products/individual

== Python virtual environment (Anaconda) ==

conda create --name mesh-transformer-jax python=3.8

conda activate mesh-transformer-jax

== Download & install ==

git clone https://github.com/kingoflolz/mesh-transformer-jax.git

cd mesh-transformer-jax

sudo apt install zstd

wget https://the-eye.eu/public/AI/G....PT-J-6B/step_383500_

tar -I zstd -xf step_383500_slim.tar.zstd

pip install torch==1.8.1+cu111 torchvision==0.9.1+cu111 torchaudio==0.8.1 -f https://download.pytorch.org/whl/torch_stable.html

pip install jaxlib==0.1.67+cuda111 -f https://storage.googleapis.com..../jax-releases/jax_re

pip install -r requirements.txt # (Edited to use tensorflow-gpu 2.5.0 for no reason)

pip install jax==0.2.12

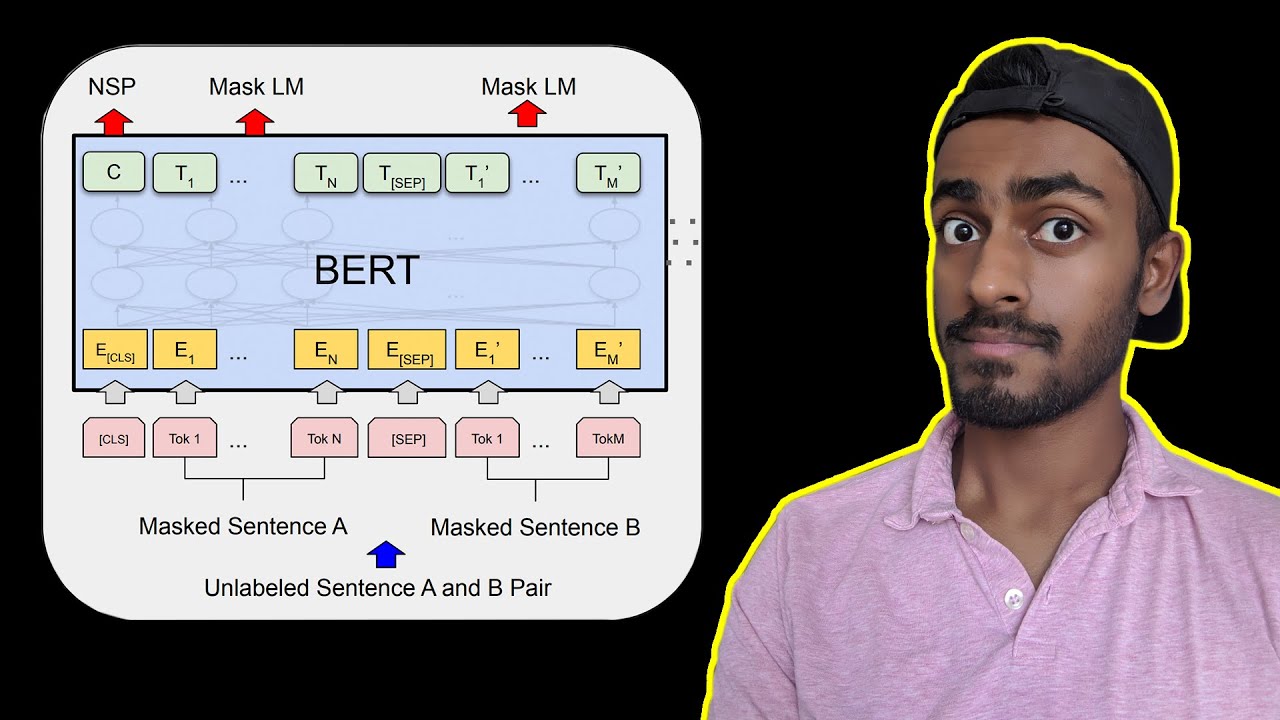

Understand the BERT Transformer in and out.

Follow me on M E D I U M: https://towardsdatascience.com..../likelihood-probabil

Please subscribe to keep me alive: https://www.youtube.com/c/Code....Emporium?sub_confirm

INVESTING: (You can get 3 free stocks setting up a webull account today): https://a.webull.com/8XVa1znjYxio6ESdff

⭐ Coursera Plus: $100 off until September 29th, 2022 for access to 7000+ courses: https://imp.i384100.net/Coursera-Plus

REFERENCES

[1] BERT main paper: https://arxiv.org/pdf/1810.04805.pdf

[2] BERT in google search: https://blog.google/products/s....earch/search-languag

[3] Overview of BERT: https://arxiv.org/pdf/2002.12327v1.pdf

[4] BERT word embeddings explained: https://medium.com/@_init_/why....-bert-has-3-embeddin

[5] More details of BERT in this amazing blog: https://towardsdatascience.com..../bert-explained-stat

[6] Stanford lecture slides on BERT: https://nlp.stanford.edu/semin....ar/details/jdevlin.p

GPT-3 is the biggest language model ever built, and it has been attracting a lot of attention. Rather than argue about whether GPT-3 is overhyped or not, we wanted to dig into the literature and understand what GPT-3 is (and is not) in light of its predecessors and alternative transformer models. In this video we share some of what we've learned. What is GPT-3 really good at? What are its constraints? How useful is it for business? Enjoy!

⏰ Time Stamps ⏰

00:40 - Comparison of latest Natural Language Processing Models

01:09 - What is a Transformer Model

01:50 - The Two Types of Transformer Models

02:15 - Difference between bi-directional encoders (BERT) and autoregressive decoders (GPT)

04:40 - GPT-3 is HUGE, does size matter?

05:24 - Presentation of size differences between GPT-3 relative to BERT, RoBERTa, GPT-2, and T5

07:40 - What does GPT do and how is it different than the BERT family?

18:05 - Is GPT-3 a Child Prodigy or a Parlor Trick?

18:44 - Back to the Issue of GPT-3's size

19:30 - Final thoughts on GPT-3 vs BERT
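One way to picture the difference between bi-directional encoders (BERT) and autoregressive decoders (GPT) mentioned in the time stamps is through their attention masks. The sketch below is an illustration under simplifying assumptions, not something shown in the video; the function names are hypothetical. A 1 at row i, column j means token i may attend to token j.

```python
def bidirectional_mask(n):
    """BERT-style mask: every token can attend to every other token."""
    return [[1] * n for _ in range(n)]

def causal_mask(n):
    """GPT-style mask: token i can attend only to tokens 0..i, never to later ones."""
    return [[1 if j <= i else 0 for j in range(n)] for i in range(n)]

for name, mask in [("bidirectional", bidirectional_mask(4)), ("causal", causal_mask(4))]:
    print(name)
    for row in mask:
        print(" ", row)
```

The lower-triangular causal mask is what lets a GPT-style model generate text one token at a time, while the full mask lets a BERT-style model use context on both sides of each token.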