Latest videos

ERRATA:

In the "original transformer" (slide 51), in the source attention, the key and value come from the encoder, and the query comes from the decoder.
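The corrected statement can be made concrete with a minimal single-head sketch in plain Python. It assumes identity query/key/value projections; real transformers use learned W_Q, W_K and W_V matrices and several heads. The function name `cross_attention` is hypothetical and does not come from the lecture slides.

```python
import math


def softmax(scores):
    # Subtract the max for numerical stability before exponentiating.
    m = max(scores)
    exps = [math.exp(s - m) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def cross_attention(decoder_states, encoder_states):
    """Single-head source (cross) attention with identity projections (an assumption).

    Queries come from the decoder; keys and values come from the encoder.
    Returns one output vector per decoder position.
    """
    d = len(encoder_states[0])
    keys = encoder_states    # K: from the encoder
    values = encoder_states  # V: from the encoder
    outputs = []
    for query in decoder_states:  # Q: from the decoder
        scores = [sum(q * k for q, k in zip(query, key)) / math.sqrt(d) for key in keys]
        weights = softmax(scores)
        # Each output is a weighted average of the encoder's value vectors.
        outputs.append([sum(w * v[j] for w, v in zip(weights, values)) for j in range(d)])
    return outputs


enc = [[1.0, 0.0], [0.0, 1.0]]              # two encoder positions
dec = [[2.0, 0.0], [0.0, 2.0], [1.0, 1.0]]  # three decoder positions
out = cross_attention(dec, enc)             # three rows: one per decoder position
```

The output has one row per decoder position, because the queries come from the decoder; the encoder only determines what can be attended to.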

In this lecture we look at the details of some famous transformer models: how they were trained, and what they could do after training.

slides: https://dlvu.github.io/slides/dlvu.lecture12.pdf

course website: https://dlvu.github.io

Lecturer: Peter Bloem

In this video, we are going to implement the GPT-2 model from scratch. We will focus only on inference, not on the training logic, and cover concepts like self-attention, decoder blocks and generating new tokens.
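The key idea behind the masked self-attention in GPT-2's decoder blocks is that each position may only look at itself and earlier positions. A minimal plain-Python sketch of that causal masking follows. It assumes identity query/key/value projections and a single head (GPT-2 learns these projections and uses several heads), and the function name is hypothetical rather than taken from the video's code.

```python
import math


def softmax(scores):
    m = max(scores)
    exps = [math.exp(s - m) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def masked_self_attention(x):
    """Causal single-head self-attention over a list of d-dimensional vectors.

    Assumption: identity query/key/value projections. Position i only
    attends to positions 0..i, so no token can see the tokens after it.
    """
    d = len(x[0])
    out = []
    for i, query in enumerate(x):
        # Future positions j > i are skipped entirely, which has the same effect
        # as setting their scores to -inf before the softmax.
        scores = [sum(q * k for q, k in zip(query, x[j])) / math.sqrt(d) for j in range(i + 1)]
        weights = softmax(scores)
        out.append([sum(w * x[j][c] for j, w in enumerate(weights)) for c in range(d)])
    return out


seq = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]
out = masked_self_attention(seq)
```

Because of the mask, changing the last vector in `seq` leaves the outputs for the first two positions unchanged, which is what allows the model to be trained to predict each next token in parallel.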

Paper: https://openai.com/blog/better-language-models/

Code minGPT: https://github.com/karpathy/minGPT

Code transformers: https://github.com/huggingface..../transformers/blob/0

Code from the video: https://github.com/jankrepl/mi....ldlyoverfitted/tree/

00:00 Intro

01:32 Overview: Main goal [slides]

02:06 Overview: Forward pass [slides]

03:39 Overview: GPT module (part 1) [slides]

04:28 Overview: GPT module (part 2) [slides]

05:25 Overview: Decoder block [slides]

06:10 Overview: Masked self attention [slides]

07:52 Decoder module [code]

13:40 GPT module [code]

18:19 Copying a tensor [code]

19:26 Copying a Decoder module [code]

21:04 Copying a GPT module [code]

22:13 Checking if copying works [code]

26:01 Generating token strategies [demo]

29:10 Generating a token function [code]

32:34 Script (copying + generating) [code]

35:59 Results: Running the script [demo]

40:50 Outro
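The chapters on token generation (26:01 and 29:10) deal with how to pick the next token from the model's output scores. Below is a plain-Python sketch of two common strategies, greedy decoding and top-k sampling with temperature; which exact strategies the video demonstrates is an assumption here, and the function names are hypothetical.

```python
import math
import random


def softmax(logits, temperature=1.0):
    # Temperature < 1 sharpens the distribution, > 1 flattens it.
    scaled = [l / temperature for l in logits]
    m = max(scaled)
    exps = [math.exp(s - m) for s in scaled]
    total = sum(exps)
    return [e / total for e in exps]


def greedy(logits):
    """Return the id of the highest-scoring token."""
    return max(range(len(logits)), key=lambda i: logits[i])


def sample_top_k(logits, k, temperature=1.0, rng=random):
    """Keep the k highest-scoring token ids, then sample one from softmax(logits / T)."""
    top = sorted(range(len(logits)), key=lambda i: logits[i], reverse=True)[:k]
    probs = softmax([logits[i] for i in top], temperature)
    return rng.choices(top, weights=probs, k=1)[0]


logits = [1.0, 3.5, 0.2, 3.0]  # toy scores for a 4-token vocabulary
print(greedy(logits))          # always token 1
print(sample_top_k(logits, k=2, temperature=0.8, rng=random.Random(0)))  # token 1 or 3
```

In a full generator, the chosen id is appended to the input and the model is run again, repeating until a length limit or an end-of-text token is reached. Greedy decoding is deterministic; top-k sampling trades some likelihood for variety.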

If you have any video suggestions or just want to chat, feel free to join the Discord server: https://discord.gg/a8Va9tZsG5

Twitter: https://twitter.com/moverfitted

Credits logo animation

Title: Conjungation · Author: Uncle Milk · Source: https://soundcloud.com/unclemilk · License: https://creativecommons.org/licenses/... · Download (9MB): https://auboutdufil.com/?id=600

Learn more about Transformers → http://ibm.biz/ML-Transformers

Learn more about AI → http://ibm.biz/more-about-ai

Check out IBM Watson → http://ibm.biz/more-about-watson

Transformers? In this case, we're talking about a machine learning model, and in this video Martin Keen explains what transformers are, what they're good for, and maybe ... what they're not so good at.

Download a free AI ebook → http://ibm.biz/ai-ebook-free

Read about the Journey to AI → http://ibm.biz/ai-journey-blog

Get started for free on IBM Cloud → http://ibm.biz/Bdf7QA

Subscribe to see more videos like this in the future → http://ibm.biz/subscribe-now

#AI #Software #ITModernization

Slides: https://sebastianraschka.com/p....df/lecture-notes/sta

-------

This video is part of my Introduction to Deep Learning course.

Next video: https://youtu.be/_BFp4kjSB-I

The complete playlist: https://www.youtube.com/playli....st?list=PLTKMiZHVd_2

A handy overview page with links to the materials: https://sebastianraschka.com/b....log/2021/dl-course.h

-------

If you want to be notified about future videos, please consider subscribing to my channel: https://youtube.com/c/SebastianRaschka

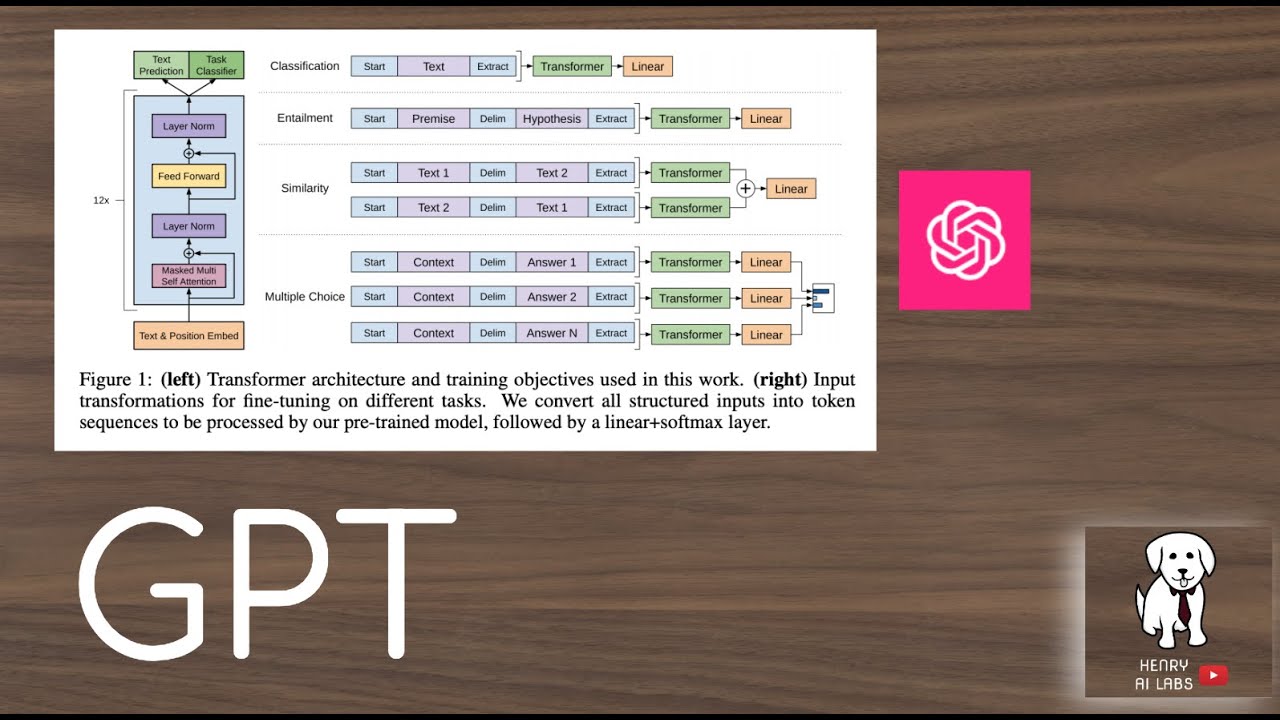

This video explains the original GPT model from the paper "Improving Language Understanding by Generative Pre-Training". I think the key takeaways are: they use a new unlabeled text dataset that forces the pre-trained language model to incorporate longer-range context; they format the input representations in a task-specific way for supervised fine-tuning; and they evaluate the model on a range of different NLP tasks!
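The input-formatting point is easiest to see on token lists. The sketch below follows the task-specific transformations described in the GPT paper (start, delimiter and extract tokens wrapped around the task's text spans). The token strings and function names are placeholders chosen for illustration, and tokenization is assumed to have already happened.

```python
# Placeholder strings for the start, delimiter and extract tokens; in the paper
# these are randomly initialized special tokens, not literal text.
START, DELIM, EXTRACT = "<s>", "$", "<e>"


def classification_input(text):
    return [START] + text + [EXTRACT]


def entailment_input(premise, hypothesis):
    return [START] + premise + [DELIM] + hypothesis + [EXTRACT]


def similarity_inputs(text_a, text_b):
    # Sentence pairs have no natural order, so both orderings are built; the
    # transformer's two final representations are added before the output layer.
    return [
        [START] + text_a + [DELIM] + text_b + [EXTRACT],
        [START] + text_b + [DELIM] + text_a + [EXTRACT],
    ]


def multiple_choice_inputs(context, question, answers):
    # One sequence per candidate answer; their scores are softmax-normalized.
    return [[START] + context + question + [DELIM] + answer + [EXTRACT] for answer in answers]


print(entailment_input(["it", "rains"], ["it", "is", "wet"]))
```

The design choice is that the pre-trained transformer itself stays unchanged: every task is turned into one or more ordered token sequences, and only a small output layer on top of the extract token's representation is added for fine-tuning.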

Paper Links:

GPT: https://s3-us-west-2.amazonaws.....com/openai-assets/r

DeepMind "A new model and dataset for long range memory": https://deepmind.com/blog/arti....cle/A_new_model_and_

SQuAD: https://rajpurkar.github.io/SQuAD-explorer/explore/v2.0/dev/Oxygen.html?model=BiDAF%20+%20Self%20Attention%20+%20ELMo%20(single%20model)%20(Allen%20Institute%20for%20Artificial%20Intelligence%20[modified%20by%20Stanford])&version=v2.0

MultiNLI: https://www.nyu.edu/projects/bowman/multinli/

RACE: https://arxiv.org/pdf/1704.04683.pdf

Quora Question Pairs: https://www.quora.com/q/quorad....ata/First-Quora-Data

CoLA: https://arxiv.org/pdf/1805.12471.pdf

Thanks for watching! Please Subscribe!

Plausible text generation has been around for a couple of years, but how does it work - and what's next? Rob Miles on Language Models and Transformers.

More from Rob Miles: http://bit.ly/Rob_Miles_YouTube

AI YouTube Comments: https://youtu.be/XyMdpcAPnZc

Thanks to Nottingham Hackspace for providing the filming location: http://bit.ly/notthack

https://www.facebook.com/computerphile

https://twitter.com/computer_phile

This video was filmed and edited by Sean Riley.

Computer Science at the University of Nottingham: https://bit.ly/nottscomputer

Computerphile is a sister project to Brady Haran's Numberphile. More at http://www.bradyharan.com

Slides: https://sebastianraschka.com/p....df/lecture-notes/sta

-------

This video is part of my Introduction to Deep Learning course.

Next video: https://youtu.be/LOCzBgSV4tQ

The complete playlist: https://www.youtube.com/playli....st?list=PLTKMiZHVd_2

A handy overview page with links to the materials: https://sebastianraschka.com/b....log/2021/dl-course.h

-------

If you want to be notified about future videos, please consider subscribing to my channel: https://youtube.com/c/SebastianRaschka

Dale’s Blog → https://goo.gle/3xOeWoK

Classify text with BERT → https://goo.gle/3AUB431

Over the past five years, Transformers, a neural network architecture, have completely transformed state-of-the-art natural language processing. Want to translate text with machine learning? Curious how an ML model could write a poem or an op-ed? Transformers can do it all. In this episode of Making with ML, Dale Markowitz explains what transformers are, how they work, and why they're so impactful. Watch to learn how you can start using transformers in your app!

Chapters:

0:00 - Intro

0:51 - What are transformers?

3:18 - How do transformers work?

7:41 - How are transformers used?

8:35 - Getting started with transformers

Watch more episodes of Making with Machine Learning → https://goo.gle/2YysJRY

Subscribe to Google Cloud Tech → https://goo.gle/GoogleCloudTech

#MakingwithMachineLearning #MakingwithML


First Principles of Computer Vision is a lecture series presented by Shree Nayar who is faculty in the Computer Science Department, School of Engineering and Applied Sciences, Columbia University. Computer Vision is the enterprise of building machines that “see.” This series focuses on the physical and mathematical underpinnings of vision and has been designed for students, practitioners and enthusiasts who have no prior knowledge of computer vision.

Artificial Neural Networks 3D simulation.

Subscribe to this YouTube channel or connect on:

Web: https://www.cybercontrols.org/

LinkedIn: https://www.linkedin.com/in/de....nis-dmitriev-b6a9599

Support on Patreon: https://www.patreon.com/deep_robotics

Support on PayPal, user: denis.y.dmitriev@gmail.com

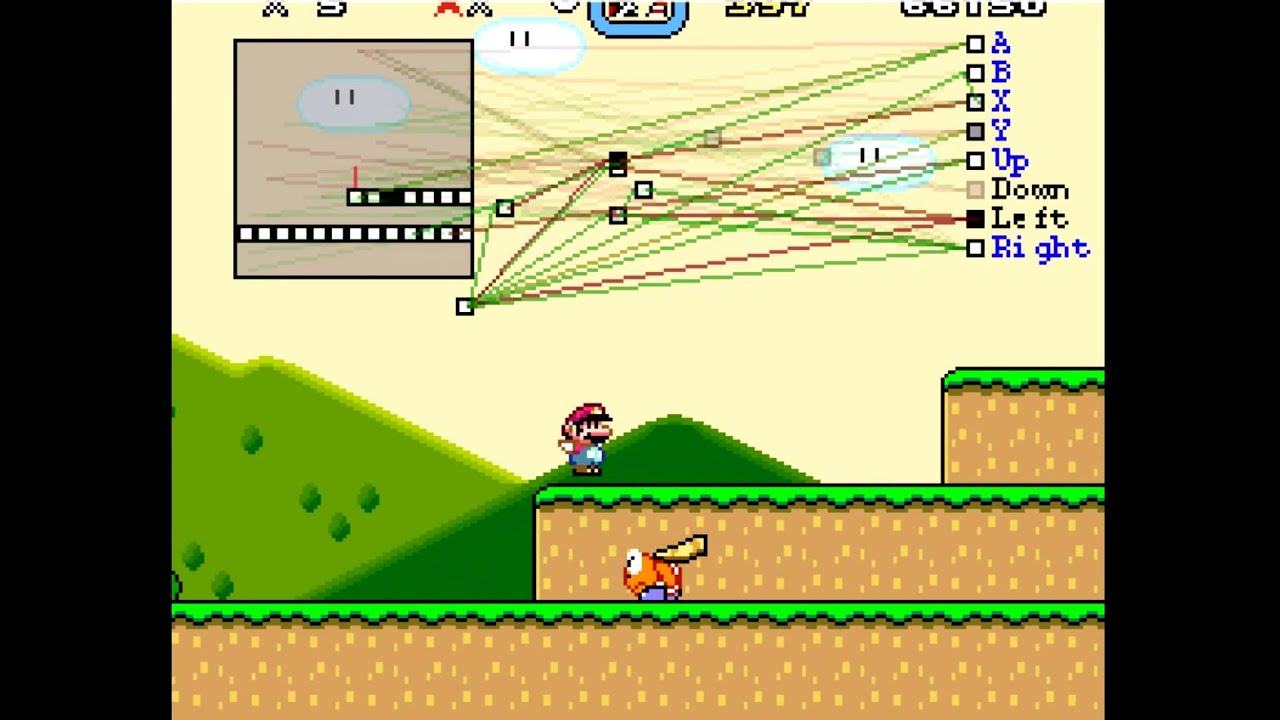

MarI/O is a program made of neural networks and genetic algorithms that kicks butt at Super Mario World.

Source Code: http://pastebin.com/ZZmSNaHX

"NEAT" Paper: http://nn.cs.utexas.edu/downlo....ads/papers/stanley.e

Some relevant Wikipedia links:

https://en.wikipedia.org/wiki/Neuroevolution

https://en.wikipedia.org/wiki/....Evolutionary_algorit

https://en.wikipedia.org/wiki/....Artificial_neural_ne

BizHawk Emulator: http://tasvideos.org/BizHawk.html

SethBling Twitter: http://twitter.com/sethbling

SethBling Twitch: http://twitch.tv/sethbling

SethBling Facebook: http://facebook.com/sethbling

SethBling Website: http://sethbling.com

SethBling Shirts: http://sethbling.spreadshirt.com

Suggest Ideas: http://reddit.com/r/SethBlingSuggestions

Music at the end is Cipher by Kevin MacLeod

This is a report of a software project that created the conditions for evolution in an attempt to learn something about how evolution works in nature. This is for the programmer looking for ideas for interdisciplinary programming projects, or for anyone interested in how evolution and natural selection work.

Before commenting on the religious/theological implications of this simulation, please note that this video in no way purports to explain all the mysteries of life and the universe.

GitHub: https://github.com/davidrmiller/biosim4

Backpropagation is the method we use to optimize parameters in a Neural Network. The ideas behind backpropagation are quite simple, but there are tons of details. This StatQuest focuses on explaining the main ideas in a way that is easy to understand.

NOTE: This StatQuest assumes that you already know the main ideas behind...

Neural Networks: https://youtu.be/CqOfi41LfDw

The Chain Rule: https://youtu.be/wl1myxrtQHQ

Gradient Descent: https://youtu.be/sDv4f4s2SB8

LAST NOTE: When I was researching this 'Quest, I found this page by Sebastian Raschka to be helpful: https://sebastianraschka.com/f....aq/docs/backprop-arb

For a complete index of all the StatQuest videos, check out:

https://statquest.org/video-index/

If you'd like to support StatQuest, please consider...

Buying my book, The StatQuest Illustrated Guide to Machine Learning:

PDF - https://statquest.gumroad.com/l/wvtmc

Paperback - https://www.amazon.com/dp/B09ZCKR4H6

Kindle eBook - https://www.amazon.com/dp/B09ZG79HXC

Patreon: https://www.patreon.com/statquest

...or...

YouTube Membership: https://www.youtube.com/channe....l/UCtYLUTtgS3k1Fg4y5

...a cool StatQuest t-shirt or sweatshirt:

https://shop.spreadshirt.com/s....tatquest-with-josh-s

...buying one or two of my songs (or go large and get a whole album!)

https://joshuastarmer.bandcamp.com/

...or just donating to StatQuest!

https://www.paypal.me/statquest

Lastly, if you want to keep up with me as I research and create new StatQuests, follow me on twitter:

https://twitter.com/joshuastarmer

0:00 Awesome song and introduction

3:55 Fitting the Neural Network to the data

6:04 The Sum of the Squared Residuals

7:23 Testing different values for a parameter

8:38 Using the Chain Rule to calculate a derivative

13:28 Using Gradient Descent

16:05 Summary
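The chapter list walks from the sum of the squared residuals, through the chain rule, to gradient descent. A minimal plain-Python sketch of those steps follows: it optimizes only the final bias b3 of a tiny network with two hidden nodes while every other weight stays fixed. The weights, the toy data, the softplus activation and the function names are all assumptions for illustration, not values taken from the video.

```python
import math


def softplus(x):
    return math.log(1.0 + math.exp(x))


# Hypothetical fixed weights and biases for a 1-input, 2-hidden-node network.
W1, B1, W2, B2 = 3.0, -1.5, -3.5, 0.5
W3, W4 = -1.2, -2.3


def network(x, b3):
    """Prediction = W3*softplus(W1*x+B1) + W4*softplus(W2*x+B2) + b3."""
    return W3 * softplus(W1 * x + B1) + W4 * softplus(W2 * x + B2) + b3


def ssr(xs, observed, b3):
    """Sum of the squared residuals."""
    return sum((o - network(x, b3)) ** 2 for x, o in zip(xs, observed))


def d_ssr_d_b3(xs, observed, b3):
    # Chain rule: dSSR/db3 = sum(dSSR/dPredicted * dPredicted/db3)
    #                      = sum(-2 * (observed - predicted) * 1)
    return sum(-2.0 * (o - network(x, b3)) for x, o in zip(xs, observed))


def fit_b3(xs, observed, b3=0.0, learning_rate=0.1, steps=1000):
    """Gradient descent on b3 alone; stops early once the step size is tiny."""
    for _ in range(steps):
        step_size = learning_rate * d_ssr_d_b3(xs, observed, b3)
        b3 -= step_size
        if abs(step_size) < 1e-6:
            break
    return b3


xs, observed = [0.0, 0.5, 1.0], [0.0, 1.0, 0.0]  # toy data
b3 = fit_b3(xs, observed)
print(b3, ssr(xs, observed, b3))
```

Because b3 enters the prediction additively, dPredicted/db3 is 1, so the derivative reduces to the summed residuals and the optimum is the b3 that makes the residuals average to zero. Backpropagation for the other weights follows the same pattern, with longer chains of derivatives.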

#StatQuest #NeuralNetworks #Backpropagation

This video uses a spatial analogy to explore why deep neural networks are more powerful than shallow ones. This is part 4 in my deep learning series: https://www.youtube.com/playli....st?list=PLbg3ZX2pWlg

We'll explore what neurons are doing individually and as a group to "understand" perceptions. It leads us to the Manifold Hypothesis.

Ms. Coffee Bean appears with the definitive introduction to Graph Neural Networks! Or, for short: GNNs. Because graphs are everywhere (almost).

▀▀▀▀▀▀▀▀▀▀▀▀▀▀▀▀▀▀▀▀▀▀▀▀▀▀

🔥 Optionally, buy us a coffee to boost our Coffee Bean production! ☕

Patreon: https://www.patreon.com/AICoffeeBreak

Ko-fi: https://ko-fi.com/aicoffeebreak

▀▀▀▀▀▀▀▀▀▀▀▀▀▀▀▀▀▀▀▀▀▀▀▀▀▀

Outline:

* 00:00 Graphs are everywhere!

* 02:32 GNNs explained

* 07:25 GNNs applications

🔗 Links:

YouTube: https://www.youtube.com/AICoffeeBreak

Twitter: https://twitter.com/AICoffeeBreak

Reddit: https://www.reddit.com/r/AICoffeeBreak/

#AICoffeeBreak #MsCoffeeBean #GCN

❤️ Check out Weights & Biases and sign up for a free demo here: https://www.wandb.com/papers

❤️ Their mentioned post is available here: https://wandb.ai/wandb/in-betw....een/reports/-Overvie

📝 The paper "Robust Motion In-betweening" is available here:

- https://static-wordpress.akama....ized.net/montreal.ub

- https://montreal.ubisoft.com/e....n/automatic-in-betwe

Dataset: https://github.com/XefPatterso....n/Ubisoft-LaForge-An

🙏 We would like to thank our generous Patreon supporters who make Two Minute Papers possible:

Aleksandr Mashrabov, Alex Haro, Alex Serban, Alex Paden, Andrew Melnychuk, Angelos Evripiotis, Benji Rabhan, Bruno Mikuš, Bryan Learn, Christian Ahlin, Eric Haddad, Eric Lau, Eric Martel, Gordon Child, Haris Husic, Jace O'Brien, Javier Bustamante, Joshua Goller, Kenneth Davis, Lorin Atzberger, Lukas Biewald, Matthew Allen Fisher, Michael Albrecht, Nikhil Velpanur, Owen Campbell-Moore, Owen Skarpness, Ramsey Elbasheer, Robin Graham, Steef, Taras Bobrovytsky, Thomas Krcmar, Torsten Reil, Tybie Fitzhugh.

If you wish to support the series, click here: https://www.patreon.com/TwoMinutePapers

Meet and discuss your ideas with other Fellow Scholars on the Two Minute Papers Discord: https://discordapp.com/invite/hbcTJu2

Thumbnail background image credit: https://pixabay.com/images/id-2122473/

Károly Zsolnai-Fehér's links:

Instagram: https://www.instagram.com/twominutepapers/

Twitter: https://twitter.com/twominutepapers

Web: https://cg.tuwien.ac.at/~zsolnai/

#gamedev